Google Research has introduced a paradigm called nested learning, designed to address catastrophic forgetting in large language models (LLMs) and to enable continual learning. In a paper presented at NeurIPS 2025, the researchers describe a critical limitation of current LLMs: they cannot form new long-term memories after training. These models can only use information that sits in their context window or fall back on knowledge acquired during pretraining. Enlarging the context window is like managing amnesia with a bigger notepad: it provides temporary relief but does not address the underlying issue.

Once pretrained, most models are static: they can perform the tasks they were trained on but cannot acquire new skills beyond what fits in their context window. Updating the weights on new data does not solve this, because it tends to cause catastrophic forgetting, where learning the new material degrades performance on what the model already knew. Each further update compounds the problem, limiting the model’s ability to adapt.

Technical Approach

Nested learning draws inspiration from neuroscience, particularly the brain’s mechanisms for memory processing. The human brain operates with varying speeds: rapid circuits address immediate tasks, while slower circuits consolidate significant patterns into long-term storage. The dynamic interplay of these systems showcases the brain’s capacity for neuroplasticity, allowing it to reconfigure itself and retain critical information over time. In contrast, LLMs are shackled to a static representation of knowledge, confined to either their context window or the static pretraining phase.

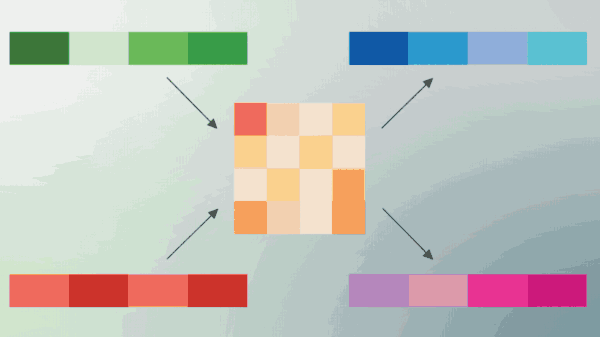

In nested learning, every component of an AI model, including the optimizer and the training algorithm, is treated as a form of memory. Backpropagation is viewed as an associative memory that maps each data point to its error signal, while optimizer state such as momentum is a memory of past gradients. The Continuum Memory System (CMS) organizes memory into modules that update at different frequencies, giving the model a temporal depth that mirrors the brain’s memory architecture.
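To make the "optimizer state as memory" idea concrete, here is a minimal sketch of a momentum term written as an exponential moving average of gradients. The function name `momentum_trace` and the `beta` value are illustrative assumptions, not taken from the paper; the point is only that a gradient seen once keeps influencing the state after it is gone.

```python
def momentum_trace(grads, beta=0.9):
    """Return the momentum state after each gradient in `grads`.

    Hypothetical helper: momentum is an exponential moving average,
    so the state is a compressed memory of the gradient history.
    """
    m = 0.0
    trace = []
    for g in grads:
        m = beta * m + (1.0 - beta) * g  # blend old memory with the new gradient
        trace.append(m)
    return trace


# A single nonzero gradient followed by zeros: the state decays but persists.
trace = momentum_trace([1.0, 0.0, 0.0], beta=0.5)
```

With `beta=0.5`, the state halves at every step once the gradient has vanished, which is the sense in which the optimizer "remembers" earlier data.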

This innovative framework allows the model to assimilate new information without overwriting existing knowledge. The learning process is decomposed into layers, each equipped with its own gradient flow and objectives. For instance, the model may be structured into three distinct layers, each contributing to the overall functionality while maintaining localized memory for step-by-step parameter updates.
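The idea of levels that update at different frequencies can be pictured with a toy example. The sketch below is an assumption-laden illustration, not the paper's CMS implementation: each hypothetical `MemoryModule` holds one scalar, accumulates gradients on every step, and applies them only once every `period` steps.

```python
from dataclasses import dataclass


@dataclass
class MemoryModule:
    """One memory level (hypothetical): updates once every `period` steps."""
    period: int
    lr: float = 0.1
    value: float = 0.0
    grad_buffer: float = 0.0
    updates: int = 0

    def observe(self, step: int, grad: float) -> None:
        # Accumulate on every step, but only write to the parameter
        # when this level's update period has elapsed.
        self.grad_buffer += grad
        if step % self.period == 0:
            self.value -= self.lr * self.grad_buffer / self.period
            self.grad_buffer = 0.0
            self.updates += 1


def run(periods=(1, 4, 16), steps=16, target=1.0):
    """Train three levels toward `target` using the loss 0.5 * (value - target)**2."""
    modules = [MemoryModule(period=p) for p in periods]
    for step in range(1, steps + 1):
        for m in modules:
            grad = m.value - target  # gradient of the quadratic loss
            m.observe(step, grad)
    return modules


modules = run()
```

In this toy setup the fast level tracks the target closely while the slow level changes rarely, which is the intuition behind keeping older knowledge in slowly updated modules.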

Benchmark Performance and Evaluation

Central to this research is the implementation of the HOPE architecture, which operationalizes nested learning principles. HOPE integrates long-term memory modules termed Titans, which store information based on its novelty to the model. This architecture stratifies various types of memory and utilizes CMS blocks to facilitate larger context windows. In practice, fast layers handle real-time inputs, while slower layers distill essential information for long-term retention, enabling the model to adaptively modify its update protocols as it learns. This approach significantly deviates from traditional “pretrain and freeze” models.
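Storing information "based on its novelty" can be illustrated with a thresholded write rule. The sketch below is a deliberately simplified stand-in and not the Titans update rule: the class `NoveltyMemory`, the scalar values, and the `threshold` parameter are all hypothetical, and surprise is measured here as the plain gap between what the memory would predict and what it observes.

```python
class NoveltyMemory:
    """Toy key-value memory that only writes surprising observations."""

    def __init__(self, threshold=0.5):
        self.threshold = threshold  # hypothetical surprise cutoff
        self.store = {}             # key -> last stored value

    def surprise(self, key, value):
        # Unknown keys are predicted as 0.0, so new items look surprising.
        predicted = self.store.get(key, 0.0)
        return abs(value - predicted)

    def write(self, key, value):
        """Store `value` only if it differs enough from the prediction."""
        if self.surprise(key, value) > self.threshold:
            self.store[key] = value
            return True
        return False


mem = NoveltyMemory(threshold=0.5)
mem.write("a", 1.0)   # novel: stored
mem.write("a", 1.2)   # close to what is already stored: skipped
```

Gating writes this way keeps routine inputs from overwriting the memory, while inputs that contradict its predictions still get recorded.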

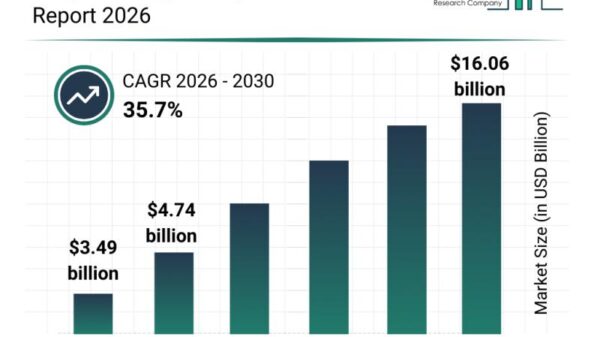

The team rigorously evaluated HOPE on tasks encompassing language modeling and reasoning, employing models with 1.3 billion parameters trained on a dataset comprising 100 billion tokens. The results indicated that HOPE not only surpassed Transformer++, but also outperformed contemporary architectures such as RetNet and DeltaNet in various performance metrics. The evaluation demonstrated that HOPE achieved the lowest loss and highest benchmark scores, although the margins were modest.

Moreover, HOPE performed well on long-context and retrieval tasks, which require the model to sift through large amounts of text to find particular items. The tests spanned parameter counts from 340 million to 1.3 billion, and HOPE showed consistent gains across that range. The authors assert that HOPE can outperform both conventional transformers and modern recurrent networks, and say that independently reproducible results are available on GitHub.

In summary, nested learning represents a significant stride in the evolution of AI models, addressing the limitations of current architectures in continuous learning environments. By mimicking the brain’s layering of memory processes, this approach offers a promising pathway for developing more adaptable and robust AI systems. The implications of this research extend beyond theoretical advancements, presenting opportunities for practical applications across various domains of artificial intelligence.
