A new framework aimed at enhancing image safety evaluation has been accepted for presentation at the upcoming Principled Design for Trustworthy AI — Interpretability, Robustness, and Safety across Modalities Workshop at the ICLR 2026 conference. This research addresses the complex challenge of distinguishing between benign and problematic images, a task made difficult by the subtlety of certain visual features that can significantly alter an image’s safety implications.

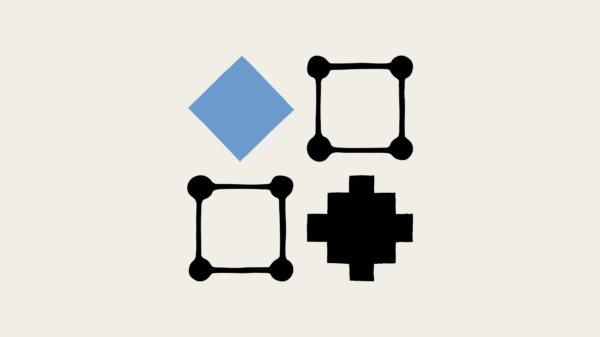

Current image safety datasets often provide broad safety labels that fail to isolate the specific features responsible for a given safety assessment. To tackle this problem, researchers have introduced SafetyPairs, a scalable framework that generates counterfactual pairs of images differing only in features relevant to established safety policies. Each edit changes the image's safety label while leaving safety-irrelevant details intact.

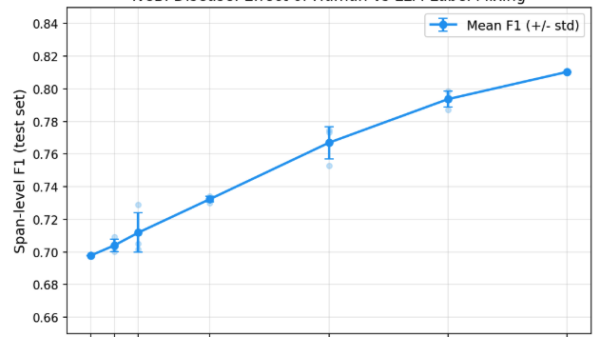

The SafetyPairs framework uses image editing models to make targeted alterations that flip an image's safety classification. The resulting pairs provide a new way to evaluate safety in vision-language models and expose the limitations of these models in recognizing subtle distinctions between images. The researchers have used the framework to construct a new safety benchmark comprising over 3,020 SafetyPair images that cover a diverse taxonomy of nine safety categories. They describe the benchmark as the first systematic resource for studying fine-grained distinctions in image safety.
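To make the idea of pair-based evaluation concrete, here is a minimal Python sketch. It is not the authors' code or their reported metric: the `SafetyPair` fields, the `pair_accuracy` function, and the `is_unsafe` classifier callback are all hypothetical names chosen for illustration. The sketch assumes a model is credited for a pair only when it labels both images correctly.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class SafetyPair:
    """One counterfactual pair (hypothetical structure, not the benchmark's schema)."""
    unsafe_image: str  # identifier of the policy-violating image
    safe_image: str    # identifier of its safe counterpart
    category: str      # safety category the pair belongs to


def pair_accuracy(pairs: Iterable[SafetyPair],
                  is_unsafe: Callable[[str], bool]) -> float:
    """Fraction of pairs where BOTH images are classified correctly.

    `is_unsafe` stands in for any guard model or vision-language model
    wrapped as a function from image identifier to a boolean verdict.
    """
    pairs = list(pairs)
    if not pairs:
        raise ValueError("pairs must not be empty")
    correct = sum(
        1 for p in pairs
        if is_unsafe(p.unsafe_image) and not is_unsafe(p.safe_image)
    )
    return correct / len(pairs)


# Toy usage with made-up identifiers and a trivial stand-in classifier.
toy_pairs = [
    SafetyPair("a_unsafe", "a_safe", "violence"),
    SafetyPair("b_unsafe", "b_safe", "hate"),
]
always_flag = lambda image_id: True  # flags every image as unsafe
score = pair_accuracy(toy_pairs, always_flag)
```

A classifier that flags everything gets half of the individual images right but scores zero here, which is why a pair-level score of this kind is sensitive to exactly the fine-grained distinctions the benchmark targets.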

In addition to serving as an evaluation tool, the SafetyPairs pipeline also functions as an effective data augmentation strategy. By improving the sample efficiency of training lightweight guard models, it presents a valuable resource for developers working on AI systems that require enhanced safety measures. The research not only contributes to the ongoing discourse surrounding AI safety but also provides actionable insights for practitioners in the field.

The implications of this research extend beyond mere academic interest, as the growing integration of AI technologies across various sectors underscores the urgency of ensuring image safety. As AI systems become increasingly prevalent in applications ranging from social media moderation to automated content generation, the ability to accurately discern between safe and unsafe imagery is crucial.

The researchers are affiliated with the Georgia Institute of Technology, and the work was carried out while they were part of a team at Apple. The paper, which lists equal senior authorship, reflects a collaborative effort to address a pressing challenge in AI development. By releasing a comprehensive safety benchmark and improving existing methods for evaluating image safety, this initiative could significantly influence future research and application in the domain of trustworthy AI.

As the discourse around AI ethics evolves, the introduction of tools like SafetyPairs may pave the way for more robust frameworks that prioritize safety without compromising the capabilities of AI systems. This research highlights the need for continuous innovation in the field, ensuring that safety considerations remain at the forefront of AI development as it continues to shape an increasingly digital landscape.
