Anthropic confirmed on Tuesday that a misconfigured software package led to the leak of much of the source code of its prominent product, Claude Code. This incident follows a separate leak reported last week, in which thousands of files were inadvertently made public.

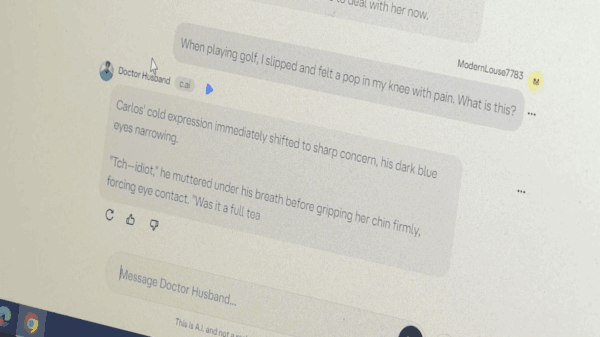

The leak was uncovered by security researcher Chaofan Shou, who discovered that the official npm package for Claude Code contained a source map (`.map`) file referencing the original, unobfuscated TypeScript source. Shou shared his findings on X, where they drew considerable attention within the tech community.

The file pointed to a zip archive stored in Anthropic’s Cloudflare R2 storage bucket, which anyone could download. The archive reportedly contained approximately 1,900 TypeScript files totaling more than 512,000 lines of code, including full libraries of slash commands and built-in tools.

Within hours of the discovery, the exposed code was uploaded to GitHub, where it was forked more than 41,500 times, according to The Register. That rapid spread meant the exposure could not realistically be reversed.

In response to the incident, an Anthropic spokesperson stated, “Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We’re rolling out measures to prevent this from happening again.”

This leak comes just days after Fortune reported that Anthropic had inadvertently made thousands of files publicly accessible, including a draft blog post detailing an upcoming model known internally as “Mythos” or “Capybara,” which reportedly raises cybersecurity concerns.

Software engineer Gabriel Anhaia, who published an analysis of the leaked code, drew a practical lesson for development teams. He noted that the source map file included in the npm package is a debugging aid that maps minified code back to the original source. “Including one in a production npm publish effectively ships your entire codebase in readable form,” Anhaia wrote. He urged engineering teams to make sure such files are excluded from their publish configurations, warning that a single misconfigured `.npmignore` file or `files` field in `package.json` can expose sensitive information.
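To make Anhaia's advice concrete, here is a minimal TypeScript sketch of a pre-publish check. It is not Anthropic's tooling and not an npm feature; it assumes you already have the list of paths that would go into the package tarball (for example, the file list that `npm pack --dry-run` reports) and, optionally, a parsed source map. The names `findSourceMaps`, `embeddedSourceCount` and `assertNoSourceMaps`, and the sample data, are hypothetical. The `sourcesContent` field belongs to the Source Map revision 3 format; when populated, it carries the original source text verbatim, which is one way a shipped map can expose a codebase.

```typescript
// Minimal shape of a Source Map revision 3 file; only the fields used here.
interface SourceMapV3 {
  version: number;
  sources: string[];
  // When present, holds the original source text for each entry in `sources`
  // (null where a source's text is not embedded).
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Returns every path in a package file listing that ends in ".map".
function findSourceMaps(files: string[]): string[] {
  return files.filter((path) => path.endsWith(".map"));
}

// Counts how many original source files a map embeds verbatim.
// A non-zero count means the map alone is enough to read that source.
function embeddedSourceCount(map: SourceMapV3): number {
  return (map.sourcesContent ?? []).filter((s) => typeof s === "string").length;
}

// Hypothetical pre-publish gate: throws if any source map would be shipped.
function assertNoSourceMaps(files: string[]): void {
  const maps = findSourceMaps(files);
  if (maps.length > 0) {
    throw new Error(`Refusing to publish, source maps found: ${maps.join(", ")}`);
  }
}

// Hypothetical listing, e.g. transcribed from `npm pack --dry-run`.
const listing = ["package.json", "README.md", "cli.js", "cli.js.map"];
const flagged = findSourceMaps(listing); // ["cli.js.map"]
console.log(`Source maps in package: ${flagged.join(", ")}`);

// Hypothetical map that embeds one of its two original files.
const sampleMap: SourceMapV3 = {
  version: 3,
  sources: ["src/main.ts", "src/tools.ts"],
  sourcesContent: ["export const answer = 42;\n", null],
  mappings: "",
};
console.log(`Embedded sources: ${embeddedSourceCount(sampleMap)}`);
```

A stricter alternative is an allowlist in the `files` field of `package.json` that names only the compiled JavaScript and type declarations, so `.map` files never match in the first place; a check like the one above then acts as a second line of defense.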

As experts examined the newly available source code, many expressed admiration for the quality of the work. Prominent tech blogger Robert Scoble remarked on social media, “Notice no one said the code is slop. In every painful moment, there are always gifts. The gift is that we all know now that Anthropic’s code is pretty damn good.”

However, the leak hands Anthropic’s competitors a significant advantage: they can now study the inner workings of one of the company’s most successful products. In a rapidly evolving AI landscape, that visibility could show rival firms which features and capabilities make Claude Code appealing to users.

The incident is a reminder that release packaging, not just application code, can expose a company’s intellectual property, particularly in the fast-paced world of artificial intelligence. With the stakes higher than ever, companies need to scrutinize exactly what their build and publish steps ship. As Anthropic moves forward, it will need to tighten its release protocols to prevent future leaks while maintaining its competitive edge in the growing AI market.
