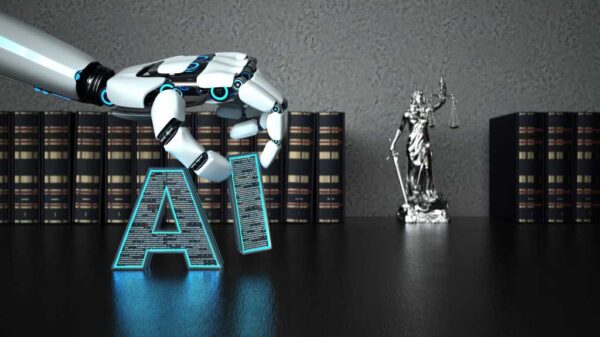

Anthropic, the AI startup co-founded by former OpenAI research executive Dario Amodei, has secured a preliminary injunction against the Pentagon in a legal battle over its designation as a “supply chain risk.” The ruling, issued by US District Judge Rita Lin in California, temporarily prevents the Department of War from labeling the company as a threat to national security due to its AI model, Claude. However, industry experts caution that the fight is far from over, as significant risks to Anthropic’s business remain.

Judge Lin’s 43-page order stated that the Trump administration acted improperly in designating Anthropic as a supply chain risk, a label that had never before been applied to an American company. The classification put Anthropic’s approximately $200 million contract with the Pentagon at stake and strained its partnerships with other federal agencies. The ruling noted that at least three contractors ended their collaborations with Anthropic, and that deals worth over $180 million fell through despite being nearly finalized.

Legal experts and lobbyists suggest that the preliminary injunction does little to eliminate the uncertainty hanging over both Anthropic and the broader tech sector. Notably, a parallel case challenging the designation is pending in the DC Circuit Court of Appeals. The Pentagon’s supply chain risk designation rests on two statutes, one of which, 41 USC 4713, falls exclusively within the DC Circuit’s jurisdiction. Until that court rules, the designation remains effectively in place.

Charlie Bullock, a lawyer and senior research fellow at the Institute for Law and AI, remarked, “Practically speaking, not that much has changed on the supply chain designation for Anthropic due to this preliminary injunction. I think a lot of the public reaction to this is premature and doesn’t reflect an understanding of the actual situation.” He emphasized the possibility that the DC Circuit could rule differently from Judge Lin, leaving the cloud of uncertainty hanging over Anthropic.

The implications extend beyond Anthropic itself. A senior official at a tech trade association told Politico that “as long as the cases and appeals are pending, businesses will not have 100% clarity and certainty regarding the impact of [the Pentagon’s] use of the supply chain designation in this way.” This ongoing ambiguity creates a precarious environment for other tech companies operating in sensitive sectors.

Paul Lekas, head of global public policy at the Software and Information Industry Association, noted, “A cloud remains over the business community.” Former national security official Saif Khan added, “After yesterday’s ruling, at least one of the supply chain risk designations is gone. But for Anthropic, from a business perspective, you need both of them gone before it actually helps you. This is really unpredictable. So it’s a frustrating situation for Anthropic.”

The ongoing legal saga highlights significant challenges for AI companies navigating the intersection of technology and national security. As the government weighs the implications of AI deployment in military applications, startups like Anthropic find themselves in a precarious position, caught between innovation and regulation. The outcome of the DC Circuit’s ruling will likely have far-reaching consequences, shaping the landscape for AI technologies in the defense sector and beyond.
