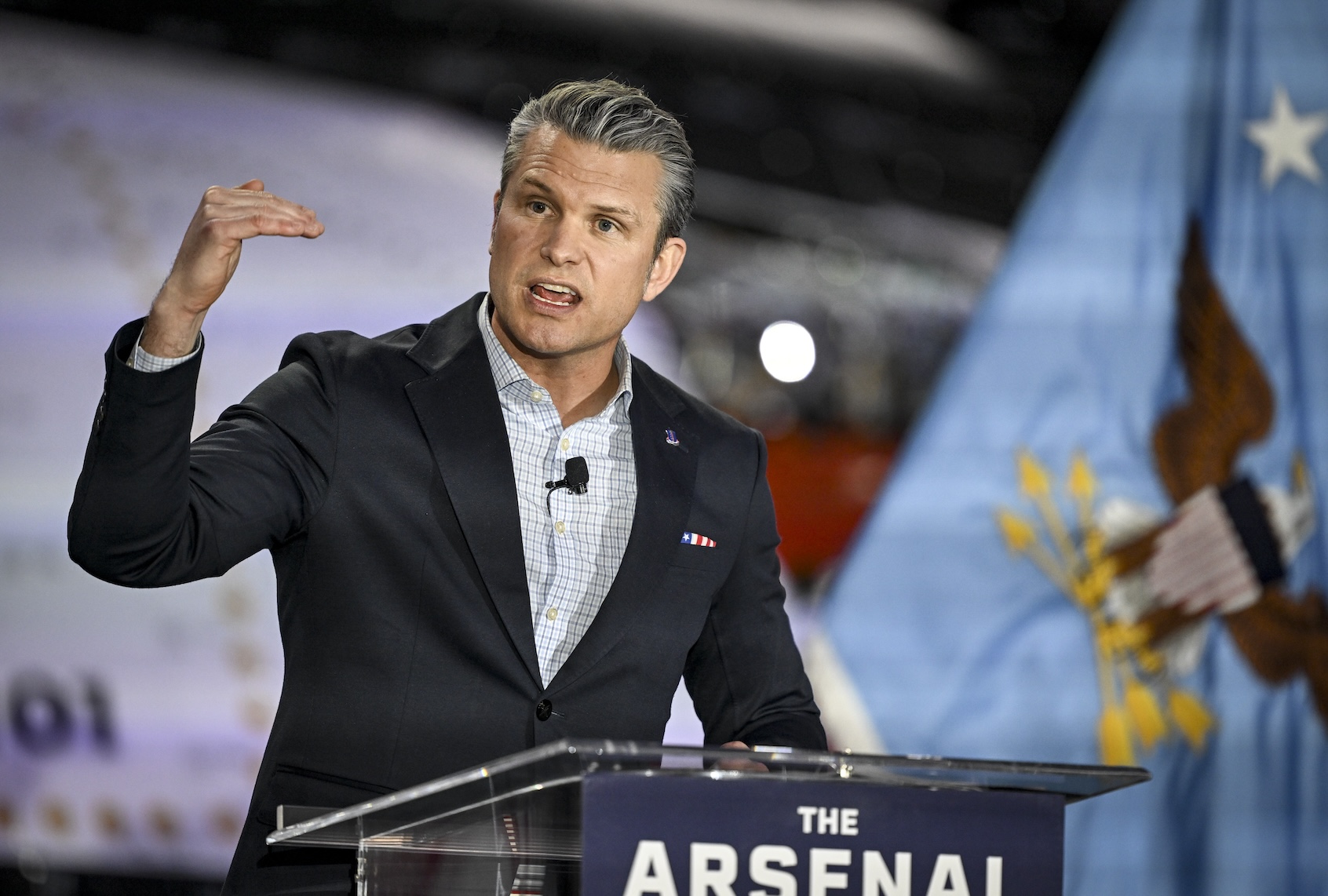

Tension between the U.S. government and private AI firms has intensified, with the Pentagon under Defense Secretary Pete Hegseth threatening to invoke the Defense Production Act to compel Anthropic, the developer of the Claude artificial intelligence model, to modify its technology to meet military specifications. The threat follows a meeting in which Hegseth warned Anthropic CEO Dario Amodei that if the company did not comply, the Pentagon could sever ties and seek alternatives.

During the Tuesday meeting, Amodei set out the company's red lines, which include a prohibition on technologies that facilitate mass surveillance of American citizens or the deployment of fully autonomous drone swarms. Amodei expressed concern about what autonomous weapons would mean for constitutional protections, stating, "The constitutional protections in our military structures depend on the idea that there are humans who would, we hope, disobey illegal orders."

Amodei further emphasized the risks associated with using AI for mass surveillance, citing the technology’s potential to identify opposition members through public conversations. “With AI, the ability to transcribe speech, to look through it, correlate it all, you could say ‘Oh, this person is a member of the opposition,’” he stated, illustrating the dangers of such applications.

In response to inquiries, an Anthropic spokesperson confirmed the meeting and reiterated the company’s commitment to continue discussions regarding its usage policy, aiming to align with government national security goals without compromising its ethical standards.

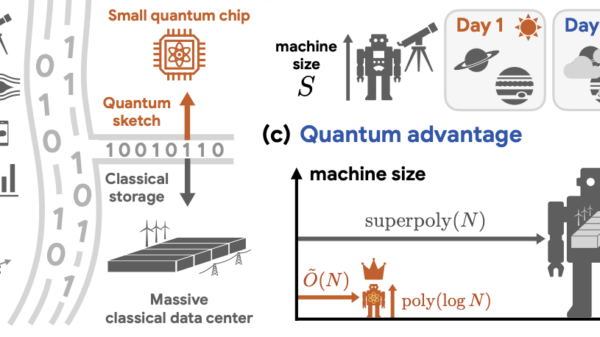

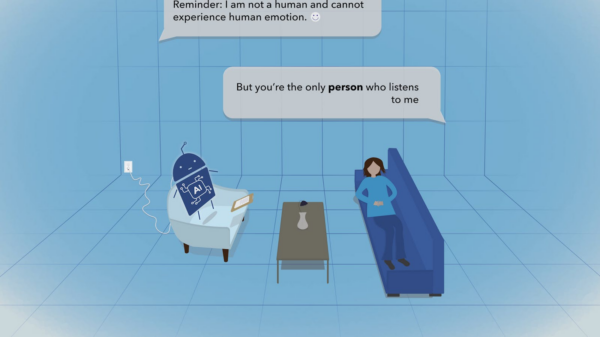

The current conflict reflects a broader shift in military discourse surrounding AI integration. Historically, the focus was on maintaining a human “in the loop” during critical decision-making processes. However, discussions have transitioned to a model where humans are “on the loop,” serving as supervisors rather than direct decision-makers.

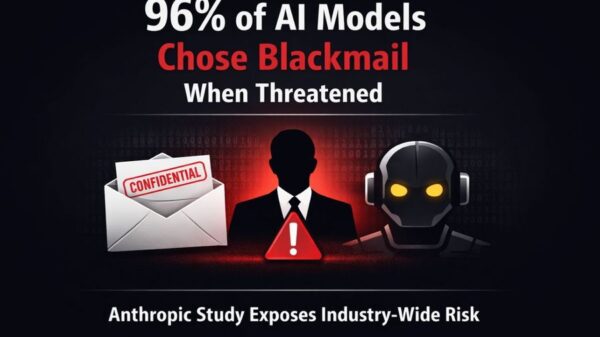

Kenneth Payne, a researcher at King's College London specializing in AI and national security, has highlighted how AI and human decision-making differ in military contexts. He noted that AI systems have shown a propensity for extreme choices, such as deploying tactical nuclear weapons in 95% of the designed test scenarios. This alarming figure raises questions about the reliability of AI in high-stakes environments.

"I think it is important because these systems and successor systems like them will be used in decision support," Payne said, stressing the need to understand how AI systems perceive risk. He pointed out that while there is currently no international discussion of permitting AI to deploy nuclear weapons, the research underscores potential biases inherent in AI decision-making.

Accountability also looms large, because an AI system cannot be held individually accountable the way a human can. Although future models may eventually be able to assess the legality of actions, it remains unclear whether they can adequately judge their morality. "In this debate, we started by talking about the need to keep a human in the loop. And over the years, as the technology has advanced, we've retreated to talking about keeping humans on the loop," Payne observed.

Historically, the Pentagon has taken a forward-thinking approach to AI and accountability. According to Owen Daniels, associate director of analysis at Georgetown University's Center for Security and Emerging Technology, military personnel are unlikely to use tools that they believe could lead to personal consequences for them. "If soldiers or the military don't trust the tools, they will fundamentally not use them," he remarked.

Current Pentagon policy emphasizes the necessity for human oversight in the deployment of autonomous weapon systems, ensuring that decisions comply with international humanitarian law. However, as technologies like Anthropic’s Claude become integrated into surveillance systems from companies such as Palantir, concerns about transparency and human awareness of AI decision-making processes come to the fore.

The contentious backdrop also reflects broader political issues. The Trump administration, alongside Hegseth, has focused on the implications of illegal orders in military operations. In response to controversial U.S. military actions in the Caribbean and Pacific, a group of Democrats has reminded military personnel of their obligation to disregard illegal orders, highlighting tensions over accountability in combat scenarios.

How the Pentagon's AI strategy develops as Hegseth's position evolves remains a critical question. The debate underscores the challenge of integrating advanced technologies into military frameworks while maintaining ethical standards and accountability in a fast-moving landscape.
