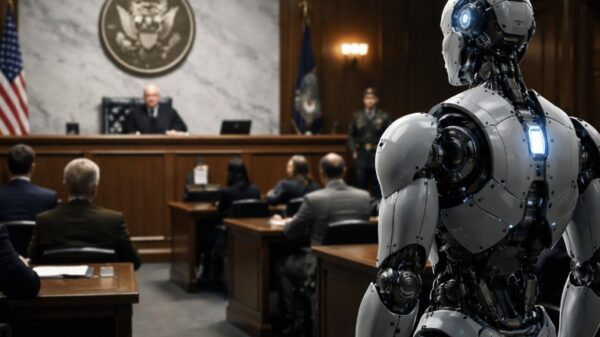

Staff members in the United States Senate have been officially authorized to use only three generative AI chatbots for work-related tasks: ChatGPT, Gemini, and Microsoft Copilot. The decision was communicated in an internal memo issued by the Chief Information Officer of the Senate Sergeant at Arms. The document, first reported by The New York Times and later obtained by Business Insider, lists the platforms that Senate staff are permitted to use when handling official data.

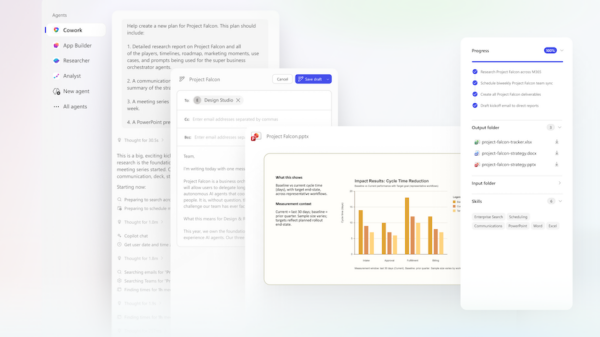

Among the approved tools, Microsoft Copilot received particular attention in the memo because of its integration with the Senate’s existing Microsoft 365 software environment. The assistant can help staff with routine administrative and research tasks, including drafting and editing documents, summarizing large volumes of information, preparing talking points and briefing materials, and conducting research or analysis. Copilot operates within Microsoft’s secure government cloud infrastructure and will not access Senate data unless the information is deliberately shared within a prompt. The memo also clarified that the system cannot automatically search internal drives, emails, Teams chats, or shared folders.

Notably absent from the Senate’s approved list is Grok, created by xAI. Another widely used AI assistant, Claude, has also not yet been authorized for use. Internal Senate IT discussions suggest that Claude remains under review. The company behind Claude, Anthropic, has reportedly been involved in a policy dispute with the Trump administration over restrictions tied to potential use of its technology in areas such as mass surveillance and autonomous weapons.

The Senate’s approval framework is more cautious than that of the United States House of Representatives, which has already permitted staff to use four AI tools: ChatGPT, Gemini, Copilot, and Claude. According to the POPVOX Foundation, the Senate’s narrower list reflects a more measured strategy toward incorporating artificial intelligence into government operations, as lawmakers and administrators continue to evaluate the technology’s security, privacy, and policy implications.

The Senate’s cautious route illustrates growing concerns about the use of AI in government work. As AI technology evolves rapidly, institutions are grappling with how to integrate its capabilities while safeguarding sensitive information. The decision to limit the approved tools may reflect the current regulatory landscape and the ongoing debates about ethical considerations in AI deployment.

As the landscape for AI in government continues to evolve, the implications of this decision may extend beyond the walls of the Senate. The choices made today could shape AI integration across other areas of public administration, influencing not only how tasks are accomplished but also how data privacy is maintained. The Senate’s decision highlights the importance of striking a balance between using advanced technology and protecting the integrity of governmental processes.

This measured approach invites scrutiny from both advocates for technological advancement and those concerned about the potential risks associated with AI. As discussions deepen around AI’s role in governance, the dialogue between innovation and caution will likely guide the trajectory of future policies, ensuring that while technology is embraced, public trust and security are preserved.

First Published on March 12, 2026, 17:42:09 IST
