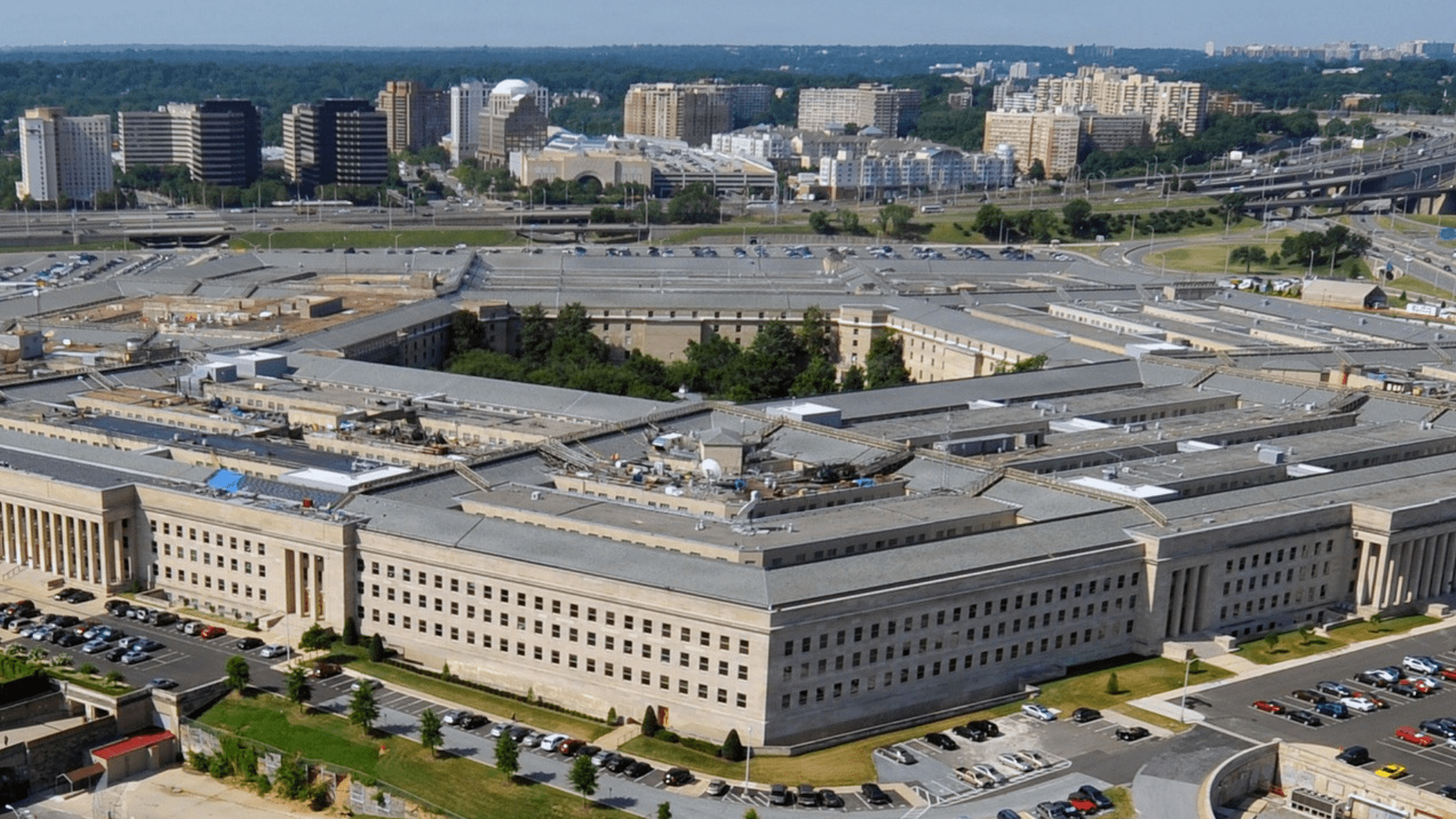

Caitlin Kalinowski, the hardware lead at OpenAI, has resigned from her position after voicing concerns regarding the company’s recent partnership with the U.S. Department of War. In a post shared on X, Kalinowski indicated that her departure followed OpenAI’s decision to deploy its AI models on the Pentagon’s classified cloud networks, a move she believes was made too hastily without adequate internal or public discourse on its broader implications.

Kalinowski emphasized that while artificial intelligence can be pivotal in enhancing national security, certain ethical and governance boundaries require more extensive consideration. She pointed to critical issues such as the surveillance of Americans without judicial oversight and the potential development of lethal autonomous systems lacking clear human authorization. “These are too important for deals or announcements to be rushed,” she stressed in a follow-up post.

In the wake of Kalinowski’s resignation, OpenAI defended its approach to the partnership, asserting that it includes additional safeguards designed to limit the application of its technology. The company reiterated its commitment to prohibiting domestic surveillance and the deployment of autonomous weapons. In a statement to Reuters, OpenAI acknowledged that its involvement in this sector could provoke strong opinions and indicated its intention to continue engaging with various stakeholders, including employees, government representatives, and civil society groups, as the conversation develops.

OpenAI had revealed its partnership with the Pentagon just over a week ago, following unsuccessful negotiations between the Department of War and Anthropic, another AI firm, which had sought assurances that its technology would not be used for mass surveillance or fully autonomous weaponry. OpenAI CEO Sam Altman said in a post that the contract includes protections mirroring the ones that had been sticking points in Anthropic’s talks.

Altman said the agreement reflects two of OpenAI’s core safety principles: a ban on domestic mass surveillance and the necessity for human accountability in the use of force, particularly in autonomous systems. He noted that the Department of War already reflects these principles in its laws and policies, and said they have been incorporated into the contract.

“We also will build technical safeguards to ensure our models behave as they should, which the Department of War also wanted,” Altman wrote on X. He said OpenAI intends to deploy fail-safe mechanisms to enhance model safety and will operate exclusively on cloud networks. Altman also urged the Department of War to extend similar terms to all AI companies, suggesting that such conditions should be industry standards. He expressed a preference for resolving conflicts through pragmatic agreements rather than through legal or governmental action.

During an all-hands meeting, Altman reportedly told employees that the government would allow OpenAI to develop its own “safety stack” to prevent misuse of its technology. He assured them that if an AI model declines to perform a specific task, the government would not compel the company to override that decision.

The dispute reflects broader concerns about the ethics of deploying advanced AI in military applications. As companies like OpenAI take on defense work, the balance between innovation and responsibility is likely to remain under scrutiny from employees, government representatives, and civil society groups.
