The rise of Artificial Intelligence (AI) is transforming the global landscape. Companies specializing in AI tools have become some of the most valuable corporations in the world, with market valuations that exceed the GDPs of numerous countries. This disruption is reshaping social, commercial, and political dynamics, particularly in the media industry, which is grappling with new challenges stemming from AI’s capabilities. Journalism, essential for healthy democracies, is undergoing changes that many consumers may not immediately recognize.

Understanding AI’s impact on information environments and its political implications necessitates a basic grasp of generative AI (GenAI) and its operational mechanisms. This knowledge is critical as the influence of AI on the information we consume continues to grow.

The foundation of GenAI lies in vast data collection: text, images, videos, and sounds gathered by crawling and scraping the internet. This data encompasses journalism, academic outputs, and public web content, often supplemented by commercially licensed materials. The legality of these data collection practices remains ambiguous, sparking copyright and privacy litigation globally and igniting policy debates over the conditions under which data can be accessed. Creatives have voiced concerns because their work forms the backbone of the substantial revenues generated by multinational AI firms.

However, mere access to data is insufficient for AI technologies. Converting raw data into meaningful training datasets requires extensive computational processes and human effort. Data workers engage in tasks like labeling, cleaning, tagging, and annotating, thereby creating semantic links that enable GenAI models to respond meaningfully to user prompts. Much of this labor is outsourced to lower-cost countries such as Kenya, India, and China, where workers often receive low wages and endure substandard labor conditions. Once prepared, these datasets are employed to train AI models through machine learning techniques.

Understanding how generative AI operates reveals significant distinctions from human learning. What is termed ‘machine learning’ is fundamentally a process of statistical pattern recognition, characterized by iterative adjustments to numerous internal values until predictions align closely with expected outcomes. After training, models like those powering ChatGPT can generate coherent text when prompted, but they do so without genuine comprehension of the subject matter. AI systems do not possess semantic knowledge; they operate as pattern-modeling engines that predict plausible continuations of given prompts.
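The "iterative adjustments" described above can be illustrated with a deliberately tiny sketch. It is not how any production GenAI system is built: the function name `train`, the two internal values, the learning rate, and the example data are all assumptions chosen for illustration, and real models adjust billions of values rather than two. The principle is the same, though: nudge the internal values a little at a time until predictions line up with the expected outcomes.

```python
def train(examples, steps=2000, learning_rate=0.05):
    """Fit prediction = weight * x + bias by repeated small adjustments.

    `examples` is a list of (input, expected_output) pairs.
    """
    weight, bias = 0.0, 0.0  # the model's "internal values", initially uninformed
    n = len(examples)
    for _ in range(steps):
        grad_w = 0.0
        grad_b = 0.0
        for x, target in examples:
            # How far off is the current prediction?
            error = (weight * x + bias) - target
            # Accumulate the gradient of the mean squared error
            grad_w += 2 * error * x / n
            grad_b += 2 * error / n
        # Nudge each internal value in the direction that reduces the error
        weight -= learning_rate * grad_w
        bias -= learning_rate * grad_b
    return weight, bias


# Hypothetical training data that happens to follow the pattern y = 2x + 1
examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
weight, bias = train(examples)
print(f"weight={weight:.3f}, bias={bias:.3f}")
```

After training, the two values settle very close to 2 and 1, so the model reproduces the pattern in its examples. At no point does it hold any notion of what the numbers mean; it has only minimized the gap between its predictions and the expected outputs.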

The predictive capabilities that make generative AI powerful also make it unreliable. These systems may produce outputs that appear fluent and coherent, but fluency and coherence are not verification or truth. When tasked with generating content, AI can quickly synthesize information or create photorealistic images that may misrepresent reality. If trained on biased or incomplete data, AI systems may “hallucinate” content, producing outputs that look accurate but are fundamentally flawed. This distinction is crucial for journalism, which is predicated on truth and verification rather than mere plausibility.

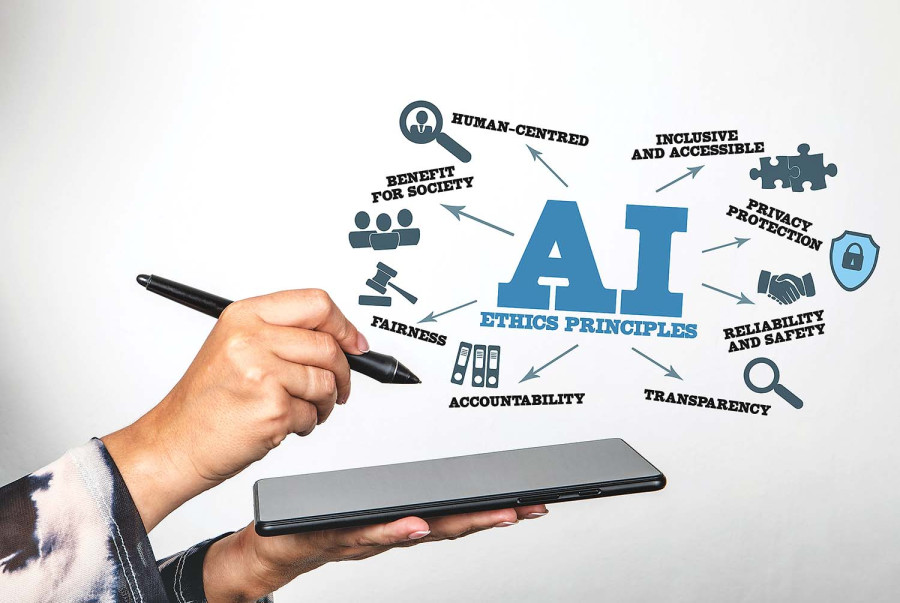

As AI-generated content proliferates, the challenge for journalists and audiences lies in verifying its accuracy. The lack of clear labeling or context for AI outputs complicates the distinction between reporting and simulation, as well as between fact and fiction. The future of journalism hinges on institutions’ ability to adapt to and govern AI’s use effectively. This entails establishing new editorial standards and verification processes, alongside increased transparency regarding the data, labor, and energy that underpin these systems.

The pressing question is not whether AI will reshape journalism, but how democratic societies can prevent AI from eroding trust in public institutions. Concerned citizens must pay attention to the provenance of their information and recognize that human verification cannot keep pace with the rapid output of chatbots that produce questionable text, data, and images. Without robust protocols and oversight mechanisms, society risks further undermining the foundational principle of shared facts, which is essential for rational discourse and for the actions that follow from it.
