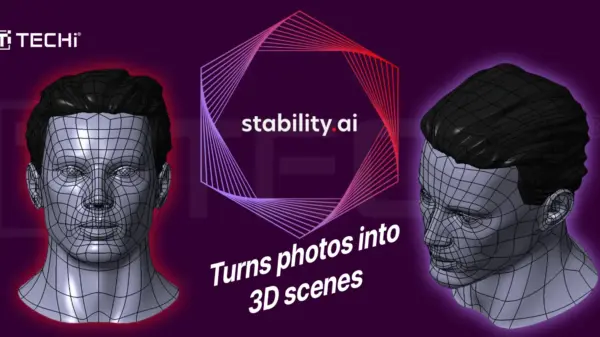

Artificial intelligence (AI) image generators are evolving rapidly, with new systems capable of producing high-quality images using significantly fewer processing steps. Researchers from the University of Surrey’s Institute for People-Centred AI and Stability AI have introduced a model known as Stable Diffusion 3.5 Flash (SD3.5-Flash), which is designed to operate efficiently on consumer devices like smartphones and laptops. This innovation highlights a shift from cloud-based processing to local execution, addressing issues of speed, privacy, and environmental sustainability.

In a study released on March 4 and uploaded to the arXiv preprint database on September 25, 2025, the researchers described how SD3.5-Flash works. Conventional AI image generators typically rely on diffusion: they begin with random pixel noise and refine it over 30 to 50 iterative steps, each of which requires considerable computation. As a result, these models usually run on large clusters of graphics processing units (GPUs) housed in remote data centers.

SD3.5-Flash compresses this process to just four steps. That efficiency lets the model generate images entirely on local hardware, with no reliance on remote servers. Hmrishav Bandyopadhyay, a doctoral researcher at the University of Surrey and one of the model's developers, noted that the gain did not come easily. “Achieving this level of efficiency is technically challenging, as it requires compressing a diffusion model to run in only a few steps while maintaining quality,” he said.
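The toy Python sketch below (using NumPy) illustrates why the step count drives cost: each refinement step calls the denoising network once, so 4 steps instead of 50 means 12.5 times fewer network evaluations. It is not SD3.5-Flash's actual method. The function `toy_velocity` is a hypothetical stand-in for a trained network, assuming an idealized straight-line path from noise to a known target image; with that perfect oracle, even four simple Euler steps land exactly on the target. A real learned model only approximates such a path, which is why cutting steps without losing quality is the hard part Bandyopadhyay describes.

```python
import numpy as np


def toy_velocity(x, t, target):
    # Hypothetical stand-in for a trained denoising network (an assumption,
    # not the SD3.5-Flash model). Along the straight path
    # x_t = (1 - t) * target + t * noise, the velocity dx/dt equals
    # noise - target, which can be rewritten as (x - target) / t.
    return (x - target) / t


def sample(target, num_steps, seed=0):
    """Integrate from pure noise (t = 1) to an image (t = 0) with Euler steps.

    Returns the final array and the number of network evaluations used.
    """
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(target.shape)  # start from random "pixel noise"
    ts = np.linspace(1.0, 0.0, num_steps + 1)  # evenly spaced time grid
    evals = 0
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        v = toy_velocity(x, t_cur, target)  # one network call per step
        evals += 1
        x = x + (t_next - t_cur) * v  # Euler update toward t = 0
    return x, evals


# A tiny 8x8 "image" used as the idealized target.
target = np.linspace(0.0, 1.0, 64).reshape(8, 8)
fast, fast_evals = sample(target, num_steps=4)
slow, slow_evals = sample(target, num_steps=50)
print(fast_evals, slow_evals, slow_evals / fast_evals)  # prints: 4 50 12.5
```

With this idealized oracle, both calls recover the same image, but the 50-step run makes 50 network evaluations where the 4-step run makes only 4. In a real model each evaluation is a full pass through a large neural network, which is where the savings come from.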

The implications of this local approach are significant. The first is privacy: because all processing happens on the device, users’ prompts and generated images are never transmitted to external servers, reducing the risk of data exposure. The second is speed. Running locally removes network latency, and the smaller number of processing steps shortens generation itself, potentially making the process nearly instantaneous.

Environmental concerns also come into play. Traditional cloud-based AI systems consume substantial energy and water resources, leading to a significant environmental footprint. In contrast, running lightweight models like SD3.5-Flash locally could lead to considerable reductions in energy demands associated with cloud processing.

According to Yi-Zhe Song, director of the SketchX Lab at the University of Surrey, the overarching goal is to make AI tools more accessible and practical. “SD3.5-Flash puts a powerful creative tool directly in users’ hands while keeping their data private and reducing the energy demands associated with cloud processing,” he said.

To check whether cutting the number of steps degraded image quality, the research team tested the model against conventional diffusion pipelines. The results showed that SD3.5-Flash can deliver image fidelity comparable to that of traditional systems.

Lenovo has already taken steps to integrate this innovative technology into its upcoming on-device AI platform, Qira. This platform aims to bring advanced AI capabilities to consumer devices, making features such as AI image generation feasible on laptops, tablets, and smartphones without requiring an internet connection. Lenovo’s recent announcement of Qira-compatible devices suggests that consumers could soon experience the advantages of this technology firsthand.

If successful, the integration of SD3.5-Flash into everyday devices may indicate a broader shift in the deployment of generative AI tools. Rather than relying solely on centralized systems, future AI applications may increasingly operate on the edge, directly embedded in personal devices. This transition represents a significant move toward making generative AI not only more efficient but also more accessible to a wider audience.

As research into compressing large models without sacrificing quality continues, SD3.5-Flash suggests that the gap between powerful AI systems and consumer-grade hardware is narrowing. If companies like Lenovo follow through with their product plans, the next generation of AI creativity tools may reside not in the cloud but in users’ pockets, changing how people engage with generative AI.
