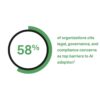

Risk-based classification is emerging as the cornerstone of AI governance compliance, according to recent analyses of the regulations and standards that will shape obligations by 2026, including the EU AI Act, NIST AI RMF, Colorado AI Act, and ISO 42001. These frameworks tier their requirements according to the risk an AI system poses, with stricter requirements for systems with higher potential for harm. The critical first step for businesses is to conduct a comprehensive inventory of all AI systems in operation, whether developed in-house, procured from vendors, or embedded in third-party products. Over half of organizations currently lack such an inventory, which makes compliance largely unattainable, because a system that has not been identified cannot be assigned a risk tier. Conversely, having this inventory significantly simplifies governance.

Employment-related applications of AI face the tightest scrutiny across jurisdictions. Technologies used in hiring, promotions, performance evaluations, and workforce management carry stringent obligations, including AI tools for screening résumés, analyzing video interviews, scoring candidates, and managing shift schedules. Measures such as Illinois’ video interview consent rules, New York City’s bias audit requirements, and the EU AI Act’s classification of hiring algorithms as high-risk make clear that compliance is not optional for businesses using AI in employment contexts, and several of these rules are already in effect.

As the regulatory landscape evolves, transparency about AI use is shifting from a voluntary practice to a mandatory obligation. More jurisdictions now require disclosure when AI significantly influences decisions that affect individuals, including customer-facing AI interactions, automated pricing, content personalization, and financial decisions such as credit or insurance approvals. With California’s AI Transparency Act and Utah’s disclosure requirements already operational, businesses that have not revised their customer-facing disclosures, terms of service, or user experience to reflect AI involvement risk falling behind.

AI governance is becoming a competitive imperative, not just a regulatory obligation. Large enterprises, healthcare organizations, and investors are embedding questions about AI governance into their vendor due diligence. The SEC’s Investor Advisory Committee has advocated for enhanced disclosures on board-level AI governance. Firms such as Partners Healthcare and MassMutual are already asking prospective vendors about their AI risk management practices before finalizing contracts. For small and medium-sized businesses seeking enterprise deals, a demonstrable AI governance framework is becoming a prerequisite for winning revenue rather than a compliance checkbox.

The complexity of compliance is unlikely to diminish in the near future. The current regulatory environment, characterized by overlapping state laws, EU enforcement actions, federal ambiguity, and swiftly changing standards, has become the new norm. It reflects heightened public concern over algorithmic bias, data misuse, and AI-driven discrimination, and those concerns are expected to persist. As 2026 approaches, organizations that have neglected AI governance can anticipate increased regulatory scrutiny and penalties. Companies that prioritize compliance and governance will likely gain a structural advantage over less-prepared competitors.
