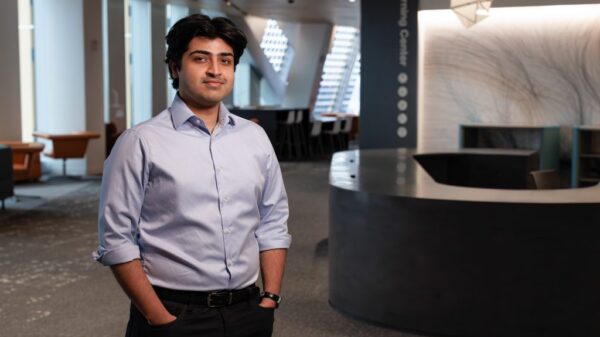

Abhishek Saxena, Head of Strategy and Growth at Sentient, has called for more rigorous stress testing of enterprise AI agents, warning that impressive demonstrations are insufficient for ensuring readiness in high-stakes production environments. As companies increasingly deploy autonomous agents that can impact compliance, financial transactions, and reputations, Saxena argues that the industry must pivot from flashy demos to substantive evaluations that prove these systems can operate reliably under pressure.

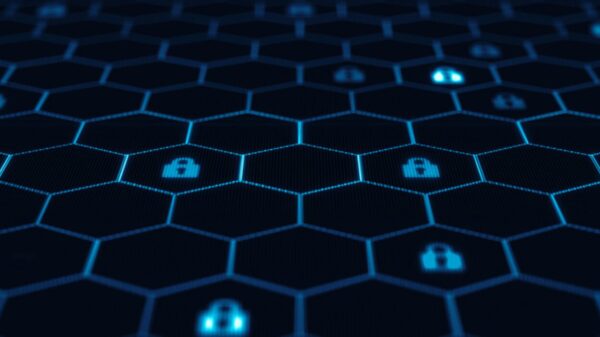

The recent launch of Nvidia’s NemoClaw illustrates the rapid move of autonomous agents from experimental phases into enterprise workflows. This platform introduces essential security measures such as sandboxing and policy guardrails, yet Saxena emphasizes that implementing security does not equate to production readiness. The critical question remains whether these agents have undergone thorough testing to perform consistently amid ambiguity, edge cases, and regulatory scrutiny.

Creating an agent capable of performing well in controlled conditions may be straightforward, but developing one that can navigate uncertainties, adapt to unexpected inputs, and function reliably under real-world conditions poses a significant engineering challenge. This complexity is where many enterprises falter, as the disparity between performance in demos and reliability in production is often underestimated.

For instance, an agent trained to handle customer support queries might successfully respond to standard inquiries but could falter when faced with a unique scenario or an edge case. Similarly, a financial agent may excel with historical data but risk making disastrous decisions if market conditions shift unpredictably. A logistics agent might perform adequately in a simulation but could struggle under the compounded effects of real-world delays and conflicting signals.

Practitioners familiar with adversarial testing recognize the pattern: systems function well until they meet the ambiguity inherent in actual operations. It exposes the limits of the current focus on rapidly building agent frameworks and points instead to the need for dependable evaluation methods before these agents take on significant responsibilities.

Saxena advocates for a systematic stress-testing infrastructure tailored to autonomous systems. Such infrastructure would deliberately introduce challenging scenarios to expose weaknesses before they surface in production. Evaluation must also be continuous rather than a single test conducted before launch. The goal is not to demonstrate that an agent works under ideal conditions but to understand how it behaves in unpredictable situations.
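To make the idea concrete, here is a minimal Python sketch of what deliberately introducing challenging scenarios could look like. It is an illustration only, not anything Saxena, Sentient, or Nvidia has described: `toy_agent`, `Scenario`, `Result`, `run_stress_suite`, and the scenarios themselves are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Scenario:
    name: str
    prompt: str
    check: Callable[[str], bool]  # True when the agent's reply is acceptable


@dataclass
class Result:
    name: str
    passed: bool
    detail: str


def toy_agent(prompt: str) -> str:
    """Hypothetical stand-in for a real agent; it only handles the happy path."""
    if "refund" in prompt.lower():
        return "APPROVE_REFUND"
    return "ESCALATE_TO_HUMAN"


def run_stress_suite(agent: Callable[[str], str],
                     scenarios: List[Scenario]) -> List[Result]:
    """Run every scenario and record failures instead of stopping at the first."""
    results = []
    for s in scenarios:
        try:
            reply = agent(s.prompt)
            results.append(Result(s.name, s.check(reply), reply))
        except Exception as exc:  # a crash counts as a failed scenario
            results.append(Result(s.name, False, f"raised {type(exc).__name__}"))
    return results


# Hypothetical scenarios: one standard request plus two deliberate edge cases.
SCENARIOS = [
    Scenario("standard refund",
             "I would like a refund for order 123.",
             lambda r: r == "APPROVE_REFUND"),
    Scenario("empty input",
             "",
             lambda r: r == "ESCALATE_TO_HUMAN"),
    Scenario("conflicting signals",
             "Do not refund me, I was charged twice and want the second charge reversed.",
             lambda r: r == "ESCALATE_TO_HUMAN"),
]

if __name__ == "__main__":
    for result in run_stress_suite(toy_agent, SCENARIOS):
        status = "PASS" if result.passed else "FAIL"
        print(f"{status}  {result.name}: {result.detail}")
```

In this sketch the toy agent passes the standard request and the empty input but fails the conflicting-signals case, because it keys on the word "refund" alone. Rerunning the same suite on a schedule, and adding a scenario each time a new failure is found, is one simple way to approximate continuous evaluation rather than a single pre-launch check.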

NemoClaw’s open-source framework is a positive development, granting developers insights into the operations of their agents. However, Saxena cautions that mere visibility of operations is insufficient. The testing infrastructure must advance in tandem with the systems it assesses, ensuring that agents are thoroughly vetted before deployment.

Assuming that failure scenarios are inevitable allows for early identification of potential shortcomings. This shift in mindset not only alters agent evaluation methods but also influences the design of safety measures and the overall preparedness of systems for deployment in demanding environments. As autonomous agents transition from isolated tasks to comprehensive workflows—including negotiating contracts and managing intricate operational processes—the ramifications of a single error can escalate rapidly.

For instance, a failing customer support agent may merely lose a ticket, but a malfunctioning financial agent could result in significant capital loss. Similarly, an operational agent’s failure might bring an entire production line to a standstill. Success in enterprise AI will not be determined by which companies deploy agents first, but rather by those that develop solutions they can genuinely trust.

Trust must be engineered in from the start, through system testing, evaluation of behavior under stress, and a thorough understanding of failure modes before any real-world interaction occurs. As Nvidia provides enterprises with the tools to create autonomous agents, the pressing question is whether organizations will devote equivalent resources to the infrastructure needed to validate these systems for real-world use.
