More than a quarter of IT leaders in Ireland and the UK are concerned about the challenges posed by deepfake technology, with 27% expressing fears over their ability to detect such attacks in the coming year. This statistic emerges from a survey conducted by Storm Technology, now part of Littlefish, which involved 200 IT decision-makers and underscores the growing apprehensions surrounding the security implications of rapid AI adoption.

The survey results indicate that the anxiety surrounding deepfake detection is particularly heightened among larger enterprises, where 33% of respondents reported concerns, compared to 23% in smaller businesses. Data breaches were identified as the most pressing issue, cited by 34% of IT leaders, followed closely by concerns over data protection (33%) and the risks associated with adversarial cyber-attacks (31%).

In addition to deepfake threats, shadow AI, defined as the use of unsanctioned or unapproved AI tools, has emerged as a significant concern among IT leaders. One in four respondents listed it as a top worry, while half acknowledged that employees within their organizations are using such tools. Notably, 55% of respondents admitted to using unsanctioned AI platforms themselves, and 42% expressed doubts about the safety of company data entered into these applications. Only 60% of organizations have established clear guidelines on which AI tools are permitted.

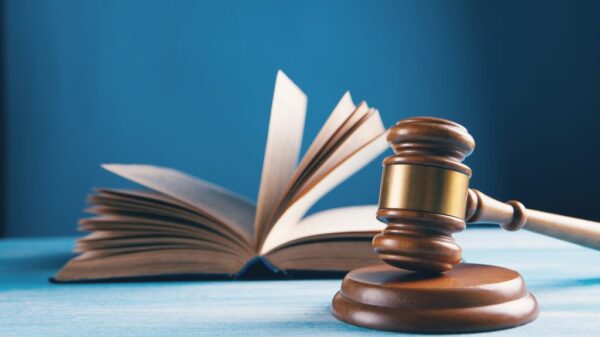

The survey also highlighted substantial governance gaps. Almost one-third of companies lack a strategy to manage risks associated with AI, and 21% of IT leaders do not have a high degree of trust in AI tools. Among Irish respondents, the concern is even more pronounced, with 35% believing their governance measures are inadequate, compared to 28% overall. Approximately four in five participants agreed that their organizations need to enhance regulations governing AI tools.

Data readiness poses an additional challenge, with a quarter of IT leaders indicating that their business data is not adequately prepared for AI applications. Furthermore, 23% reported that their data governance policies are insufficient to support secure AI adoption. As a result, 78% believe that a dedicated project focused on data readiness is essential.

Sean Tickle, Cyber Services Director at Littlefish, emphasized the urgency of addressing these issues, stating, “AI is rapidly reshaping the enterprise landscape, but the speed of adoption is outpacing the maturity of governance. When nearly a third of organizations lack a strategy to manage AI risk, and over half of IT leaders admit to using unsanctioned tools, it’s clear that shadow AI isn’t just a user issue – it’s a leadership one.”

Tickle further noted, “Deepfake threats, data governance gaps, and a lack of trust in AI platforms are converging into a perfect storm. To stay secure and competitive, businesses must invest in visibility, policy clarity, and data readiness – because without those, AI becomes a liability, not a differentiator.”

The survey's findings reflect a broader trend: AI adoption is moving faster than the governance and security measures needed to support it. For organizations seeking to harness the benefits of artificial intelligence, closing those gaps will be crucial to mitigating risk.

See also Agentic AI Expands Cyber Threat Landscape, Challenging Enterprise Security Strategies

Agentic AI Expands Cyber Threat Landscape, Challenging Enterprise Security Strategies Trump Administration Announces Cybersecurity Reset, Cuts CISA Budget by 17% and Focuses on AI Threats

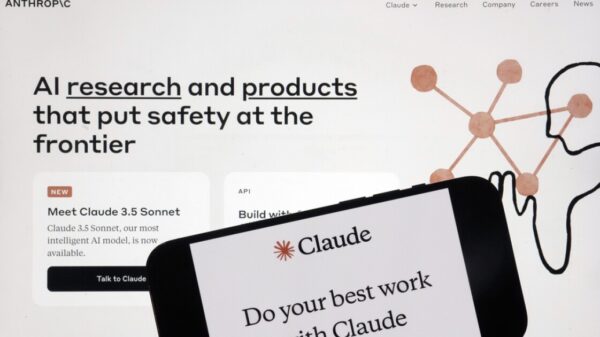

Trump Administration Announces Cybersecurity Reset, Cuts CISA Budget by 17% and Focuses on AI Threats Chinese Hackers Use Anthropic’s Claude AI for 90% of Major Cyberespionage Campaign

Chinese Hackers Use Anthropic’s Claude AI for 90% of Major Cyberespionage Campaign Microsoft’s Digital Crimes Unit Targets AI-Driven Cyber Threats with $20B Strategy

Microsoft’s Digital Crimes Unit Targets AI-Driven Cyber Threats with $20B Strategy First Trust NASDAQ CEA Cybersecurity ETF Surpasses $11B in Assets by 2025

First Trust NASDAQ CEA Cybersecurity ETF Surpasses $11B in Assets by 2025