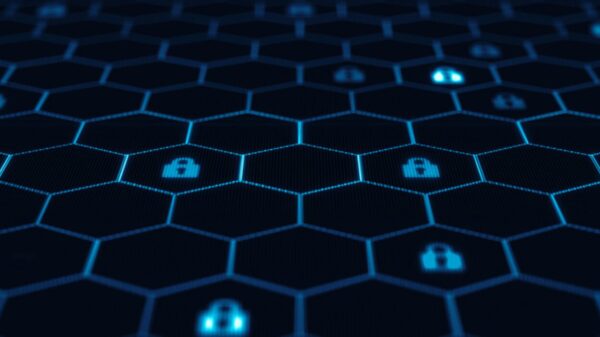

As generative AI (GenAI) and agentic AI gain traction in enterprise environments, their use has shifted from experimentation to critical deployment. These systems now autonomously handle sensitive data, call APIs, initiate workflows, and collaborate with other agents across the organization. That shift calls for a robust observability framework: visibility into what these AI tools actually do, so that teams can manage risk, validate policy adherence, and maintain operational control.

Observability is increasingly recognized as a foundational requirement for the security and governance of AI systems in production. However, many organizations still grapple with understanding its significance and effective implementation. This disconnect creates potential vulnerabilities at a time when thorough visibility is paramount. In February, Yonatan Zunger, Microsoft’s Corporate Vice President and Deputy Chief Information Security Officer, discussed the expansion of Microsoft’s Secure Development Lifecycle (SDL) specifically addressing AI-related security challenges, emphasizing the importance of observability in this context.

The nature of AI systems strains traditional observability models. Conventional software executes predictable logic, whereas GenAI is probabilistic: its decisions depend on a variety of inputs, any of which can significantly change the outcome. Consider an email agent that requests information from a research agent, which inadvertently retrieves corrupted content. Traditional performance metrics trigger no alerts, yet a breach of trust has occurred. The example shows why standard observability measures fall short and why observability practices must be updated to capture AI system behavior more completely.

Effective AI observability hinges on the ability to monitor and interpret the actions AI systems take, from development through deployment. Traditional models focus on discrete inputs, while AI systems require a contextual understanding of inputs drawn from diverse sources, including user prompts, conversation histories, and external data. Capturing the full context of how these inputs influence system behavior is pivotal for governance and operational oversight. Traditional observability metrics often track isolated requests; they must evolve to follow an interaction across its full sequence of turns, because failures in multi-turn engagements may only become visible when those turns are viewed together.

In this evolving landscape, observability frameworks must adapt to incorporate AI-native signals, refining key components such as logs, metrics, and traces. Logs should detail the identity context, timestamps, prompts, and responses, while metrics need to account for both traditional performance measures and AI-specific metrics like token usage and retrieval frequency. Traces are essential for tracking the entire journey of requests, enabling teams to diagnose failures effectively.
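As a minimal sketch of what such an AI-native log record might look like, the Python below bundles identity context, a timestamp, the prompt and response, token counts, and a retrieval count into one structured entry. The schema, field names, and the `total_tokens` helper are illustrative assumptions, not a format defined by Microsoft's SDL or any specific telemetry library.

```python
import json
import uuid
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AIInteractionLog:
    """One log record for a single model or agent call (hypothetical schema)."""
    trace_id: str             # shared by every step of one end-to-end request
    agent_id: str             # which agent or application made the call
    user_id: str              # identity context of the caller
    prompt: str
    response: str
    prompt_tokens: int
    completion_tokens: int
    retrieval_count: int = 0  # number of external documents fetched
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record as one JSON line for a log pipeline."""
        return json.dumps(asdict(self), sort_keys=True)


def total_tokens(records):
    """Metric: total token usage across a set of log records."""
    return sum(r.prompt_tokens + r.completion_tokens for r in records)
```

Because every record carries the same `trace_id` for one request, the individual entries can be stitched back together into a trace of the whole journey, for example from an email agent through a research agent to the final response.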

Moreover, AI observability should integrate two additional core components: evaluation and governance. Evaluation focuses on the quality of AI outputs, ensuring they are grounded in reliable source material and that agents operate as intended. Governance assesses system behavior against predefined policies and standards, ensuring compliance and accountability. This integration facilitates a more proactive approach to risk management and incident response, allowing organizations to adapt quickly to the evolving AI landscape.
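The two components can be illustrated with a small Python sketch: one function checks an agent action against a policy (governance), and another checks whether the sources a response cites were actually retrieved (a crude stand-in for a grounding evaluation). The `AgentAction` type, the `ALLOWED_TOOLS` policy table, and the agent and tool names are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    tool: str
    cited_sources: tuple      # source IDs the response claims to rely on
    retrieved_sources: tuple  # source IDs actually retrieved for the request


# Hypothetical policy: which tools each agent is permitted to call.
ALLOWED_TOOLS = {
    "email-agent": {"send_email", "ask_research_agent"},
    "research-agent": {"web_search", "read_document"},
}


def governance_violations(action):
    """Return policy violations for one action (empty list means compliant)."""
    allowed = ALLOWED_TOOLS.get(action.agent_id, set())
    if action.tool not in allowed:
        return [f"{action.agent_id} is not permitted to call {action.tool}"]
    return []


def ungrounded_citations(action):
    """Evaluation check: cited sources that were never retrieved."""
    return sorted(set(action.cited_sources) - set(action.retrieved_sources))
```

A real evaluation would go well beyond matching source IDs, but even this simple shape shows how evaluation and governance results can be emitted alongside logs, metrics, and traces rather than checked after the fact.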

The SDL framework offers a structured approach for organizations to operationalize AI observability. Five strategic steps can enhance observability in AI development workflows: establishing clear observability standards, incorporating telemetry from the outset, capturing comprehensive contextual information, establishing behavioral baselines for monitoring deviations, and managing the landscape of enterprise AI agents effectively. These practices not only bolster security but also enhance the overall quality and reliability of AI systems in production.
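One of these steps, establishing behavioral baselines, can be sketched in a few lines of Python. The example assumes the baseline is a list of historical values for a single metric (such as tokens per request) and flags an observation that sits more than a chosen number of standard deviations from the mean. The function name and the default threshold of 3.0 are illustrative assumptions; production systems would likely use more robust statistics.

```python
from statistics import mean, stdev


def is_deviation(baseline, observed, threshold=3.0):
    """Flag `observed` if it lies more than `threshold` sample standard
    deviations from the mean of the baseline samples."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline samples")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold
```

For instance, with a baseline of `[100, 110, 90, 105, 95]` tokens per request, an observation of 104 falls within normal variation, while 400 would be flagged for investigation.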

As organizations continue to integrate AI technologies, enhancing observability can transform opaque model behavior into actionable security insights, significantly improving proactive risk detection and reactive incident investigation. When embedded within the SDL, observability becomes an engineering control, allowing teams to define requirements, implement them during design, and verify their effectiveness prior to deployment. By adapting traditional monitoring approaches, organizations can ensure their AI systems are not only functional but also secure and compliant, ready to meet the challenges of an increasingly complex operational environment.
