Anthropic has officially launched Computer Use through its Claude Cowork and Claude Code products, marking a significant evolution for the Claude model. This update, available to subscribers on the Claude Pro and Max plans, enables the AI to autonomously control Mac environments, execute terminal commands, manage files, and handle complex technical tasks remotely. As a result, Claude transitions from a primarily conversational assistant to an autonomous agent capable of navigating file systems and running applications.

The new Claude Dispatch feature lets users communicate with Claude agents through mobile or web chat interfaces and control them remotely, a capability similar to that of OpenAI’s OpenClaw. The Claude team has expanded its functionality rapidly, shipping 74 features in just 52 days. While each feature may seem incremental, together they position Claude Cowork as an AI digital worker capable of executing intricate, multi-step tasks across various software.

Meanwhile, Google has unveiled Gemini 3.1 Flash Live, a low-latency multimodal model optimized for real-time voice and video interactions. The model can process text, images, audio, and video, and returns immediate spoken responses. According to Google’s model card, Gemini 3.1 Flash Live scores 95.9% on the Big Bench Audio benchmark, outperforming both the Grok Voice Agent and earlier Gemini models. It is accessible via API, the Gemini app, and an expanded Search Live experience.

In another development, Mistral has released Voxtral TTS, an open-weight multilingual text-to-speech model that supports emotionally expressive speech in nine languages. Designed for scalability, Voxtral TTS can be downloaded from Hugging Face and run on local servers, which suits data-sensitive enterprise applications. The model is lightweight and competes with ElevenLabs Flash, particularly in human preference tests.

Unsloth Studio has also made strides, updating its platform to offer a 10x faster local interface along with desktop shortcuts and auto-parameter detection. This web UI allows users to train and run models locally across multiple operating systems, supporting a range of tasks from chat to training and multimodal file exports.

On the edge of AI technology, Reka AI has launched Reka Edge, a 7B multimodal vision-language model optimized for quick performance on edge devices. Capable of processing image or video inputs alongside text, Reka Edge is designed for image understanding, video analysis, and object detection. It is now available on both OpenRouter and Hugging Face.

Market Shifts and New Launches

Modular has announced that Mojo 26.2 can run FLUX.2 image generation in under a second and at significantly reduced costs. This high-performance, Python-like language is equipped to support GPU optimizations in AI stacks, promising a fundamentally different cost structure for image generation at scale.

In design technology, Figma has begun beta testing AI agents, which work through the use_figma tool on the Figma Model Context Protocol (MCP) server. These agents can design directly on live Figma canvases while understanding the user’s existing design system, helping teams automate UI component and layout tasks.

Luma Labs has released Uni-1, an AI image generation model that reasons while it generates images and supports iterative interaction. The model allows collaborative editing in a chat-style format and can generate infographics.

Meanwhile, Cohere has launched Transcribe, an open-source automatic speech recognition model that achieves a best-in-class 5.42% word error rate, supporting 14 languages for local and enterprise-friendly applications. This model is expected to have a wide range of use cases in speech recognition technologies.
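For readers unfamiliar with the metric, word error rate is conventionally the number of word-level substitutions, deletions, and insertions needed to turn the model's transcript into the reference transcript, divided by the number of words in the reference. The following is a minimal sketch of that calculation, not Cohere's evaluation code: the function name `word_error_rate` is hypothetical, and the normalization used here (lowercasing and splitting on whitespace) is an assumption that is simpler than what published benchmarks typically apply.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Return word-level edit distance divided by reference length.

    Assumption: text is normalized only by lowercasing and
    whitespace splitting; real benchmarks normalize more carefully.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words so far
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion (reference word missing)
                curr[j - 1] + 1,     # insertion (extra hypothesis word)
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    reference = "the quick brown fox jumps over the lazy dog"
    hypothesis = "the quick brown fox jumped over lazy dog"
    # One substitution and one deletion against nine reference words.
    print(f"{word_error_rate(reference, hypothesis):.4f}")
```

On this toy pair the result is 2/9, or about 22%. A 5.42% rate corresponds to roughly five or six word errors per hundred reference words, averaged over the benchmark's test sets.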

In the generative audio space, Google DeepMind has introduced Lyria 3, its most advanced music generation model yet, capable of composing full three-minute tracks with control over structural elements such as intros and choruses. It can also compose from images, and it embeds SynthID watermarking for traceability, a notable step forward for production-ready music workflows.

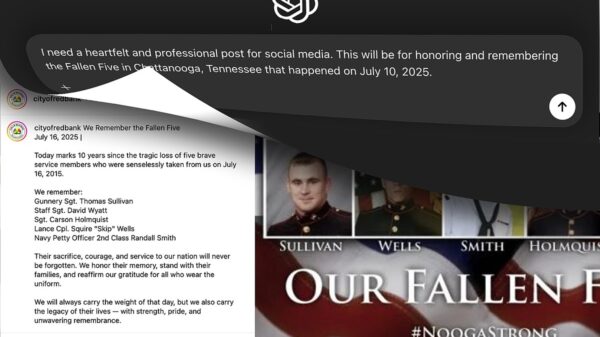

Amidst these advancements, OpenAI has announced a suite of new safety tools aimed at protecting younger users, including the open-source teen safety classifier called gpt-oss-safeguard-20b. This initiative coincides with an updated Model Spec that incorporates “Under-18 Principles” and introduces new parental controls for ChatGPT.

As these developments unfold, it is evident that the AI landscape is rapidly evolving. With advancements in generative models, autonomous agents, and safety measures, companies are shaping the future of AI integration across various sectors. The cumulative impact of these innovations will likely redefine user interaction and productivity in the coming years.
