As advances in artificial intelligence (AI) continue to blur the line between reality and fabrication, experts are sounding alarms about the technology’s implications. A study published last year in the *Communications of the Association for Computing Machinery* found that people can distinguish AI-generated images from real photos only 51% of the time, roughly equivalent to chance. This growing difficulty in discerning authentic content has fueled a rise in fraud, including fraudulent returns in online retail and deepfake-related financial losses that exceeded $200 million in just three months of 2025.

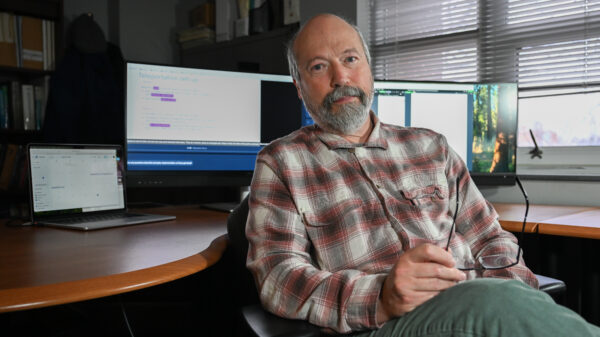

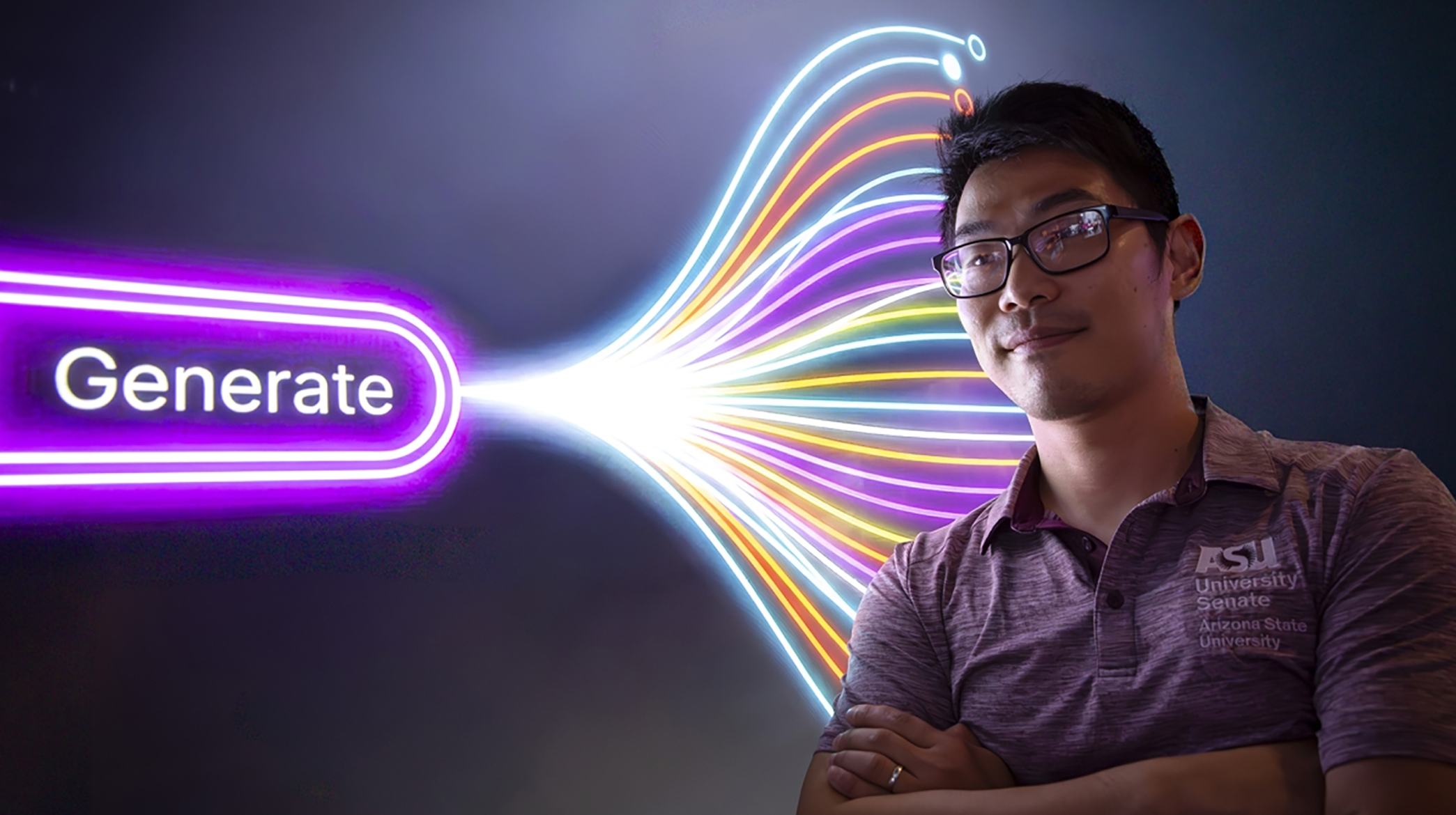

At the forefront of addressing these issues is Yezhou “YZ” Yang, a researcher at Arizona State University (ASU). Yang is leading initiatives to develop technical standards aimed at making AI-generated media identifiable, an essential step as the technology evolves. His work focuses on the concept of embedding detectable signals—akin to digital fingerprints—into the media created by generative AI systems. “It’s like a wireless protocol,” Yang explained. “If everyone agrees to the protocol, then every model generating images would embed something like a watermark that detectors can read later.”
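To make the "agreed protocol" idea concrete, here is a toy sketch of embedding and later reading a watermark. It is not Yang's method or any published standard: it assumes a grayscale image flattened into a list of 0-255 integers, a shared `key` that both the generator and the detector know, and a simple least-significant-bit scheme. The function names and the signature are hypothetical.

```python
import random


def _positions(n_pixels, key, n_bits):
    # Both sides derive the same pixel positions from the shared key.
    if n_bits > n_pixels:
        raise ValueError("signature is longer than the image")
    return random.Random(key).sample(range(n_pixels), n_bits)


def embed_watermark(pixels, key, signature):
    """Return a copy of pixels with the signature bits written into the
    least significant bit of key-selected positions (toy scheme)."""
    out = list(pixels)
    for pos, bit in zip(_positions(len(out), key, len(signature)), signature):
        out[pos] = (out[pos] & ~1) | bit  # clear the lowest bit, then set it
    return out


def detect_watermark(pixels, key, signature):
    """Return the fraction of signature bits found at the expected positions."""
    positions = _positions(len(pixels), key, len(signature))
    matches = sum((pixels[p] & 1) == bit for p, bit in zip(positions, signature))
    return matches / len(signature)


# Hypothetical usage: a random "image" and a 64-bit signature.
image = [random.Random(0).randrange(256) for _ in range(1024)]
signature = [1, 0, 1, 1, 0, 0, 1, 0] * 8
marked = embed_watermark(image, key="shared-protocol-key", signature=signature)
score_marked = detect_watermark(marked, "shared-protocol-key", signature)
score_plain = detect_watermark(image, "shared-protocol-key", signature)
```

A marked image scores 1.0, while an unmarked one matches only about half the bits by chance. Each pixel changes by at most one intensity level, so the mark is invisible to a viewer. A scheme this simple would not survive cropping or compression, which is part of why robust, widely adopted standards are hard to build.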

Yang’s research dates back to 2020 and is based on identifying subtle statistical patterns left behind by generative models—patterns that are not visible to humans but can be detected by machines. However, as AI models grow more sophisticated, these detectable traces are becoming increasingly elusive, prompting Yang to consider broader solutions beyond mere detection.

His latest research delves into a concept known as machine unlearning, which aims to teach AI systems to selectively forget problematic data or harmful concepts. Traditional retraining of AI models can take months and be financially burdensome, but machine unlearning offers a more efficient alternative by specifically targeting unwanted information. “Whatever data is learned—the good and the bad—it sticks. Unlearning gives us a way to go back and fix that,” Yang noted.
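The contrast between retraining and targeted unlearning can be illustrated with a deliberately tiny model. The sketch below is an assumption-laden toy, not the technique Yang's team uses: the "model" is just a running average, and the class and method names are hypothetical. It shows that when a model keeps the right bookkeeping, one unwanted example can be removed directly, with the same result as rebuilding from the remaining data.

```python
class RunningMeanModel:
    """Toy model whose only learned parameter is the mean of its training data."""

    def __init__(self):
        self.total = 0.0
        self.count = 0

    def learn(self, x):
        # Fold one training example into the model.
        self.total += x
        self.count += 1

    def unlearn(self, x):
        # Remove one previously learned example without touching the rest.
        if self.count == 0:
            raise ValueError("nothing to unlearn")
        self.total -= x
        self.count -= 1

    def mean(self):
        return self.total / self.count if self.count else 0.0


# Hypothetical usage: 100.0 stands in for a problematic training example.
model = RunningMeanModel()
for value in [1.0, 2.0, 3.0, 100.0]:
    model.learn(value)
model.unlearn(100.0)

# Retraining from scratch on the remaining data gives the same model.
retrained = RunningMeanModel()
for value in [1.0, 2.0, 3.0]:
    retrained.learn(value)
```

Real generative models have billions of entangled parameters, so no such clean subtraction exists. That gap is what approximate unlearning methods aim to close.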

Yang’s team has been among the early contributors applying unlearning techniques to text-to-image models, an area that has not received as much attention as large language models. One notable project, named Robust Adversarial Concept Erasure (RACE), focuses on removing sensitive concepts, such as explicit imagery, from generative models while making it difficult for users to recover them through adversarial prompts. This method strengthens previous attempts by anticipating and blocking potential recovery strategies.

In another initiative called EraseFlow, Yang’s team treats unlearning as an evolving process, reshaping how an AI model generates images over time. Instead of merely blocking certain outputs, this system redirects the model away from unwanted concepts while maintaining overall image quality. These innovative approaches suggest a future where AI systems are not only transparent but also adaptable post-deployment, a capability that holds significant implications for privacy and regulation.

Yang is also committed to ensuring that these technical advancements reach beyond academic circles. His team collaborates with organizations like the Coalition for Content Provenance and Authenticity and the World Privacy Forum. Their goal is to foster international discussions around AI transparency and data rights, aiming to establish common standards for the behavior of AI systems throughout their entire lifecycle.

“The technology starts with computer scientists. But the impact on society requires a much bigger conversation,” Yang remarked, underscoring the need for a comprehensive dialogue on these essential issues.

As AI-generated media becomes more realistic and widely disseminated, the challenge extends beyond identifying fakes. It’s crucial to maintain trust in an environment where content can be fabricated or altered. Yang’s vision includes developing systems that can not only identify synthetic media but also adapt and self-correct over time. “At some point, society will have to solve this,” he asserted. “We can’t have a world where anyone can generate convincing fake evidence.”

Ross Maciejewski, the director of ASU’s School of Computing and Augmented Intelligence, echoed Yang’s sentiments, emphasizing that addressing the risks associated with AI is not merely a technical challenge, but a societal one. “Our school is uniquely positioned to bring together the research, policy, and real-world partnerships needed to tackle these issues,” he stated, highlighting the importance of initiatives like Yang’s in steering critical discussions while developing scalable solutions.
