Since the public release of ChatGPT in November 2022, the rapid advancement of generative artificial intelligence (AI) has been transforming education. Tools that generate text, images, and other outputs are enabling students to outsource creative thought to machines, prompting educators to question the authenticity of student work and the cognitive skills necessary for future learning and problem-solving. Teachers have legitimate concerns about maintaining students’ capabilities as independent thinkers in this evolving landscape.

For decades, Bloom’s Taxonomy has served as a guide for educators to gauge cognitive demand in the classroom. Developed in 1956 and revised in 2001, this framework categorizes learning from lower-order skills like remembering and understanding to higher-order skills including applying, analyzing, evaluating, and creating. However, the emergence of generative AI raises critical questions: does this hierarchical model still reflect the skills educators should prioritize? Should Bloom’s framework be discarded altogether?

Traditionally, creation—synthesizing ideas from one’s own knowledge—was regarded as the peak of cognitive challenge. Today, a well-crafted prompt can enable a student to generate text, images, or data analysis almost instantaneously, shifting creation earlier in the educational process. This evolution has led to a more fluid interaction with the cognitive hierarchy, as students can now navigate between different levels of thinking based on their needs and the outputs of AI.

The most complex cognitive tasks in this new paradigm involve formulating effective questions, structuring prompts, and critically assessing AI-generated content. This process requires planning (designing clear prompts and anticipating potential errors), monitoring the AI’s outputs for accuracy and relevance, and evaluating the results through critique and revision. Effectively leveraging human-machine collaboration allows students to remain engaged decision-makers in their learning, balancing their reasoning with AI assistance.

The traditional model of moving from the lower-order thinking skills to the higher-order ones does not align with how today’s learners interact with generative AI.

This collaboration mirrors traditional revision and teamwork, where learners have always oscillated between creating, evaluating, and refining. However, the unique challenges posed by AI (its speed, scale, and opaque reasoning) complicate this process. Students must now manage a collaborator capable of producing polished work without transparency, making metacognitive oversight both more crucial and more challenging than it is with a human collaborator.

In this new context, the foundational skills of remembering and understanding become continuous prerequisites. Students must frequently reference factual and conceptual knowledge to verify and integrate information throughout the iterative cycles of creation and evaluation. A more accurate representation of cognitive skills in a generative AI environment might resemble a vertical helix, illustrating continuous cycles of judgment, revision, and synthesis as learners develop expertise in both content and collaboration with AI.

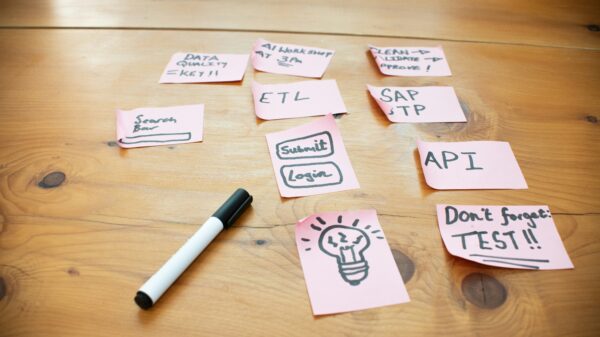

For practical illustration, consider a 7th-grade student tasked with writing an argument about why Harriet Tubman should be featured in a museum exhibit on the Underground Railroad. The student begins by reviewing notes and primary sources about Tubman’s life and impact (remember/understand) and drafts an initial outline. He then crafts a prompt for AI assistance: “Review this draft argument for why Harriet Tubman deserves to be featured in a museum exhibit about the Underground Railroad. The assignment requires three reasons supported by historical facts. Does my draft meet these requirements? What details could I add?”

The AI responds with feedback and suggestions (create), but upon analyzing the output (evaluate/analyze), the student identifies factual inaccuracies regarding Tubman’s achievements. He refines his prompt for more precise assistance, then applies the AI’s insights to enhance his draft with accurate details, integrating his arguments with the AI’s corrections and his own reflections.

As generative AI increasingly shapes students’ educational experiences, educators face the task of helping students develop strong foundational skills while preparing them to collaborate effectively with these tools. This necessitates intentional pedagogical strategies that promote initial competence without AI, progressing to strategic collaboration where students evaluate, question, and synthesize AI assistance with their reasoning.

While Bloom’s Taxonomy continues to provide a framework for assessing cognitive demand, it requires reimagining to better reflect learning dynamics in a generative AI context. The solution lies not in abandoning Bloom but in emphasizing iterative cycles of judgment, critique, and synthesis. Educators must create tasks that reveal students’ thinking by requiring them to assess outputs, identify biases, refine prompts, and integrate AI-generated content with their own insights. By equipping students with traditional competencies alongside AI literacy, educators prepare them to be adept navigators of this human-machine collaboration, a skill set crucial for their future.
