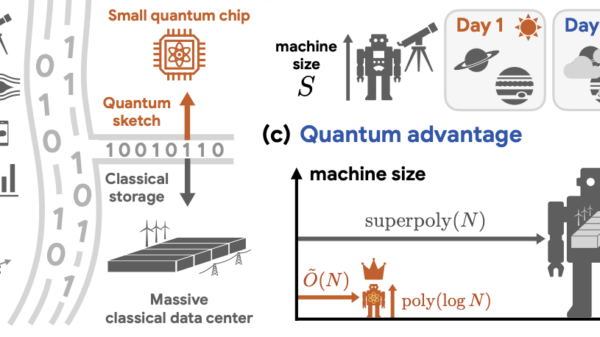

MegaTrain is an open-source runtime that allows large-scale models to be trained on a single workstation-class GPU. Findings shared in the Hugging Face research repository report a significant advance: the ability to train language models with over 100 billion parameters using just one GPU. This is achieved by offloading optimizer state to host RAM and streaming weights to the GPU as needed, which sidesteps the capacity limits of high-bandwidth memory (HBM).

This shift toward single-node training of 120-billion-parameter models comes as researchers face diminishing returns from large multi-node GPU clusters. As communication latency and networking overhead grow, local memory-first strategies like MegaTrain offer a more efficient and cost-effective alternative for teams focused on domain-specific model adaptation and long-context research.

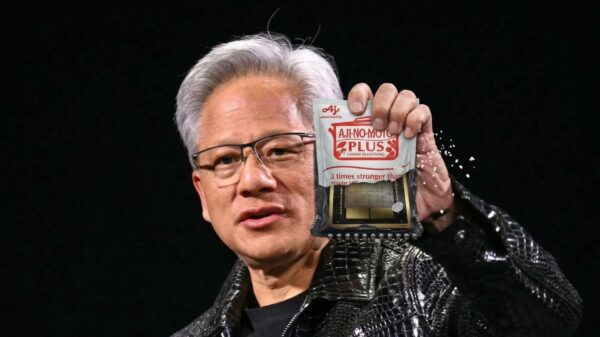

The MegaTrain preprint, released on April 6, 2026, outlines a memory-centric architecture that stores persistent model state in host RAM and uses the GPU for computation. The authors report key experiments, including training a 120-billion-parameter model on a single NVIDIA H200 GPU with 1.5 TB of host RAM, and a long-context run with a 7-billion-parameter model at a context length of 512k. This architecture lets smaller research teams, often constrained by limited access to cluster resources, take part in high-performance AI training.
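To see why host RAM rather than GPU memory is the limiting resource here, a rough footprint estimate helps. The sketch below is only back-of-the-envelope arithmetic: the 12 bytes per parameter (fp32 weights plus two fp32 Adam moments) and the 141 GB HBM figure for the H200 are assumptions for illustration, since the article does not state the precision or optimizer used, and gradients and activations are left out entirely.

```python
GB = 10**9  # decimal gigabyte

def state_bytes(num_params, bytes_per_param):
    """Bytes needed to hold persistent model state for num_params parameters."""
    return num_params * bytes_per_param

def fits(num_params, bytes_per_param, capacity_bytes):
    """True if the persistent state fits in the given memory capacity."""
    return state_bytes(num_params, bytes_per_param) <= capacity_bytes

PARAMS = 120 * 10**9     # 120-billion-parameter model from the reported experiment
HOST_RAM = 1500 * GB     # 1.5 TB of host RAM from the reported experiment
GPU_HBM = 141 * GB       # assumption: H200 on-package HBM capacity
BYTES_PER_PARAM = 12     # assumption: fp32 weights + two fp32 Adam moments

total = state_bytes(PARAMS, BYTES_PER_PARAM)
print(f"persistent state: {total // GB} GB")
print("fits in host RAM:", fits(PARAMS, BYTES_PER_PARAM, HOST_RAM))
print("fits in GPU HBM: ", fits(PARAMS, BYTES_PER_PARAM, GPU_HBM))
```

Under these assumptions the state comes to about 1.44 TB, which fits in 1.5 TB of host RAM but is roughly ten times what the GPU can hold. A heavier layout of 16 bytes per parameter would not fit, so the real budget depends on choices the article does not describe.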

MegaTrain reports several performance milestones that challenge the status quo of distributed training. Notably, the project achieved a 1.84x performance improvement over ZeRO-3 CPU offload configurations in tests with a 14-billion-parameter model. The architecture removes the dependence on GPU memory capacity by treating host RAM as the primary store for model state, while the GPU acts as a transient compute engine. This approach mitigates the memory bottlenecks traditionally seen in GPU-accelerated training.

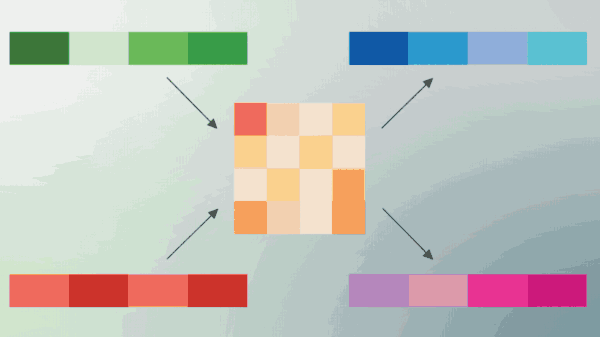

The system’s architecture prioritizes I/O throughput over VRAM capacity. By pipelining weight streaming, MegaTrain aims to keep the GPU busy throughout each training step. The pipeline uses double buffering: while the GPU computes on one buffer, the next set of weights is transferred into a second, so data transfer and computation overlap and idle time is minimized.
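The double-buffering idea can be sketched in a few lines of plain Python. This is a toy illustration, not MegaTrain's implementation: `stream_layers`, `load`, and `compute` are hypothetical names, a background thread stands in for the host-to-GPU copy, and ordinary Python objects stand in for weight buffers.

```python
from concurrent.futures import ThreadPoolExecutor

def stream_layers(layers, load, compute):
    """Apply compute(load(layer)) to each layer in order, prefetching the
    next layer's weights while the current layer is being computed.

    At most two buffers are live at once: the one in use by compute and
    the one being filled by load.
    """
    results = []
    if not layers:
        return results
    with ThreadPoolExecutor(max_workers=1) as copier:
        pending = copier.submit(load, layers[0])   # fill the first buffer
        for i in range(len(layers)):
            buf = pending.result()                 # wait until this buffer is ready
            if i + 1 < len(layers):
                # start filling the second buffer before computing on the first
                pending = copier.submit(load, layers[i + 1])
            results.append(compute(buf))
    return results

# Toy usage: "loading" doubles a value, "computing" adds one.
print(stream_layers([1, 2, 3], load=lambda x: x * 2, compute=lambda b: b + 1))
```

In a real runtime the copy and the compute would run on separate GPU streams with pinned host memory, but the control flow is the same: issue the next transfer, then compute on the buffer that is already resident.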

However, MegaTrain’s architecture is not without its challenges. Although it simplifies orchestration by reducing reliance on cross-node communication, well-optimized streaming remains crucial to keeping the GPU supplied with work. The design must balance the latency introduced by weight streaming against the benefit of reduced collective communication.
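One way to reason about this balance is a simple per-layer timing model. The sketch below is an idealized assumption rather than anything taken from the preprint: it treats a layer's cost as the larger of transfer time and compute time when the two overlap perfectly, and as their sum when they do not. The function name and the example numbers are hypothetical.

```python
def layer_time(weight_bytes, link_bytes_per_s, compute_s, overlapped=True):
    """Estimated time for one layer, in seconds.

    weight_bytes      size of the weights streamed for this layer
    link_bytes_per_s  host-to-GPU bandwidth
    compute_s         time the GPU spends computing on the layer
    overlapped        True if transfer and compute run concurrently
    """
    transfer_s = weight_bytes / link_bytes_per_s
    if overlapped:
        return max(transfer_s, compute_s)   # the slower side sets the pace
    return transfer_s + compute_s           # the GPU waits for every transfer

# Hypothetical numbers: 2 GB of weights over a 50 GB/s link.
compute_bound = layer_time(2e9, 50e9, compute_s=0.10)
stream_bound = layer_time(2e9, 50e9, compute_s=0.01)
print(compute_bound, stream_bound)
```

When compute takes longer than the transfer, streaming is effectively free. When it takes less, the link becomes the bottleneck and the GPU idles, which is why workloads with more compute per byte of weights tend to suit this design better.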

Market Context

As large-scale GPU clusters increasingly struggle with synchronization issues that degrade throughput, MegaTrain’s approach is timely. Researchers have documented a trend in which throughput suffers as the number of nodes increases, a problem compounded by the challenges of managing physical infrastructure such as networking bandwidth, power delivery, and thermal management. These practical constraints often overshadow the theoretical capabilities of the hardware.

The comparative advantages of MegaTrain’s architecture become clearer when considering the hardware it uses. While NVIDIA H200 and GH200-class systems offer significant performance benefits through their high-speed interconnects, the project emphasizes that achieving such results requires substantial investment in server-class memory and advanced interconnect technologies. As demand for high-performance AI training grows, MegaTrain serves as a reminder that effective solutions must account for constraints beyond raw computational power.

Looking ahead, the implications of MegaTrain’s capabilities extend beyond academic research. The ability to train large models on a single node could redefine procurement strategies for AI labs, where memory capacity may emerge as a more critical consideration than the number of GPUs. Moreover, teams may increasingly favor local setups for sensitive data experiments, promoting a shift towards privacy-focused AI practices.

In summary, MegaTrain’s approach challenges existing paradigms of AI training and signals a potential shift in how researchers and organizations deal with resource limitations. By prioritizing host memory and optimizing GPU utilization, MegaTrain invites the AI community to reconsider the economics of AI infrastructure and opens opportunities for smaller teams to contribute to large-scale model training.
