In February 2026, four theoretical physicists and co-author Kevin Weil, a product manager at OpenAI, posted a paper to arXiv, a repository for preprints that have not been peer reviewed. The paper is notable because it is billed as the first scientific work co-authored with a generative artificial intelligence (AI) tool. The AI cannot formally be listed as an author, however: arXiv and peer-reviewed journals stipulate that a computer program cannot take responsibility for the contents of a paper.

The physicists say the key intellectual breakthrough in their work was made possible by ChatGPT 5.2, a paid generative AI tool they used extensively during their research. They posed questions to it and discussed their research topic with it: a particle physics problem involving gluons, a type of subatomic particle. The underlying mathematics is not easily accessible to anyone outside the field. Even so, the scientists expressed surprise and admiration for the AI’s solution, which they spent a week verifying and found to be correct.

OpenAI consists of both a not-for-profit foundation and a private company, the latter currently the most highly valued private company in the world. Weil has been actively promoting the tool for scientific use, highlighting its potential for major breakthroughs. He worked directly with the physicists to produce the first AI-co-authored paper, emphasizing the tool’s role in the research.

For those unfamiliar with ChatGPT, interacting with it can feel uncanny: it responds to prompts with what appear to be genuinely novel ideas. Given how thoroughly generative AI now permeates our digital lives, its capacity to generate original mathematical concepts does not seem far-fetched. The technology has evolved rapidly since ChatGPT’s initial release in 2022, and its output can closely mimic human writing.

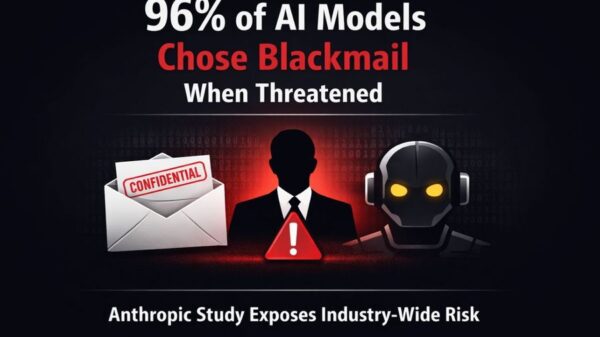

Recent studies across scientific disciplines indicate that reviewers frequently struggle to distinguish AI-generated abstracts from human-written ones. Given how formulaic and tightly constrained scientific abstracts are, this is not entirely surprising. In a moment so saturated with AI-generated content, it becomes harder to say where ideas originate and how much of the creative process AI is responsible for.

The ubiquity of generative AI tools calls for a serious conversation about ownership and about the implications of letting megacorporations control these technologies. Ideally, AI ownership would reflect a more equitable distribution of resources. Still, AI is fundamentally a tool, albeit one that can lead to what experts describe as “unintentional cognitive offloading,” much as we rely on GPS to navigate or on digital devices for basic arithmetic.

Deciding how far to rely on AI remains a critical challenge. Generative AI can be used at every stage of the creative process, from generating initial ideas to drafting and refining final outputs, and each of these uses has significant implications for scientific writing. The physicists went beyond using AI as a writing assistant, employing it to survey mathematical techniques from the historical literature that might address their problem.

If AI-aided exploration contributes meaningfully to the creative process, its capacity to improve efficiency and spur innovation is worth celebrating. The technology could democratize creativity, allowing more people to contribute to culture. Nevertheless, communication involves more than writing coherent sentences.

Scientific research is itself a communicative act, linking theoretical inquiry with practical experimentation. One distinction remains clear: a computer program is not a person and therefore cannot be held accountable for the intent and understanding that scientific exploration requires. This is why cognitive offloading to AI calls for caution, as we walk the thin line between efficiency and the erosion of meaningful engagement with our work.

As generative AI evolves, so must our understanding of its implications, including the environmental costs of developing and deploying it. Because this rapid change is often driven by morally ambiguous corporations, each of us needs to think carefully about how to integrate these tools into our intellectual work. Perhaps the role of AI in scientific research will come to reflect each researcher’s personal style and intent, enabling a resurgence of fundamental scientific exploration while safeguarding critical thinking and human purpose.

This piece, consistent with all Science & Society columns as of February 24, 2026, was written without the aid of generative AI tools, with the exception of standard search functionalities.
