The proliferation of synthetic media poses a significant threat to journalism’s core mission: providing accurate and reliable information to the public. As generative AI evolves, its ability to produce long-form news content, opinion pieces, and investigative narratives blurs the line between human reporting and machine-generated material. For contemporary newsrooms, maintaining credibility in a “post-truth” era requires more than traditional fact-checking; it demands a technological review of every submission. Integrating high-precision AI detectors into editorial workflows has shifted from a luxury to a necessity for digital publishers aiming to uphold the integrity of the fourth estate.

Trust remains the most fragile asset in journalism; once eroded, it is difficult to rebuild. Several major media outlets have faced backlash after publishing AI-generated articles without proper disclosure, leading to public distrust. Such pieces frequently exhibit factual inaccuracies, biased viewpoints, and a lack of the nuanced context that only human journalists can provide. When readers realize they have been consuming machine-generated content presented as human-authored, the psychological impact is profound: it suggests that the publication prioritizes clicks and volume over authenticity. This disconnect contributes to declining subscriber loyalty and to “news fatigue,” in which audiences disengage from digital information altogether.

The challenge for editors is twofold: they must harness technology for efficiency while ensuring the essence of their publication remains rooted in human effort. AI models do not operate as objective analysts; they reflect the biases inherent in their training data, often distorting narratives in subtle yet perilous ways. Moreover, AI’s propensity to confidently assert false information—referred to as “hallucination”—poses a direct threat to journalistic integrity. Traditional fact-checking efforts may catch minor errors, but they often fail to identify the systemic absence of “original reporting” in AI-generated drafts. Advanced verification tools can analyze the text’s structure, identifying “linguistic fingerprints” characteristic of specific AI models. By flagging these sections, editors can require original sources or direct quotes, ensuring that real human involvement is evident in the reporting process.

“Deepfake text” represents a new frontier in misinformation. While deepfake videos have garnered more attention, deepfake text is arguably more dangerous because it is easier to produce and to distribute widely. Malicious actors can use large language models (LLMs) to create thousands of fake “local news” sites that promote particular political or corporate agendas while presenting themselves as legitimate news sources. To differentiate themselves from this “gray media,” established news organizations must adopt policies of radical transparency. By employing detection technology to authenticate their content as human-generated, they can build a “verified” brand. This could lead to a journalistic equivalent of “Fair Trade” or “Organic” labels: a “Human-Verified” badge that assures readers they are receiving insights from real, accountable professionals.

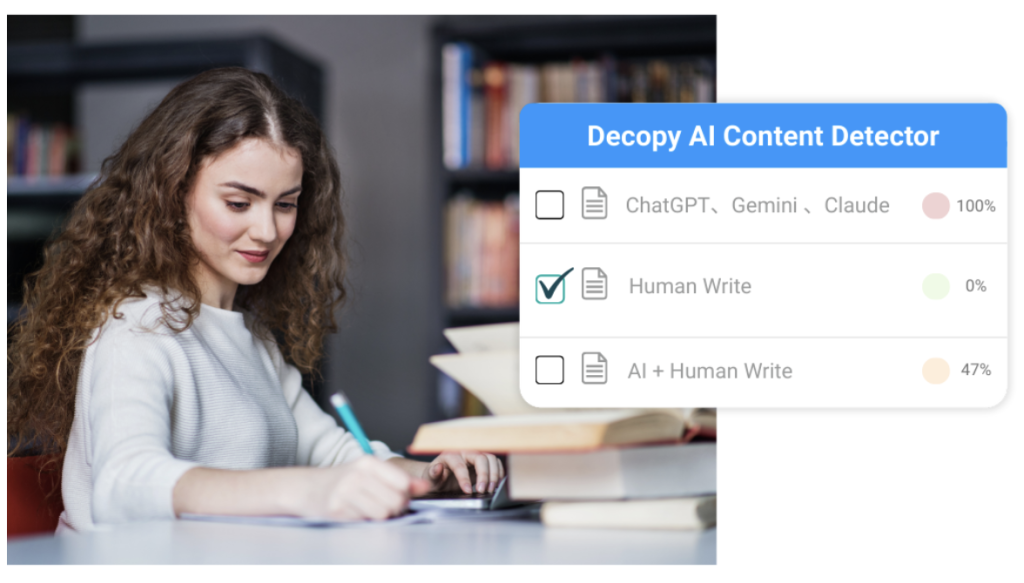

The goal of newsroom AI policy should be not to eradicate AI but to redefine its role. AI is useful for tasks such as transcribing interviews, summarizing lengthy reports, and analyzing large datasets. However, it is essential to draw a clear boundary between data processing and narrative creation. A modern editorial workflow should include several stages: data gathering through AI analysis of public records; human synthesis through journalist interviews; drafting by the journalist, with optional AI assistance for structure; a verification audit to confirm that the human voice remains dominant; and transparent disclosure of AI’s role in creating the content.
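For newsrooms that track stories in software, the five stages above could be enforced as an ordered checklist. The following is a minimal sketch, not a description of any existing editorial system: the names `Stage`, `Story`, `complete`, and `ready_to_publish` are hypothetical, and the only rule assumed is that stages must be completed in the order the paragraph lists them.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    # Hypothetical labels; the values paraphrase the stages described above.
    DATA_GATHERING = "AI analysis of public records"
    HUMAN_SYNTHESIS = "journalist interviews"
    DRAFTING = "journalist drafting, optional AI help with structure"
    VERIFICATION_AUDIT = "confirm the human voice remains dominant"
    DISCLOSURE = "transparent note on AI's role"


# Enum members iterate in definition order, which is the workflow order.
WORKFLOW = list(Stage)


@dataclass
class Story:
    slug: str
    completed: list = field(default_factory=list)

    def complete(self, stage: Stage) -> None:
        """Mark a stage as done, refusing to skip or repeat stages."""
        done = len(self.completed)
        expected = WORKFLOW[done] if done < len(WORKFLOW) else None
        if stage is not expected:
            raise ValueError(f"expected {expected}, got {stage}")
        self.completed.append(stage)

    def ready_to_publish(self) -> bool:
        """A story is publishable only after every stage, in order."""
        return self.completed == WORKFLOW
```

In this sketch a draft that tries to jump straight to `Stage.DRAFTING` raises a `ValueError`, so the verification audit and the disclosure step cannot be silently skipped before publication.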

As major global elections approach and complex geopolitical issues unfold, the demand for verified information is poised to increase. Social media platforms, search engines, and news aggregators face mounting pressure to filter out low-quality, synthetic content. Publications that proactively verify their content are likely to gain favor with algorithms and audiences alike. Investing in a robust AI content detector signals a commitment to the audience and a belief in the enduring importance of human observation and accountability. Journalism has historically held power accountable through the eyes of a witness. In an age of endless digital echoes, the most powerful stance a newsroom can adopt is to reaffirm its identity as a witness, not merely an algorithm.
