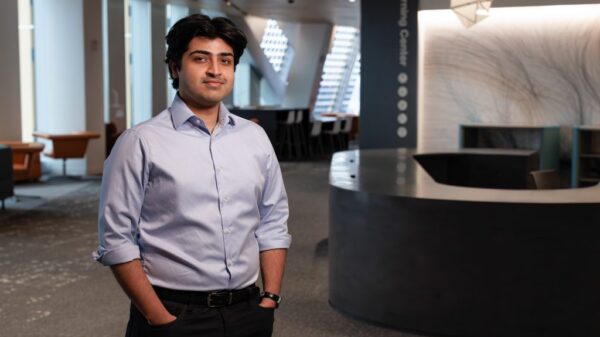

Prof. Iro Armeni of Stanford University presented a talk on generative vision models for 3D reconstruction and synthesis, covering techniques aimed at robotic assembly and architectural design. The presentation took place recently at a technology symposium, where Armeni detailed three distinct paradigms for advancing machine perception in the built environment.

At the heart of her talk was a novel 3D rectified flow matching model, which has been trained from scratch specifically for robotic assembly applications. This model emphasizes the optimization of flow-based trajectories, enabling precise geometric reasoning critical for robotics in construction and manufacturing sectors. By refining the way robots perceive and interact with their environments, Armeni’s work aims to improve efficiency and accuracy in automated assembly lines.
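The talk summary gives no implementation details, so the following is only a generic sketch of how a rectified flow matching objective is commonly formulated, not Armeni's model. It assumes straight-line interpolation between a noise sample and a data sample, with the network trained to predict the constant velocity along that line. All function names and the toy 3D points are hypothetical, chosen for illustration.

```python
import numpy as np

def rectified_flow_pair(x0, x1, t):
    """Interpolate on the straight line from x0 (noise) to x1 (data).

    Returns the point x_t at time t and the regression target, which for
    rectified flow is the constant velocity x1 - x0.
    """
    t = np.asarray(t, dtype=float).reshape(-1, 1)  # one time value per sample
    x_t = (1.0 - t) * x0 + t * x1
    v_target = x1 - x0
    return x_t, v_target

def flow_matching_loss(v_pred, v_target):
    """Mean squared error between predicted and target velocities."""
    return float(np.mean(np.sum((v_pred - v_target) ** 2, axis=-1)))

def euler_sample(velocity_fn, x0, steps=10):
    """Integrate dx/dt = velocity_fn(x, t) from t=0 to t=1 with Euler steps."""
    x = np.array(x0, dtype=float)
    dt = 1.0 / steps
    for i in range(steps):
        x = x + dt * velocity_fn(x, i * dt)
    return x

# Toy data: four hypothetical 3D points (assumption, not from the talk).
rng = np.random.default_rng(0)
x0 = rng.normal(size=(4, 3))         # noise samples
x1 = rng.normal(size=(4, 3)) + 5.0   # stand-in for data samples
t = rng.uniform(size=4)

x_t, v_target = rectified_flow_pair(x0, x1, t)

# A perfect velocity predictor would incur zero loss and transport x0 to x1.
perfect_loss = flow_matching_loss(v_target, v_target)
x_end = euler_sample(lambda x, t: x1 - x0, x0, steps=10)
```

In a real system the lambda above would be replaced by a trained network that takes the current state and time as input. The straight-line target is what lets such models sample in few integration steps.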

Armeni also described an architectural adaptation of video diffusion models for 3D Gaussian Splatting (3DGS). By integrating specialized encoding modules into a foundation model, her approach bridges the gap between the temporal consistency of 2D video and the spatial consistency required in 3D. This allows for more realistic rendering of and interaction with digital environments, which matters for applications such as virtual reality and architectural visualization.

Finally, Armeni introduced a test-time optimization technique for 3D style transfer that uses pretrained large 3D generative models to align disparate geometries. The technique allows visual styles to be transferred across different 3D structures, widening the creative options for architects and designers. Building on these pretrained models, her research group aims to automate and streamline the lifecycle of sustainable, data-driven environments.

Armeni, who leads the Gradient Spaces group at Stanford, has a rich academic and professional background that informs her research. With a PhD in Civil & Environmental Engineering and a minor in Computer Science from Stanford, she previously served as a Postdoctoral Fellow at ETH Zurich. Her multidisciplinary foundation also includes an MSc in Computer Science and an MEng in Architecture and Digital Design. This diverse expertise enables her to effectively bridge the gap between generative AI and architectural engineering.

Beyond academia, Armeni’s experience as an architect and consultant in both private and public sectors enriches her approach to machine perception and generative design. Her contributions to the field have been recognized through various accolades, including the Google Research Scholar Program and the ETH Zurich Postdoctoral Fellowship. These prestigious honors reflect her commitment to advancing technology in ways that promote sustainable and adaptable living spaces.

As Armeni’s research continues to evolve, the implications for industries spanning robotics, architecture, and urban planning are profound. The integration of generative vision models not only promises to enhance the efficiency of building processes but also aims to reshape the way we envision and interact with both physical and digital spaces. With the increasing importance of sustainable practices in construction and design, her work stands at the forefront of a new era in which data-driven solutions become indispensable in the development of future environments.
