This story was originally published by CalMatters. Sign up for their newsletters.

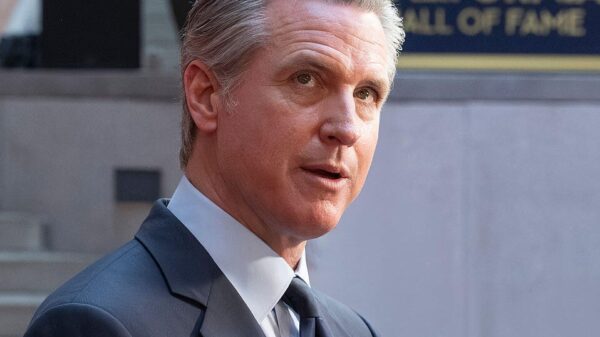

California Governor Gavin Newsom signed an executive order on Monday aimed at establishing a framework for the use of artificial intelligence (AI) by state agencies, particularly in light of the recent designation of San Francisco-based AI company Anthropic as a “supply-chain risk” by the U.S. Department of Defense. This designation, which followed a dispute over contract clauses that restricted the military’s use of Anthropic systems for domestic mass surveillance and fully autonomous weaponry, effectively limits the company’s ability to compete for certain federal contracts. A recent court ruling has temporarily blocked the designation.

Newsom’s new executive order reflects growing concern about the implications of AI technology: it seeks to guide state employees in the ethical deployment of AI tools while also promoting their accelerated use. California, home to many leading AI firms, is already a frontrunner in AI regulation.

The order directs state agencies to develop standards related to AI’s potential to generate child sexual abuse material, infringe upon civil liberties, and violate discrimination laws. It aims to ensure that state employees have access to “vetted GenAI tools” and requires an update to the State Digital Strategy to explore ways generative AI can enhance government transparency and accessibility of services for Californians. Additionally, the order mandates guidance on watermarking AI-generated imagery and videos.

This initiative comes as more than 20 state departments are working on Poppy, a generative AI assistant for state employees, while half a dozen other agencies are experimenting with AI to support various social initiatives, including homelessness assistance and business support. Newsom’s office has indicated that the Trump administration has rolled back protective measures related to AI, prompting California to take a more proactive stance.

“Unlike the Trump administration, California remains committed to ensuring that AI solutions adopted and deployed by the state cannot be misused by bad actors,” the governor’s office stated in a press release detailing the executive order.

At the federal level, the Trump administration has issued executive orders that discourage state-level AI regulation and encourage federal agencies to use AI to streamline processes and reduce regulatory burdens. The White House recently introduced an AI policy framework for Congress that advocates a relaxed regulatory approach and does not address critical issues such as bias and discrimination.

This is not Newsom’s first foray into AI governance. In 2023, he signed an executive order focused specifically on generative AI, the technology that powers applications like ChatGPT and Midjourney. That order similarly called for increased AI use by state agencies alongside appropriate safeguards.

The governor’s approach to AI regulation is closely monitored by both union leaders and technology donors, especially as he faces increasing pressure for worker protection measures related to AI technology ahead of the upcoming midterm elections. Union representatives have stated that they will not support Newsom’s potential presidential run without strong commitments to worker rights in the face of advancing technology.

As California continues to shape its AI regulatory landscape, the implications of such measures could reverberate beyond state lines, influencing national discussions on AI governance and ethical practices within the rapidly evolving tech sector.
