AI governance has become a key operational concern for organizations worldwide, pushing frameworks like AI TRiSM and guidelines such as the OWASP LLM Top 10 to the forefront of enterprise risk discussions. The shift reflects a substantial change in how AI is used: models are no longer confined to lab environments or limited proof-of-concept projects but are now built into critical products, workflows, and decision-making processes.

Despite this progress, many AI governance initiatives struggle. The problem is not that the frameworks are inherently flawed; it lies in the assumptions that underpin them, which often do not hold in real-world enterprise contexts. Both AI TRiSM and OWASP assume that organizations know where AI is deployed and have enough control over those systems to apply governance consistently. In practice, that is rarely the case.

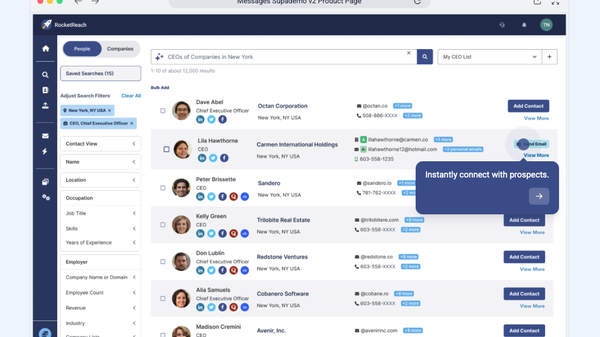

AI adoption tends to be fragmented and rapid. Capabilities surface inside SaaS platforms without any explicit deployment decision. Developers introduce models through APIs, open-source libraries, and embedded services, while business users adopt AI-driven workflows to solve immediate problems, often bypassing formal approval processes. This behavior is not malicious, but it creates a growing layer of shadow AI: AI usage that lacks centralized visibility, ownership, and governance. As a result, governance frameworks often fall short when applied, covering only the visible portion of AI while a significant share of the exposure goes unmanaged.

AI TRiSM offers a comprehensive approach to evaluating trust, risk, and security, but it depends on foundational conditions that many organizations have yet to establish. Teams implementing AI TRiSM often find themselves limited to producing risk assessments, maturity scores, and policy recommendations. This is not a lack of depth in the framework; it reflects missing visibility and ownership structures that the framework needs in order to operate fully. Without that foundation, AI TRiSM assessments become episodic and incomplete, covering only formally reviewed systems while unofficial or unknown AI usage continues to grow in parallel.

Discussions of AI risk frequently center on the model itself: how it is trained, how it behaves, and where it is vulnerable. That focus is understandable, but in enterprise environments much of the risk comes less from the models than from how AI is integrated, connected, and operated across systems and teams. AI embedded in a third-party SaaS platform, for example, carries different risks than a model running in a controlled internal environment. Agentic AI, where systems autonomously take actions toward specific goals, adds further complexity: without a clear picture of where these agents operate and who is responsible for them, oversight becomes difficult.

This is where AI Exposure Management plays a critical role. It addresses the foundational conditions that governance frameworks need in order to function at scale. AI Exposure Management begins by establishing a continuously updated view of where AI exists across the enterprise, including SaaS platforms, development pipelines, APIs, agents, and employee workflows. This visibility is not a one-off inventory exercise; it must keep up as tools change and teams experiment.
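One way to picture the "continuously updated view" is as a reconciliation step: compare the AI assets actually observed across the environment with the assets in the governed inventory, and treat anything observed but unregistered as candidate shadow AI. The Python sketch below illustrates that idea only; the `DiscoveredAsset` type, the asset identifiers, and the source labels are hypothetical, and how assets are discovered in the first place (SaaS audits, API gateway logs, code scanning) is assumed to happen elsewhere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiscoveredAsset:
    # Hypothetical identifier, e.g. "saas:crm-assistant".
    asset_id: str
    # Where the asset was observed: "saas", "pipeline", "api", "agent", "workflow".
    source: str


def find_shadow_ai(discovered, registered_ids):
    """Return observed assets missing from the governed inventory, one per asset_id."""
    unregistered = {}
    for asset in discovered:
        if asset.asset_id not in registered_ids:
            # Keep the first observation of each unregistered asset.
            unregistered.setdefault(asset.asset_id, asset)
    return sorted(unregistered.values(), key=lambda a: a.asset_id)


if __name__ == "__main__":
    discovered = [
        DiscoveredAsset("saas:crm-assistant", "saas"),
        DiscoveredAsset("api:internal-summarizer", "api"),
        DiscoveredAsset("agent:ticket-triage", "agent"),
        DiscoveredAsset("saas:crm-assistant", "workflow"),  # same asset seen twice
    ]
    registered = {"api:internal-summarizer"}
    for asset in find_shadow_ai(discovered, registered):
        print(asset.asset_id, asset.source)
```

Because discovery results change as teams adopt new tools, a check like this would be re-run on a schedule rather than once; the point is that visibility is a recurring comparison, not a static list.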

Moreover, defining ownership and accountability is crucial. Governance falters when no one is responsible for the outcomes associated with AI assets. AI Exposure Management ensures that each asset has clearly defined business, technical, and risk ownership, serving as the foundation for accountability, decision-making, and remediation. When risks are identified, a designated owner is responsible for addressing them.

Finally, AI Exposure Management turns risk insights into action. It provides the operational layer that converts assessments into real-world results. It does not replace AI TRiSM but complements it, ensuring that when risks are identified or OWASP-related issues arise, there is a clear owner, a defined workflow, and a path to resolution.
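To make the owner/workflow/resolution chain concrete, here is a minimal Python sketch of an inventory record carrying business, technical, and risk owners, plus a routine that routes a finding (for example, a TRiSM assessment result or an OWASP LLM Top 10 issue) to an accountable person. All names and status values are hypothetical, and assigning findings to the risk owner is an illustrative assumption rather than a prescribed rule; findings on unknown or unowned assets are flagged instead of silently dropped.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class AIAsset:
    asset_id: str
    business_owner: Optional[str] = None
    technical_owner: Optional[str] = None
    risk_owner: Optional[str] = None

    def missing_owners(self) -> List[str]:
        """List the ownership roles that have not been assigned yet."""
        roles = {
            "business": self.business_owner,
            "technical": self.technical_owner,
            "risk": self.risk_owner,
        }
        return [role for role, owner in roles.items() if not owner]


@dataclass
class Finding:
    asset_id: str
    description: str  # e.g. an assessment result or an OWASP LLM Top 10 category
    status: str = "open"
    assignee: Optional[str] = None


def route_finding(finding: Finding, inventory: Dict[str, AIAsset]) -> Finding:
    """Assign a finding to the asset's risk owner (an assumed routing rule)."""
    asset = inventory.get(finding.asset_id)
    if asset is None:
        # Unknown asset: it must be inventoried before remediation can be owned.
        finding.status = "needs-inventory"
    elif not asset.risk_owner:
        # Known asset but nobody accountable: escalate the ownership gap.
        finding.status = "needs-owner"
    else:
        finding.assignee = asset.risk_owner
        finding.status = "assigned"
    return finding
```

In this sketch, the `needs-inventory` and `needs-owner` states are what keep a finding from stalling: each points to the missing visibility or ownership that has to be fixed first.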

Concerns that AI governance slows innovation are widespread, yet unclear governance structures tend to hinder progress far more than explicit rules. Vague expectations push organizations either to halt their initiatives or to work outside established systems, which lets shadow AI flourish. By clarifying where AI exists, who owns it, and how risk decisions are put into practice, organizations can move governance from theoretical discussion to practical implementation. AI Exposure Management is the connective tissue that lets governance keep pace with modern AI adoption, so that organizations move beyond documenting risk to actively managing it.
