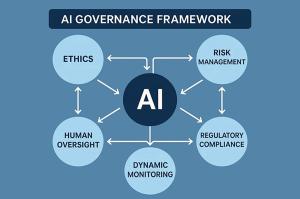

As artificial intelligence (AI) becomes increasingly integrated into business decision-making processes, the associated issues of accountability, liability, and insurability are evolving from theoretical discussions to practical necessities. Corporate leaders, risk managers, and insurers are now confronted with the pressing question of how to manage the risks that AI presents in a landscape where legal, regulatory, and technical frameworks are still in flux.

This timely discourse, led by Professor Anat Lior as part of Willis Towers Watson’s ongoing research into AI liability through the Willis Research Network, builds upon prior analyses from Insuring the AI Age. It draws from extensive empirical research and engages closely with insurers, regulators, and technology developers to shed light on how liability for AI-related harm is beginning to take form. The findings highlight the gaps in existing insurance models, the emergence of new strategies, and the areas still shrouded in uncertainty.

For organizations currently implementing or underwriting AI systems, understanding these dynamics is crucial. Coverage gaps, regulatory divergence, litigation risks, and the evolving role of insurance are all critical themes that speak to the practical implications of AI liability today.

Traditional insurance frameworks have yet to fully categorize AI-related risks. Research by Professor Lior indicates that some insurers remain cautious, citing a lack of claims data and relying on existing technology or cyber policies, while others, particularly startups and innovation-focused departments, are actively developing AI-specific solutions. For risk managers, this suggests the market is still in an experimentation phase, where ambiguities and coverage gaps persist, especially for novel AI applications that fall outside well-defined areas such as autonomous vehicles.

Compounding these challenges is a fragmented regulatory environment. The EU AI Act, whose obligations are being phased in, aims to reshape compliance expectations, yet its practical enforcement and implications for insurance remain uncertain. In the United States, the absence of a cohesive regulatory framework adds another layer of complexity. Risk managers are advised to keep a close watch on legislative developments and on insurers’ responses, as changes in regulation could rapidly alter liability exposures and policy requirements.

Moreover, traditional risk models are increasingly being called into question. Existing actuarial methodologies may not adequately capture the complexities associated with AI, particularly as new technologies and use cases emerge. While some risks can still be managed with conventional approaches, others, such as those posed by generative AI or autonomous systems, demand new thinking. One potential solution is the emergence of guarantee policies, which respond when an AI system fails to perform as promised rather than when an accident gives rise to liability. This evolution prompts risk managers to evaluate whether current policies adequately address the unique characteristics of AI or whether tailored solutions are necessary.

Litigation trends are also reshaping the insurance landscape. High-profile legal cases involving generative AI and liability for AI-related harm are increasingly affecting underwriting and policy design. Insurers are becoming more attentive to the outcomes of such litigation, which may establish precedents for coverage and compensation. For risk managers, staying abreast of these trends is essential for anticipating potential claims and ensuring that coverage remains robust.

Insurance can play a pivotal role in fostering safe AI adoption by providing a financial safety net for unforeseen outcomes. However, the conversation underscores the need for closer collaboration between insurers, technology experts, and regulators. Risk managers may find it beneficial to advocate for explicit policy language on “silent AI” risks, meaning AI-related exposures that existing policies neither expressly cover nor expressly exclude, and to seek affirmative coverage statements from insurers. Engaging in industry forums and multidisciplinary dialogues can help organizations navigate emerging risks and the evolving regulatory landscape.

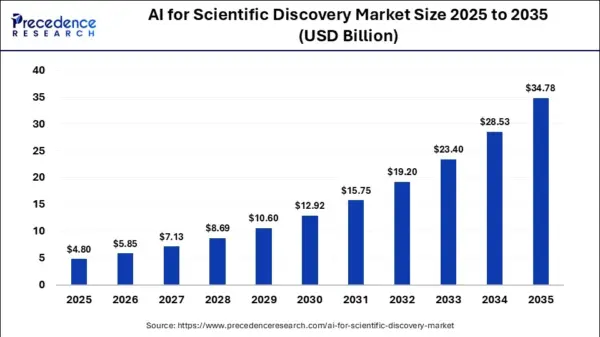

Looking to the future, emerging technologies such as quantum computing are expected to further complicate the risk landscape. The intersection of AI and quantum technology will introduce new uncertainties that require risk managers to remain adaptable and informed. The insurance market may split in how it addresses AI risk, with some insurers offering standalone policies and others incorporating AI risk into broader insurance products, depending on ongoing market and regulatory developments.

Taken together, these insights highlight a critical message for risk managers and insurers: AI liability is an immediate and dynamic concern, demanding proactive engagement. While the insurance sector is beginning to respond through experimentation and the introduction of new products, much of the landscape remains unsettled, particularly as novel applications of AI continue to emerge. Willis Towers Watson’s collaboration with Professor Lior reflects a broader commitment to equipping clients with the insights needed to navigate this uncertainty effectively. By merging legal scholarship with market intelligence and practical advisory expertise, this work aims to support organizations in anticipating liability exposure and shaping governance frameworks that foster innovation while upholding accountability.
