The rapid advancement of artificial intelligence is reshaping many aspects of modern life, but it also raises significant privacy concerns. Recent incidents involving major technology firms illustrate the precarious balance between digital innovation and personal privacy, a value that many Americans hold dear.

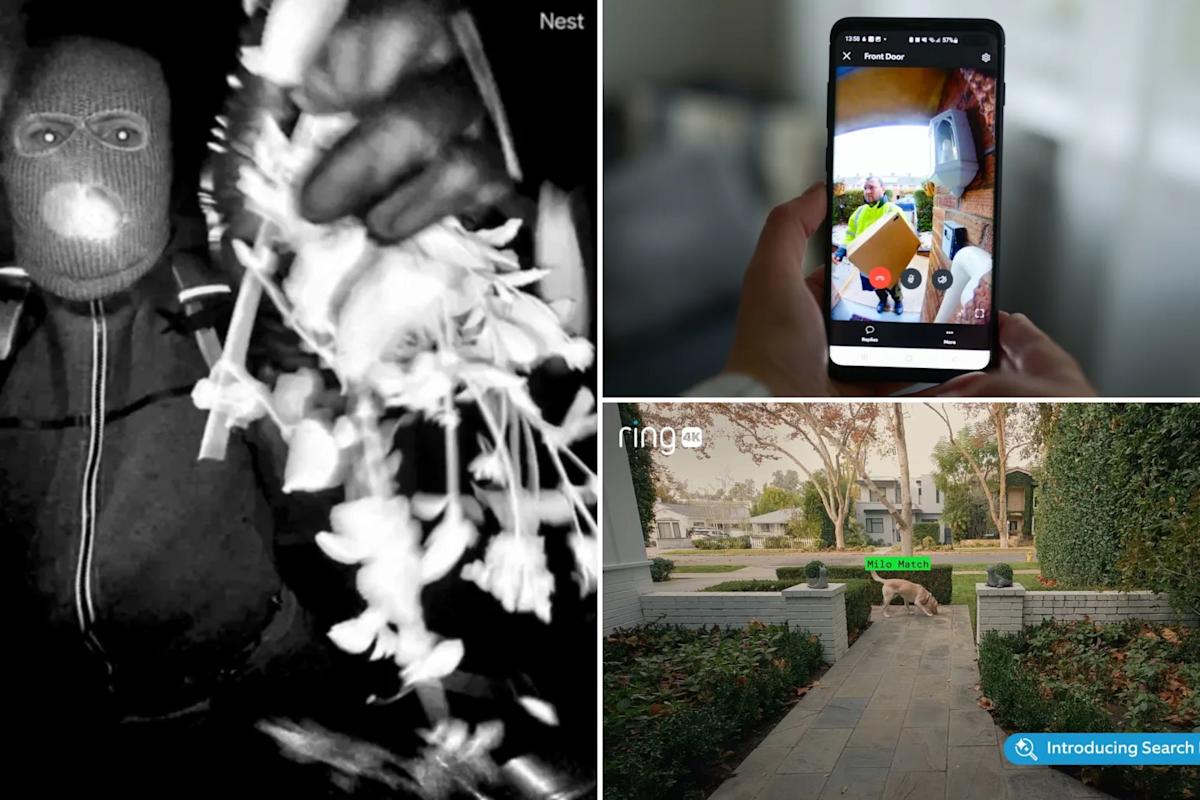

Amazon’s doorbell camera company, Ring, recently faced backlash over a Super Bowl advertisement that showed its technology helping track down a lost dog. Instead of celebrating the service, privacy advocates criticized the ad, seeing it as a step toward a pervasive, AI-enhanced surveillance system that law enforcement could exploit.

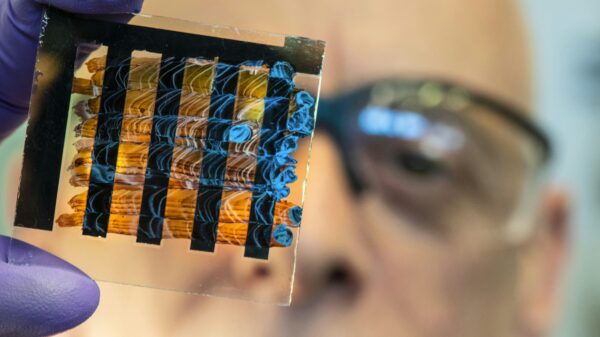

In a separate incident, the FBI’s recovery of footage from a Google Nest camera during an abduction case in Tucson raised eyebrows. Law enforcement initially said the footage was inaccessible because the family did not have a paid subscription, but later revealed that residual data in backend systems had made the recovery possible. The episode has raised questions about how much user data such companies retain and can retrieve.

Ring’s CEO has since apologized amid concerns about the reach of the company’s extensive camera network, even though monitoring homes and neighborhoods remains central to its business model. Following the controversy, Ring canceled its partnership with Flock Safety, a company that supplies license plate recognition technology to law enforcement.

Meanwhile, OpenAI, the company behind ChatGPT, faced criticism over its handling of a user account linked to alleged violent behavior: it banned the account but did not inform law enforcement. Critics argue that the personal data stored by AI chatbots and smart home devices raises serious privacy issues, because that information could be used against the very users who provided it.

As the popularity of doorbell cameras and AI systems surges, some consumers seem willing to sacrifice privacy for convenience. “The sales pitch is peace of mind, but the actual cost — your privacy — may be greater than you care to pay,” commented an industry expert.

Concerns over privacy are not new. Meta recently paid $725 million to settle claims over privacy violations, a sum some see as merely the cost of doing business for large tech firms. “Fines like this are like affirmations for these big tech firms,” said Sree Sreenivasan, CEO of Digi Mentors, noting that such penalties often look trivial next to corporate earnings.

Experts warn that U.S. privacy laws are insufficient to protect consumers. Peter Jackson, a privacy attorney, pointed out that most people do not clearly understand how exposed they are to data breaches and misuse. Current penalties for violations are also seen as inadequate, especially when companies such as The Walt Disney Company settle for amounts that are small relative to their overall revenue.

Critics describe the erosion of privacy as built into many tech companies’ business models, which treat consumer data as a valuable commodity. Arash Vakil, a business professor, remarked that users have grown accustomed to trading their data for free services, often without fully realizing the implications. “If the product is free, then you are the product,” he cautioned.

Despite these concerns, many consumers seem resigned to the trade-off, since opting out of modern conveniences increasingly means opting out of contemporary life. “People want all the convenience and all the privacy but don’t do anything about the privacy,” Sreenivasan noted. This dynamic has roots in earlier internet habits, such as accepting cookies in exchange for a smoother online experience.

As AI technologies evolve, the privacy questions they raise grow more complex. It is often unclear whether what users share with AI chatbots stays confidential. “There’s a personality there that’s driving these responses,” observed Michel Paradis, a lawyer specializing in AI law, stressing the risks of disclosing sensitive information to chatbots.

Tech companies still face the challenge of balancing innovation and privacy responsibly. With lawmakers slow to address these issues, the future of privacy remains uncertain. Experts such as Paradis are cautiously optimistic, suggesting that society will eventually adapt, as it has to other transformative technologies. The path forward, however, will require grappling with the implications of an increasingly connected digital world.
