A bipartisan coalition of researchers, former officials, and civic leaders has unveiled a detailed roadmap for artificial intelligence governance, arguing that the United States can no longer afford to improvise its approach. The Pro-Human Declaration, endorsed by hundreds of prominent figures, sets out a strict, safety-first framework. It arrives amid a notable rift between the Pentagon and AI firm Anthropic, a dispute that has exposed the inadequacies of America’s current AI regulations.

According to organizers, public sentiment is shifting quickly toward support for regulatory “guardrails.” They cite national polling indicating that more than 95% of Americans oppose a reckless rush toward superintelligence. The open question is whether Congress will act on that sentiment, and the declaration aims to prompt it to do so.

The document presents a stark choice: the future of AI could either involve replacing human decision-making with opaque systems or developing AI that enhances human capabilities while ensuring people remain in control. It outlines five essential pillars: human control, anti-monopoly safeguards, protection of the human experience, civil liberties, and real legal accountability for developers.

Among its strongest provisions is a proposed moratorium on superintelligence development until a scientific consensus on safety is reached and explicit democratic authorization is secured. It also calls for mandatory “off-switches” and operational oversight for powerful AI models, as well as a prohibition on architectures that allow self-replication, autonomous self-improvement, or resistance to shutdown. The core message: do not build what cannot be controlled, and ensure safety before scaling.

The approach borrows from regulatory models in other sectors. Drug manufacturers, for instance, cannot market a compound without clinical evidence and regulatory approval. The declaration argues for comparable pre-deployment testing in AI, backed by standardized evaluations and auditable documentation. Existing efforts point in the same direction: the National Institute of Standards and Technology’s AI Risk Management Framework emphasizes testing and evaluation of AI systems, and the European Union’s AI Act requires conformity assessments before higher-risk systems reach the market. The proposed roadmap aims to bring U.S. practice in line with these frameworks.

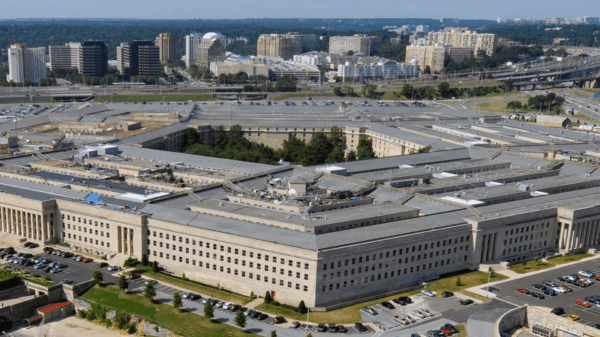

The timing of the declaration is significant: it coincides with an unusual public dispute over control of advanced AI technologies. After a clash over access terms, the Pentagon designated Anthropic a “supply chain risk,” while OpenAI reached a separate arrangement with defense officials. The episode showed how vendor policies, rather than democratically enacted rules, currently set the boundaries of government use and the safety measures that apply to it. As one policy analyst noted, it marks a pivotal debate over who controls advanced systems.

Child safety is a central concern for the coalition. The declaration calls for mandatory pre-release testing of chatbots and companion apps aimed at minors, to assess risks including self-harm prompts and emotional manipulation. With federal surveys showing record levels of depressive symptoms among teens, the case for minimum safety standards, the AI equivalent of seat belts, grows more compelling.

The list of signatories is intentionally diverse, spanning former national security leaders, technologists, and figures from both conservative and progressive backgrounds. Their shared position: regardless of political affiliation, humans must retain final authority over systems that can influence markets, national defense, and the information landscape.

Implementation Strategies

Implementing these guidelines would not require building policy from scratch. The White House has already mandated reporting for high-compute training runs and safety testing for dual-use capabilities. Regulators could tie these thresholds to standardized third-party evaluations, require incident reporting for model failures, and mandate auditable training and inference logs for advanced systems.

Accountability measures could include applying product liability and negligence standards to AI-related harms, requiring safety cases before deployment in high-risk settings, and mandating independent red-teaming and post-market surveillance. Insurers may become important enforcers: by pricing risk and demanding stronger controls as a condition of coverage, they could speed the adoption of best practices without the need for new regulatory bodies.

The roadmap also addresses the concentration of power in the AI industry, where a handful of companies control most of the computing resources and data. To counter this, it proposes opening access to secure public computing through national labs, expanding privacy-preserving data partnerships, and requiring interoperability, measures that could support safety research while reducing reliance on a few dominant players. Internationally, cooperation through standards bodies and shared model-evaluation benchmarks could help close regulatory loopholes.

As AI safety rules take shape, there are trade-offs to weigh. Critics argue that a moratorium on certain research could hinder innovation or push it overseas, while supporters contend that unregulated development could create bigger problems. With the latest Stanford AI Index putting private AI investment in the tens of billions of dollars annually, investors have a strong interest in regulatory clarity, and predictable rules can stabilize progress rather than stifle it. The World Economic Forum estimates that 44% of workers’ skills may be disrupted in the coming years, underscoring the need for worker upskilling and transition strategies alongside any regulatory framework.

Even proponents of the declaration acknowledge the challenges ahead: establishing a scientific consensus on the risks of superintelligence, defining what counts as “self-replication” in code, and ensuring genuine public involvement in the process. They frame these as engineering and governance hurdles, not reasons for inaction. The central question remains: can developers provide evidence that powerful systems operate within human-defined limits, and can society reliably shut them down if they do not?

The roadmap calls on policymakers to codify pre-deployment testing, define what human control means in practice, and secure democratic consent before capabilities advance further. In an industry known for its speed, the declaration urges deliberation: make informed choices while there is still time to make them.
