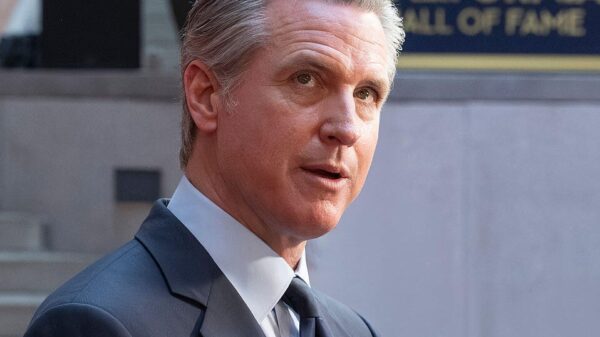

California Governor Gavin Newsom signed an executive order on Monday aimed at strengthening the state's approach to vetting and procuring artificial intelligence (AI) technologies. The move puts California in direct opposition to the national AI policy framework recently announced by the Trump administration. As the world's fourth-largest economy and home to 33 of the top 50 privately held AI companies, California is unusually well positioned to challenge federal directives.

The executive order directs California's Department of General Services and Department of Technology to create new certification requirements for AI companies that want to do business with the state. Within 120 days, these agencies are expected to propose standards requiring vendors to demonstrate safeguards against illegal content and algorithmic bias, as well as protections for civil rights, including free speech and freedom from unlawful surveillance.

In contrast, the White House’s AI policy framework, released earlier this month, advocates for limiting state-level regulations that it deems “burdensome.” The Trump administration argues that AI development is an interstate issue with significant foreign policy and national security implications, asserting that individual states should not have the authority to impose their own regulations.

Newsom's executive order directly counters this federal stance by instructing the state's Chief Information Security Officer to scrutinize any federal designation of an AI company as a supply chain risk. The order allows California to override such a designation if it is deemed inappropriate. In practice, if the Trump administration seeks to prevent California from purchasing AI products from a particular vendor, the state retains the right to continue those purchases.

The federal framework adopts a more hands-off approach, opposing the establishment of a new federal regulatory body for AI and instead prioritizing industry-led standards and current regulatory agencies. While it acknowledges important issues like child safety and intellectual property, the framework’s principal concern is maintaining U.S. leadership in the global AI landscape.

In a significant departure from the federal approach, Newsom's order emphasizes consumer protection. It requires companies to explain in writing how they prevent misuse of their technology before selling to the state. The order also calls for guidelines on watermarking AI-generated images and videos, likely a response to the growing number of deepfakes circulating online.

As a financial incentive for AI companies, the executive order calls for the development of a public-facing AI tool to help Californians navigate government services tied to life events such as searching for a job or applying for disaster relief. This initiative could generate substantial revenue for the AI companies that supply the underlying technology.

The question of federal preemption remains unresolved. Should Congress act on the White House recommendations and enact legislation to override state AI laws, California’s executive order could face legal challenges. As of now, the contrasting views on AI regulation reveal a growing divide: Washington favors rapid innovation with minimal oversight, while Sacramento insists on accountability and ethical practices for AI companies seeking state contracts.

The ongoing conflict illustrates the complexities of AI governance, with consumers caught in the crossfire of a growing debate that is far from settled. As both sides prepare for a protracted confrontation, the future of AI regulation hangs in the balance, with implications that could shape the industry for years to come.