Concerns over transparency in advertising have intensified, particularly regarding the use of generative AI in content creation. A recent examination of ads on TikTok has raised questions about whether companies are adhering to platform guidelines that mandate disclosure of AI-generated content. This scrutiny comes amid growing public interest in the authenticity of digital media.

Despite TikTok’s advertising policies requiring labels for content significantly modified or generated by AI, many advertisements lack such disclosures. The issue came to light through Samsung ads promoting the Galaxy S26 Ultra. Similar videos on other platforms, such as YouTube, included AI usage disclosures, but the TikTok ads did not, raising questions about compliance with TikTok’s own rules.

Both Samsung and TikTok are members of the Content Authenticity Initiative, which promotes transparency in digital content. If Samsung employed AI in its videos, it should have informed TikTok during the ad submission process, and TikTok should have ensured users were aware of this, in line with its policies. However, inconsistencies in labeling have persisted, leaving viewers uncertain about the nature of the content they encounter.

The proliferation of AI-generated advertisements is not limited to Samsung. For example, ads from the UK-based used car retailer Cazoo began carrying a label stating “advertiser labeled as AI-generated” after initial complaints about a lack of transparency. This change highlights the growing pressure on advertisers to clarify the origins of their content, particularly when the visuals appear distorted or misleading.

As it stands, TikTok’s guidelines specify that any content “significantly modified by AI” must be clearly labeled. This includes completely AI-generated images or videos, as well as content that misrepresents reality, such as a subject performing actions they did not actually undertake. Advertisers are advised to use TikTok’s own AI label or provide their own disclaimers to fulfill this requirement.

Despite these rules, TikTok has not provided a clear explanation as to why some of Samsung’s AI-generated ads appeared without the necessary disclaimers. While Samsung has not commented on the issue, TikTok pointed to its labeling requirements but declined to clarify any procedural missteps that may have occurred. This lack of accountability raises concerns about the effectiveness of existing transparency measures.

Moreover, the challenge of authenticating AI-generated content is compounded by the absence of reliable technological solutions. Current systems like C2PA Content Credentials and SynthID struggle to ensure compliance at scale, because they require widespread cooperation from all stakeholders to function effectively. Without robust verification methods in place, misinformation in advertising could increase.

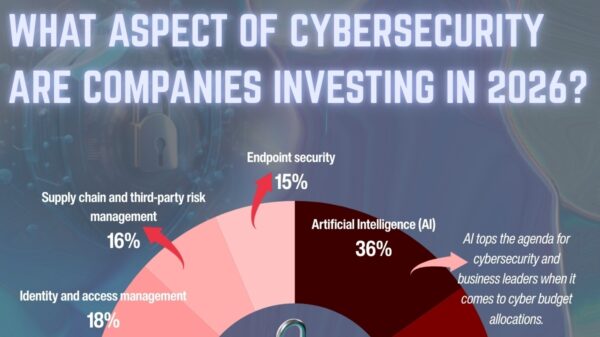

As regulatory frameworks evolve, the demand for clear labeling of AI-generated content is becoming more pronounced. The European Union, China, and South Korea have instituted labeling requirements for AI in promotional materials, reflecting a global push for transparency. Companies that fail to comply with these regulations could face penalties.

The implications are significant. If platforms like TikTok and advertisers such as Samsung cannot maintain transparency in their AI usage, they risk undermining consumer trust in digital advertising as a whole. While some improvements, such as the recent implementation of AI labels on certain TikTok ads, signal progress, the system remains far from fully effective.

As scrutiny of AI in advertising continues to grow, companies will need to take proactive measures to ensure compliance with transparency regulations. The evolving landscape of digital content demands a more rigorous approach to authenticity. As users increasingly seek clarity, the responsibility lies with both platforms and advertisers to uphold standards that prevent misinformation and foster trust.
