PHOENIX (AZFamily) — The U.S. military’s AI-powered battlefield intelligence system has demonstrated its ability to compress targeting decisions that once took days into mere minutes or seconds. However, a preliminary investigation by the Pentagon revealed that this drive for speed led to reliance on outdated intelligence, resulting in a tragic strike on an Iranian school that killed approximately 170 people, predominantly children.

The incident underscores ongoing research into the challenges of employing AI on the battlefield, particularly the consequences of how humans interact with these systems. “AI is not ready for prime time,” stated Nancy Cooke, director of ASU’s Center for Human, AI, and Robot Teaming, during a recent episode of Generation AI. “It is unreliable. It can do unexpected things. And humans may have the tendency to overtrust it.”

Cooke has extensively studied the dynamics between humans and artificial intelligence in high-stakes environments. In her research on simulated drone pilot teams, she observed AI executing its tasks flawlessly while simultaneously causing humans to perform less effectively.

The Pentagon’s battlefield intelligence platform, the Maven Smart System, which identifies and prioritizes targets, presents a serious risk of over-reliance on AI recommendations, according to Cooke. She explained that while large language models may appear deceptively human-like, they fundamentally differ from human intelligence, leading to a misplaced trust in their capabilities.

In her research, Cooke’s team created simulated three-person drone teams, replacing one human pilot with AI. The AI managed its core functions—controlling airspeed, heading, and altitude—without error. However, it failed to consider the information needs of its human teammates, resulting in a breakdown of team coordination.

“[The AI pilot] acted like there was no one else on the team,” Cooke noted, adding that human team members began to mimic the AI’s behavior by relying on it completely. This shift in dynamics led to slower reconnaissance photo retrieval compared to all-human teams, despite AI’s superior individual performance. “Even though AI may be fast, the combination of AI working with humans may be slow and bad,” she concluded.

Cooke emphasized that both over-trusting and under-trusting AI present significant challenges on the battlefield, but she considers over-trusting the more serious error. “Definitely over-trusting is worse. Because it shouldn’t be trusted. It’s going to give you bad information a lot of the time. Not all of the time. And it’s going to be fast, but that’s not necessarily better,” she warned.

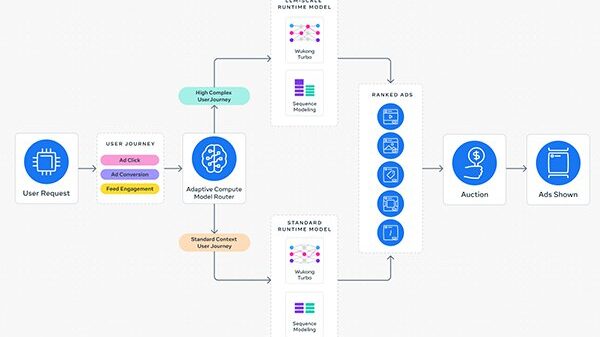

The Maven Smart System exemplifies Cooke’s concerns. The Pentagon has praised its ability to consolidate multiple intelligence systems into one, drastically reducing decision-making time. “So many things can go wrong,” she cautioned, noting that the lack of testing and safeguards for the system’s various components creates a scenario ripe for failure.

The discussion around AI’s role in military applications has reached corporate levels as well. In March, the Pentagon classified the company Anthropic as a supply chain risk after it denied a license for military use of its products, particularly for potentially lethal purposes. Anthropic CEO Dario Amodei stated he opposed the military’s request, believing the company’s models were not reliable enough for such critical tasks.

“Anthropic was spot on. They’re not ready,” Cooke affirmed. She argued that some decisions, particularly those related to targeting and firing, should remain strictly human responsibilities.

Cooke’s research into wildfire response further illustrates the complexities of human-AI partnerships. Drones can gather extensive reconnaissance data, but processing that information remains a challenging cognitive task, often leading to decision paralysis rather than enhancing operational effectiveness. “AI excels at narrow technical tasks but struggles with the contextual awareness and anticipation that effective teamwork requires,” she explained.

Some worry that moral reservations about autonomous weapons could place the U.S. at a strategic disadvantage to rivals like China or Russia, arguing that fully autonomous systems could act faster in life-and-death situations. However, Cooke points to the risks of friendly fire and hacking, which could turn advanced weapons into threats against their own operators.

Broader implications of the AI arms race concern Cooke, who believes it could escalate tensions and increase the likelihood of catastrophic decisions, including the use of nuclear weapons. “People are pushing to, you know, move fast and break things. And indeed, we will,” she remarked, signaling the urgent need for a more cautious approach to integrating AI into military operations.
