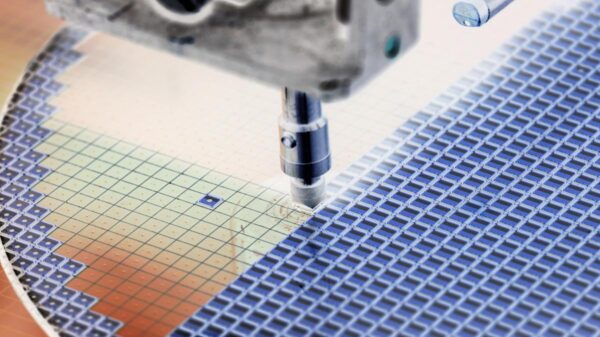

As artificial intelligence models like ChatGPT and Gemini continue to advance, their appetite for RAM has contributed to a global memory shortage and soaring chip prices, affecting everything from data centers to consumer laptops. A recent breakthrough from Google, known as TurboQuant, promises to ease that pressure.

Unveiled in advance of the ICLR 2026 conference, TurboQuant is a compression algorithm tailored specifically for Large Language Models (LLMs). Google claims the method can cut the memory required to operate an AI model to as little as one-sixth of what it was, allowing the AI to retain its previous computations using a fraction of the physical hardware it previously needed.

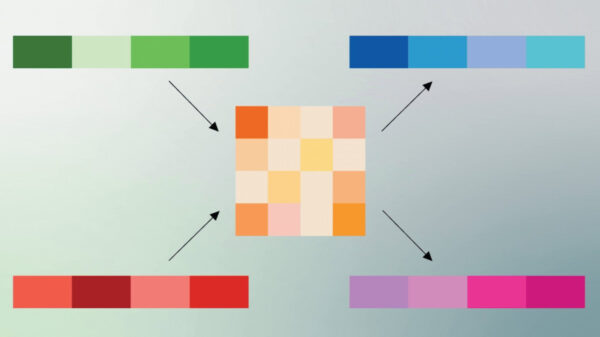

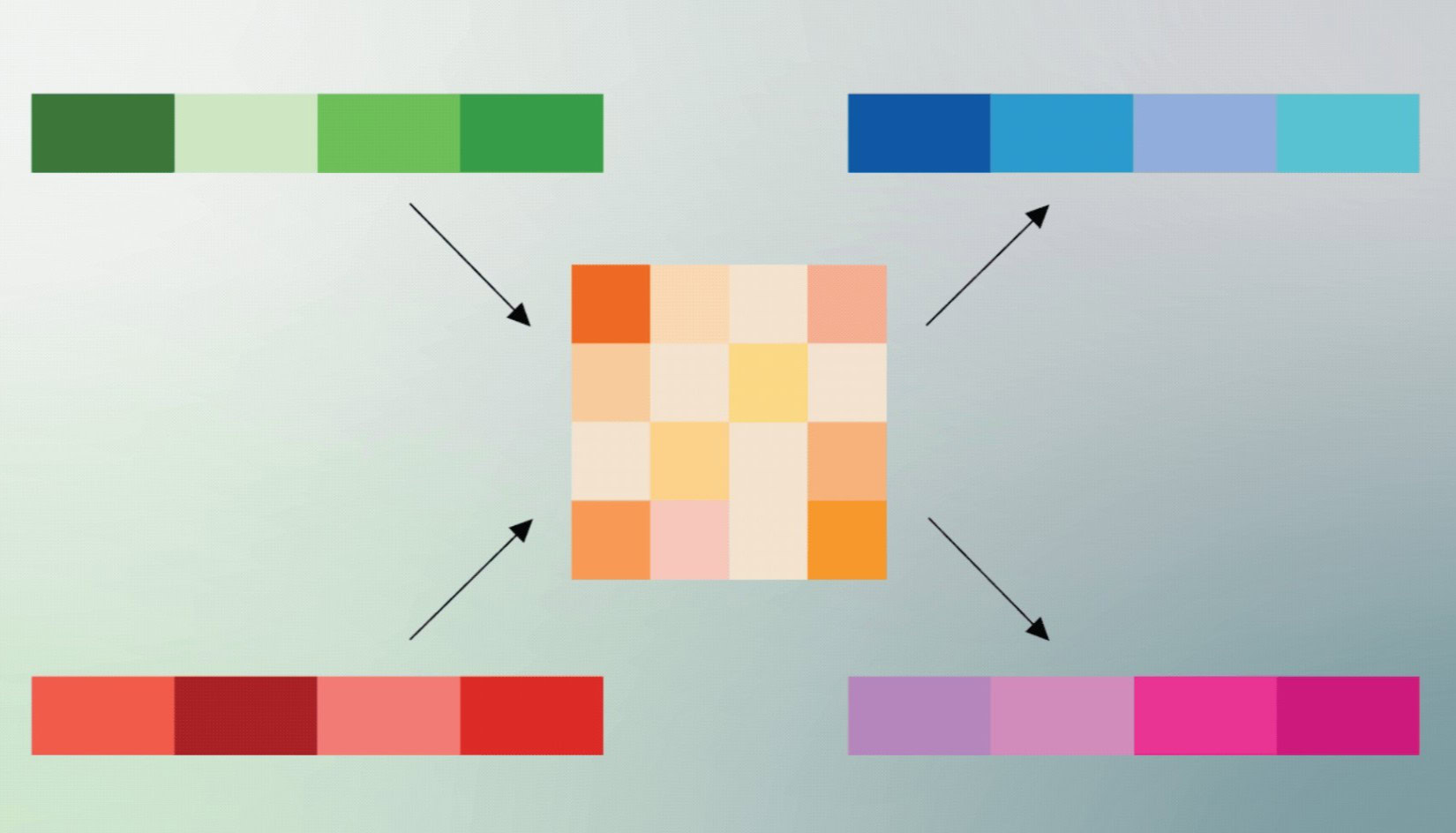

The efficiency of TurboQuant is particularly noteworthy. AI models typically use a “Key-Value cache” to store context, which avoids reprocessing an entire conversation with each new inquiry. This cache, however, is notorious for consuming large amounts of RAM. According to TechCrunch, TurboQuant employs advanced “quantization” techniques, which store the AI's data at lower numerical precision while, by Google's account, preserving accuracy. The result is akin to packing a suitcase so efficiently that it holds six times as many items in the same space. Google asserts these techniques operate near “theoretical lower bounds,” meaning they come close to the best compression that is mathematically achievable for this kind of data.
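To make the idea of quantization concrete, here is a minimal sketch of plain uniform quantization applied to a list of numbers standing in for cached values. It is not TurboQuant's algorithm, whose details are not described here; the function names, the 4-bit setting, and the 16-bit baseline are assumptions chosen purely for illustration.

```python
import random


def quantize(values, bits):
    """Map floats to integer codes in [0, 2**bits - 1] (uniform quantization)."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    # Guard against a constant vector, where hi == lo.
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    """Recover approximate floats from the integer codes."""
    return [lo + c * scale for c in codes]


random.seed(0)
# Hypothetical stand-in for one cached vector; real caches hold many of these.
cached = [random.gauss(0.0, 1.0) for _ in range(1024)]

codes, lo, scale = quantize(cached, bits=4)
restored = dequantize(codes, lo, scale)

# The worst-case rounding error is about half of one quantization step.
max_err = max(abs(a - b) for a, b in zip(cached, restored))

# Assumed baseline of 16 bits per value, ignoring the small cost of lo and scale.
ratio = 16 / 4

print(f"max error: {max_err:.4f}, step: {scale:.4f}, compression: {ratio:.0f}x")
```

Each value shrinks from 16 bits to 4, at the cost of a small rounding error. More sophisticated schemes aim for a better trade-off between bits used and accuracy lost than this simple approach.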

The announcement of TurboQuant sent shockwaves through the stock market, hitting major memory chip manufacturers such as Samsung, SK Hynix, and Micron, whose shares saw significant declines. Investors are concerned that if AI models can suddenly cut their memory requirements by more than 80%, the relentless demand for high-cost RAM chips might finally diminish.
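The two figures in circulation, a sixfold reduction and a saving of around 80%, are close but not identical. The short sketch below shows the arithmetic that links a reduction factor to a percentage saving; the function names are illustrative only.

```python
def percent_saved(reduction_factor):
    """Percentage of memory saved when usage shrinks by the given factor."""
    return (1 - 1 / reduction_factor) * 100


def factor_for_saving(percent):
    """Reduction factor implied by a given percentage saving."""
    return 1 / (1 - percent / 100)


print(f"6x reduction saves {percent_saved(6):.1f}% of memory")   # roughly 83.3%
print(f"80% saving equals a {factor_for_saving(80):.1f}x reduction")  # roughly 5x
```

In other words, a full sixfold reduction would save slightly more than 83% of the memory in question, while an 80% saving corresponds to a fivefold reduction.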

Despite the optimism surrounding TurboQuant, many analysts caution that the so-called “RAM crisis” is not resolved. While this breakthrough enhances current models’ efficiency, it also paves the way for the development of even more ambitious AI projects. Experts from SemiAnalysis pointed out to CNBC that removing a bottleneck often leads developers to create more powerful systems that will eventually utilize the additional available resources.

While TurboQuant represents a significant laboratory achievement, it is not yet ready for widespread use in consumer technology. Large-scale deployment is expected to take time, especially since major corporations have already locked in many memory contracts for the upcoming year. Even so, the breakthrough offers a much-needed ray of hope in the ongoing global RAM shortage.

If artificial intelligence can achieve sixfold improvements in efficiency through software innovations alone, there is potential for a notable easing of the supply crunch well before the decade concludes. The implications of this advancement extend beyond just memory markets, as it could redefine the capabilities of AI technology in the coming years.
