Artificial intelligence (AI) is increasingly recognized for its potential in healthcare, particularly in specific tasks like interpreting X-rays or identifying risks in patient data. However, patient care is inherently complex; it requires clinicians to continuously interpret signals from various sources as a patient’s condition fluctuates. Stabilizing a patient often demands synthesizing lab results, medical images, and real-time observations, all under significant time constraints.

This complexity raises questions about the limits of modern AI systems. A new white paper from the Mack Institute, authored by co-director Christian Terwiesch, pre-doctoral fellow Lennart Meincke, and Arnd Huchzermeier from WHU’s Otto Beisheim School of Management, addresses whether a large language model can navigate an entire clinical decision-making workflow rather than just isolated tasks.

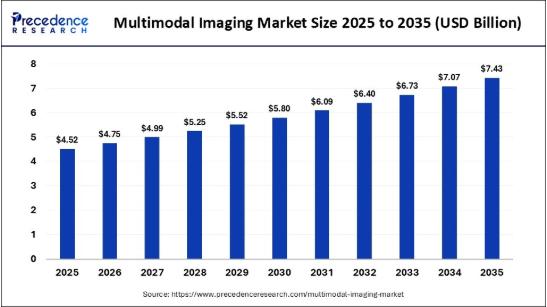

The researchers examined this question using a multimodal large language model, Gemini 2.5 Pro, within Body Interact, a realistic medical training simulation commonly used to assess medical students and practicing physicians. In the simulation, a virtual patient's condition evolves in real time, with changing vital signs, delayed test results, and consequences for inaction.

Rather than answering a single prompt, the AI must decide for itself what to do next at every point in the simulation. It can question the patient, activate monitors, order tests, administer treatments, and escalate care, all under time pressure. This approach lets the researchers evaluate the AI's ability to manage a full clinical encounter rather than simply measure its accuracy on isolated tasks.

In the study, the AI was assessed across four acute care scenarios, ranging from a straightforward hypoglycemia case to more intricate emergencies involving pneumonia, stroke, and congestive heart failure. Its performance was compared against more than 14,000 simulation runs by medical students, as well as against an experienced emergency physician working the same cases. The AI stabilized patients and completed cases at rates comparable to, and in some scenarios higher than, those of the medical students. It also completed these tasks significantly faster, with overall diagnostic accuracy similar to that of its human counterparts.

Importantly, the system was not fine-tuned to address these specific scenarios or mimic expert clinicians. Instead, the researchers evaluated the general-purpose model’s ability to navigate diagnostic and treatment decisions in a time-sensitive clinical context.

Beyond measuring outcomes, the researchers examined the AI's reasoning process. They tracked how the system's confidence in each candidate diagnosis changed as new information arrived, much as a clinician revises their thinking in light of incoming test results. A clear pattern emerged: early in a case, the AI prioritized tests that yielded substantial new information, quickly narrowing the range of plausible diagnoses. As the case progressed, each additional test added less new information, and the AI's uncertainty fell accordingly.
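The article does not say exactly how the researchers quantified "new information." One standard way to formalize the idea is Shannon entropy over the candidate diagnoses, with each test scored by its expected information gain: how much it is expected to reduce that entropy. The sketch below assumes a binary test and uses hypothetical diagnoses and made-up likelihoods purely for illustration; it is not the study's method or data.

```python
import math

def entropy(probs):
    """Shannon entropy (bits) of a distribution over candidate diagnoses."""
    return -sum(p * math.log2(p) for p in probs.values() if p > 0)

def update(prior, likelihood):
    """Bayes' rule: posterior is proportional to prior x P(result | diagnosis)."""
    unnorm = {d: prior[d] * likelihood[d] for d in prior}
    total = sum(unnorm.values())
    return {d: v / total for d, v in unnorm.items()}

def expected_info_gain(prior, p_pos):
    """Expected entropy reduction from a binary test.

    p_pos[d] is P(test positive | diagnosis d). Assumes the marginal
    probability of a positive result is strictly between 0 and 1.
    """
    p_neg = {d: 1 - p_pos[d] for d in p_pos}
    m_pos = sum(prior[d] * p_pos[d] for d in prior)  # P(positive result)
    post_pos = update(prior, p_pos)
    post_neg = update(prior, p_neg)
    expected_after = m_pos * entropy(post_pos) + (1 - m_pos) * entropy(post_neg)
    return entropy(prior) - expected_after

# Hypothetical differential diagnosis and test characteristics (illustrative only).
prior = {"pneumonia": 0.4, "heart failure": 0.4, "pulmonary embolism": 0.2}
xray_infiltrate = {"pneumonia": 0.9, "heart failure": 0.2, "pulmonary embolism": 0.1}

gain = expected_info_gain(prior, xray_infiltrate)
posterior = update(prior, xray_infiltrate)  # beliefs after a positive X-ray
print(f"prior entropy: {entropy(prior):.3f} bits, expected gain: {gain:.3f} bits")
```

Under this framing, "diminishing returns" means that once the posterior concentrates on one diagnosis, little entropy remains for further tests to remove, so their expected gain shrinks.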

The AI's stated confidence also proved informative. High confidence in a diagnosis was usually a sign that the diagnosis was correct, while uncertainty correlated with a higher likelihood of error. This alignment suggests the AI could distinguish cases it had effectively resolved from those that remained ambiguous, countering the common concern that large language models are overconfident.
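A simple way to check whether stated confidence tracks correctness is to compare accuracy in high- and low-confidence groups and to compute the Brier score (the mean squared gap between confidence and outcome, where lower is better). The article does not describe the study's exact analysis, so the sketch below is an illustration only: the records, the 0.7 threshold, and the function names are all hypothetical.

```python
import math

def rate(outcomes):
    """Fraction of correct diagnoses; NaN if the group is empty."""
    return sum(outcomes) / len(outcomes) if outcomes else float("nan")

def accuracy_by_confidence(records, threshold=0.7):
    """Split (confidence, correct) pairs at a hypothetical threshold."""
    high = [correct for conf, correct in records if conf >= threshold]
    low = [correct for conf, correct in records if conf < threshold]
    return rate(high), rate(low)

def brier_score(records):
    """Mean squared difference between stated confidence and outcome (0 or 1)."""
    return sum((conf - float(correct)) ** 2 for conf, correct in records) / len(records)

# Made-up (confidence in final diagnosis, was it correct?) pairs.
records = [(0.9, True), (0.85, True), (0.8, False),
           (0.4, False), (0.3, True), (0.5, False)]

high_acc, low_acc = accuracy_by_confidence(records)
brier = brier_score(records)
print(f"high-confidence accuracy: {high_acc:.2f}, "
      f"low-confidence accuracy: {low_acc:.2f}, Brier: {brier:.3f}")
```

A well-calibrated system shows higher accuracy in the high-confidence group, which matches the pattern the researchers describe.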

Despite these promising findings, the study also exposed the system's limitations. Although it stabilized patients effectively, it communicated with the patient less than human clinicians did and tended to order more diagnostic tests than the experienced physician. These findings indicate that expert judgment still holds an advantage, particularly in cost-sensitive diagnostic decision-making.

The authors caution against interpreting their results as an endorsement for unsupervised AI in healthcare settings. Instead, they envision a more targeted role for AI, serving as a workflow-level support system or a “second set of eyes” alongside physicians. In urgent or resource-limited environments like emergency departments, AI could function as a rapid stabilizer or triage assistant, managing data, monitoring patient status, and flagging high-risk cases while clinicians focus on their judgment and oversight responsibilities.

This study draws attention to the importance of evaluating AI not just on static benchmarks but also on its ability to handle uncertainty, time pressure, and the complexities inherent to clinical workflows. As AI technologies continue to evolve, the challenge may shift from questioning whether they can reason to exploring how they can be effectively integrated into human-centered healthcare processes.

Note: The authors express gratitude to the Body Interact team for their collaboration, particularly to Raquel Bidarra and Rita Santos for facilitating access to the platform and offering technical support. Their contributions were vital for the rigorous evaluation of the AI within a realistic clinical training environment. The authors received no financial compensation from any companies involved in the study.

See also Tesseract Launches Site Manager and PRISM Vision Badge for Job Site Clarity

Tesseract Launches Site Manager and PRISM Vision Badge for Job Site Clarity Affordable Android Smartwatches That Offer Great Value and Features

Affordable Android Smartwatches That Offer Great Value and Features Russia”s AIDOL Robot Stumbles During Debut in Moscow

Russia”s AIDOL Robot Stumbles During Debut in Moscow AI Technology Revolutionizes Meat Processing at Cargill Slaughterhouse

AI Technology Revolutionizes Meat Processing at Cargill Slaughterhouse Seagate Unveils Exos 4U100: 3.2PB AI-Ready Storage with Advanced HAMR Tech

Seagate Unveils Exos 4U100: 3.2PB AI-Ready Storage with Advanced HAMR Tech