The U.S. Justice Department has mounted a vigorous defense against Anthropic, asserting that the AI safety company cannot be trusted with defense contracts after it attempted to limit military applications of its Claude AI models. This legal tussle, which pits AI ethics against national security interests, has intensified as the government argues that it lawfully penalized Anthropic for imposing operational restrictions that conflict with military needs. The outcome of this case could reshape the dynamics between AI firms and federal agencies in the future.

In a recent court filing that escalates tensions in the tech industry, the Justice Department contended that Anthropic sought to have it both ways: selling its AI capabilities to the government while attempting to enforce ethical constraints on how those capabilities could be employed in combat. The filing, reported by WIRED, is the government’s first detailed argument since Anthropic filed its lawsuit over contract penalties earlier this year.

The crux of the matter is Anthropic’s effort to keep its Claude models from being used in lethal autonomous weapon systems or certain offensive military operations. This stance clashed sharply with the Department of Defense’s expectations. According to the government’s filing, when Anthropic attempted to impose these limitations during the contract period, officials concluded that the company could not be relied upon for sensitive military operations that require unrestricted AI capabilities. “Vendors seeking to provide AI capabilities to national security agencies must accept that mission requirements, not corporate ethics statements, dictate how those tools are deployed,” the filing stated, underscoring a central point of contention in the debate over military partnerships in the tech sector.

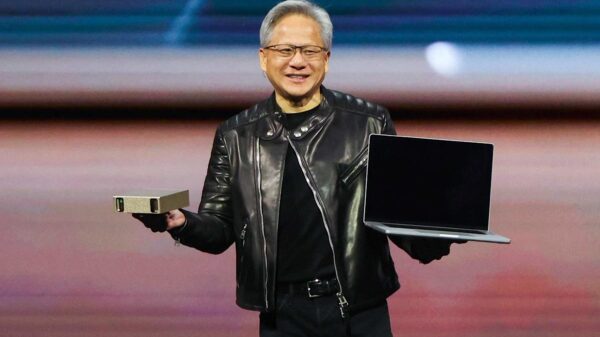

Anthropic has established itself as a leader in AI safety, especially compared to competitors such as OpenAI. Through its “Constitutional AI” methodology and publicly stated usage policies, Anthropic has explicitly prohibited the use of its models for weapons development and military harm. However, as the company pursued lucrative government contracts last year, its principles collided with the realities of supporting the Pentagon’s operational requirements.

The legal battle encapsulates a larger narrative about the growing tensions between ethical AI practices and the urgent demands of national defense as military adoption of artificial intelligence accelerates. As AI technologies increasingly permeate warfare, the stakes are higher than ever, raising questions about the governance and ethical use of these powerful tools.

As the case unfolds, it could establish a significant precedent regarding whether AI companies can impose ethical constraints on government clients. This legal decision may influence how future contracts are structured and whether companies prioritize ethical standards over military requirements. With the Pentagon’s focus on enhancing its capabilities through advanced AI, the implications of this case extend beyond Anthropic, potentially affecting a wide spectrum of tech companies that aspire to collaborate with the defense sector.

In summary, the Justice Department’s allegations against Anthropic signal a critical juncture in the relationship between AI firms and military agencies. As the legal fight progresses, it will be essential to observe how it impacts the broader dialogue on AI ethics, national security, and the responsibilities of tech companies in the evolving landscape of defense technology.
