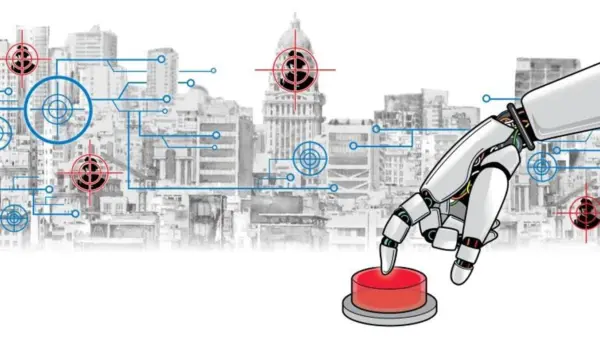

The increasing integration of artificial intelligence (AI) in military operations is raising profound ethical concerns about the future of warfare. A recent escalation in military activity against Iran by the United States and Israel, enabled by advanced AI technologies, raises questions about human accountability in combat decisions. Reports suggest that on February 28, US and Israeli forces launched an unprecedented operation, striking 1,000 targets within 24 hours, double the scale of military actions during the 2003 Iraq War and surpassing the initial strikes of Operation Desert Storm in 1991.

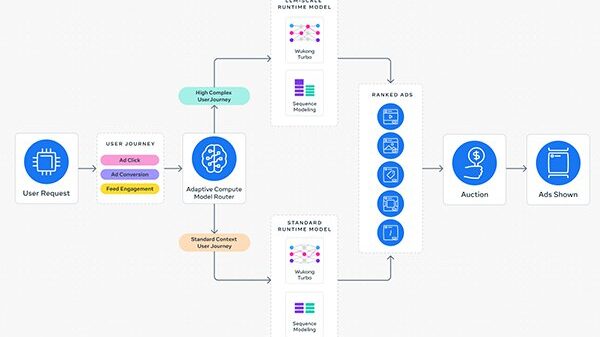

The efficiency of these airstrikes is largely attributed to the Maven Smart System, developed by Palantir in 2018. This AI-driven platform analyzes vast amounts of data to identify and prioritize military targets. In addition, the large language model Claude, created by Anthropic, has been integrated to process and synthesize frontline intelligence in real time, generating actionable targets for military operations.

Brad Cooper, head of US Central Command (CENTCOM), confirmed on March 11 that the military has employed various AI tools in the Iran conflict to enhance data processing capabilities, although he did not specify which ones. The Maven platform reportedly generates hundreds of potential targets and matches them with suitable military units and munitions based on strategic value, while also simulating tactical scenarios and assisting with battle damage assessments.

Israel’s military is similarly leveraging AI technologies, using systems such as Lavender and Gospel to inform target selection and geographical analysis during combat operations in Gaza. AI excels at processing data rapidly and significantly shortens the “kill chain,” the timeline from target identification to strike execution. Concerns about the implications of that speed nonetheless persist.

AI technologies can process immense datasets in mere minutes, allowing for quicker decisions that traditionally required extensive human analysis. This improvement reduces the manpower needed for such operations, enabling military personnel to focus on higher-level strategic decisions. Drones and ground robots equipped with AI are used for surveillance and combat tasks, enhancing the safety of soldiers by delegating dangerous responsibilities to machines.

However, experts warn that reliance on AI in military applications presents significant risks. AI systems are susceptible to hacking and manipulation, which could lead to the dissemination of false intelligence. Furthermore, the tendency of AI to produce plausible but inaccurate results — a phenomenon known as “hallucination” — poses a serious threat in high-stakes environments like warfare. Peter Bentley, an honorary professor at University College London, emphasized the difficulty in discerning whether AI-generated findings are factual or fabricated, which could lead to disastrous misjudgments in targeting decisions.

The ethical ramifications of AI in military contexts extend to accountability. If an AI system makes an error that leads to civilian casualties, who is responsible? Manoj Harjani, a research fellow at the S. Rajaratnam School of International Studies (RSIS), highlighted the complexities of assigning blame when AI operates independently. On the first day of the Iran conflict, a missile struck an elementary school and killed around 175 people, a strike attributed to outdated targeting data. The US has yet to acknowledge responsibility for this tragedy, stating only that an investigation is ongoing.

Cooper reiterated that final decisions regarding strikes remain in human hands, asserting that regardless of the investigation’s outcome, accountability lies with people, not AI systems. As military applications of AI advance, critics warn that failure to establish regulatory frameworks could lead to an arms race where autonomous weapons operate without sufficient oversight. While some nations engage in discussions regarding the ethical use of AI in military settings, progress toward binding international agreements remains slow.

The international community has debated the deployment of AI in armed conflict for over a decade, with forums such as the UN Group of Governmental Experts on Lethal Autonomous Weapon Systems (GGE on LAWS) examining relevant issues. However, as noted by Mei Ching Liu, an associate research fellow at RSIS, current discussions lack the mandate for legally binding treaties, limiting the potential for significant regulatory advancements.

Experts like Bentley stress that humans must retain control over AI systems, particularly in life-or-death scenarios. He likened the situation to driving a train, arguing that humans should stay at the controls rather than be carried along by the rapid military AI development that other nations are pursuing. As the world grapples with the implications of AI in warfare, the pressing question persists: will humanity retain control over these technologies, or will warfare be governed by machines devoid of ethical considerations?
