A Singapore-based data protection company has warned organizations that their confidential business information could end up training external artificial intelligence systems. The advisory follows a significant controversy surrounding the design platform Figma and a related class-action lawsuit filed in the United States.

The controversy began in 2024, when designers criticized Figma's Make Design feature, an AI tool that generates interface layouts, after it produced screens resembling Apple's iOS Weather app. The incident raised serious questions about whether Figma's AI features had been developed using user-generated content without the necessary consent. Although Figma ultimately disabled the tool and denied that customer files had been used for training, the company acknowledged that it had not sufficiently reviewed its design components before launch.

This year, new allegations emerged that millions of user-created designs, including corporate prototypes and product layouts, were used to build Figma's AI capabilities. These claims contributed to the ongoing class-action lawsuit in the U.S., in which plaintiffs argue that Figma breached confidentiality expectations and failed to adequately disclose its data-use practices.

In response to these developments, Straits Interactive, a regional provider specializing in data protection and AI governance training, highlighted that the incident underscores the risks of exposing sensitive material through the use of free, freemium, or AI-embedded tools in the workplace. “Many corporate users assume their data remains private simply because they are using a trustworthy brand or because the AI feature appears as a ‘free add-on.’ That assumption is dangerous,” stated Kevin Shepherdson, CEO of Straits Interactive. “If your employees are using free AI tools or apps that have new untested AI features, your confidential data may already be training someone else’s model.”

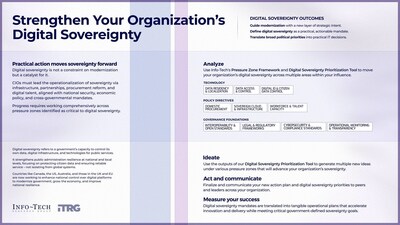

Shepherdson emphasized that the issue should not be regarded merely as a software glitch but rather as a failure of governance. He noted that all interactions with AI systems should be viewed as data-sharing events that can have long-term implications. Straits Interactive expressed concerns about the risks associated with employees inputting contracts, financial data, or client information into AI-enabled tools that have not received approval from legal or IT departments.

The organization also pointed to data flows embedded in everyday applications, including design software, office tools, web browsers, and messaging platforms, where AI features may log or store content to improve their models. This highlights the urgent need for companies to evaluate the tools their employees use and to set strict guidelines on data sharing.

The implications of these incidents extend beyond Figma, as they reveal a broader trend within the tech landscape where privacy concerns related to AI continue to grow. As businesses increasingly integrate AI technologies into their workflows, the demand for transparent data governance is becoming more pronounced. Organizations must ensure that employees are aware of the potential risks of using AI tools and the importance of safeguarding sensitive information.

As the litigation against Figma progresses, it is likely to prompt discussions across various industries about the ethical use of AI and the handling of proprietary data. This case serves as a critical reminder for organizations to reassess their data management policies in an era where AI capabilities are rapidly evolving and becoming more pervasive in the business environment.