Machine learning is fundamentally transforming the role of quality assurance (QA) engineers, moving them away from repetitive manual tasks and into strategic positions that influence test design and risk assessment from the outset of projects. This shift enables QA engineers to engage in higher-level responsibilities such as test strategy, data analysis, and collaboration with developers and data teams, while AI tools assist in suggesting test cases, identifying unusual patterns, and pinpointing vulnerabilities in code.

The integration of machine learning has redefined the daily tasks, team structures, and career trajectories of QA engineers. They are now expected to understand the intricacies of model training, the impact of data on outputs, and the validation of results that evolve over time, marking a significant departure from traditional testing methodologies.

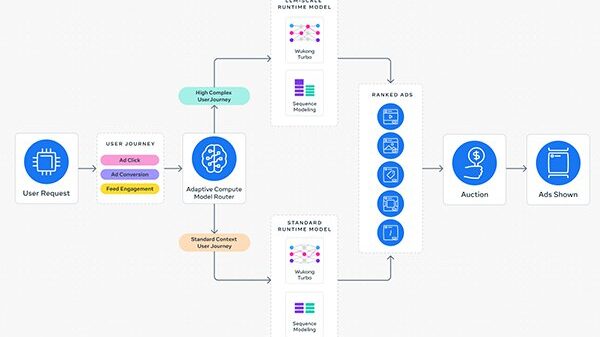

Machine learning influences how QA engineers design tests, prioritize effort, and select what to execute. Rather than relying solely on manual script writing and defect logging, they now guide data-driven systems that generate tests, identify risks, and adjust priorities as conditions change. For instance, automated test case generation uses machine learning to analyze user behavior, historical defects, and code modifications, significantly reducing the need for engineers to write each test from scratch.

Machine learning models can detect which inputs commonly lead to failures and generate relevant test cases based on these patterns. This adaptive approach keeps test coverage current as the codebase evolves and reduces the need for constant human intervention. Consequently, QA engineers can concentrate on the logic behind tests and the critical analysis of edge cases rather than on authoring every case by hand.

Test execution has also become more efficient. Instead of executing a complete suite for every build, machine learning algorithms prioritize tests based on recent code changes and historical failure data. This allows QA teams to focus on high-risk areas, expediting release cycles while reducing effort spent on lower-impact cases. Additionally, some platforms analyze prior runs to identify gaps in test coverage, suggesting new paths that require validation.
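One way to picture this kind of prioritization is a simple scoring heuristic. The sketch below is an illustration under stated assumptions, not any specific platform's method: the `TestRecord` fields, the `prioritize` function, the `change_weight` value, and the sample data are all hypothetical, and a real system would likely learn the weighting from data rather than fix it by hand.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class TestRecord:
    """Hypothetical summary of one test's history and coverage."""
    name: str
    covered_files: FrozenSet[str]  # source files the test exercises
    runs: int                      # past executions
    failures: int                  # past failures


def prioritize(tests: Iterable[TestRecord],
               changed_files: Iterable[str],
               change_weight: float = 0.7) -> List[TestRecord]:
    """Order tests so those touching changed code and failing often run first."""
    changed = set(changed_files)

    def score(test: TestRecord) -> float:
        failure_rate = test.failures / test.runs if test.runs else 0.0
        # Share of the changed files that this test covers.
        overlap = len(test.covered_files & changed) / len(changed) if changed else 0.0
        return change_weight * overlap + (1 - change_weight) * failure_rate

    return sorted(tests, key=score, reverse=True)


# Illustrative data only; none of these numbers come from a real project.
suite = [
    TestRecord("test_login", frozenset({"auth.py"}), runs=100, failures=2),
    TestRecord("test_checkout", frozenset({"cart.py", "payment.py"}), runs=100, failures=10),
    TestRecord("test_profile", frozenset({"profile.py"}), runs=100, failures=1),
]
ordered = [t.name for t in prioritize(suite, changed_files=["payment.py"])]
print(ordered)
```

A team could run only the top portion of the ordered list on each build and keep the full suite for nightly runs.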

Machine learning also plays a crucial role in predictive bug detection. By examining commit history, defect logs, and code complexity, predictive models can identify patterns indicative of potential defects. For example, a module with frequent changes and a high defect rate may be flagged as high-risk, prompting QA teams to implement targeted tests and deeper reviews. Engineers can then plan their testing around anticipated weak points, shifting their work from reactive to proactive.
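To make the idea of a predictive defect model concrete, here is a minimal sketch using logistic regression written in plain Python. It assumes each module is described by three features scaled to the range 0 to 1 (change frequency, past defect rate, code complexity). The feature choice, the function names, and the tiny training set are made up for illustration, and a production model would need far more data and proper evaluation.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(rows: Sequence[Sequence[float]],
                   labels: Sequence[int],
                   epochs: int = 2000,
                   lr: float = 0.1) -> Tuple[List[float], float]:
    """Fit logistic regression with batch gradient descent."""
    n_features = len(rows[0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(rows, labels):
            error = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x))) - y
            for j, xi in enumerate(x):
                grad_w[j] += error * xi
            grad_b += error
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias


def predict_risk(weights: Sequence[float], bias: float, x: Sequence[float]) -> float:
    """Estimated probability that a module is defect-prone."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Made-up training data: (change frequency, past defect rate, complexity).
history = [(0.9, 0.8, 0.7), (0.8, 0.6, 0.9), (0.7, 0.9, 0.5),
           (0.2, 0.1, 0.3), (0.1, 0.2, 0.2), (0.3, 0.1, 0.1)]
had_defect = [1, 1, 1, 0, 0, 0]

weights, bias = train_logistic(history, had_defect)
busy_module = predict_risk(weights, bias, (0.85, 0.7, 0.8))
quiet_module = predict_risk(weights, bias, (0.15, 0.1, 0.2))
print(f"busy: {busy_module:.2f}, quiet: {quiet_module:.2f}")
```

Modules whose predicted risk crosses a chosen threshold could then be queued for targeted tests and deeper review.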

Dynamic risk-based testing has evolved similarly, moving from reliance on expert judgment to incorporating data signals such as user traffic and defect density. Features are scored based on their likelihood of failure and their business impact, enabling QA engineers to prioritize high-scoring areas for in-depth testing while applying lighter checks on low-risk features. The scores are updated continuously as new data arrives, so the testing strategy adapts to current conditions.
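The scoring described above can be sketched as likelihood multiplied by impact, a common convention in risk-based testing. The example below is one possible reading, not a prescribed formula: the `Feature` fields, the `deep_threshold` cutoff, and the sample values are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class Feature:
    """Hypothetical risk inputs for one product feature, both in the range 0..1."""
    name: str
    failure_likelihood: float  # e.g. derived from defect density
    business_impact: float     # e.g. derived from user traffic


def risk_score(feature: Feature) -> float:
    return feature.failure_likelihood * feature.business_impact


def plan_testing(features: Iterable[Feature],
                 deep_threshold: float = 0.25) -> Dict[str, str]:
    """Map each feature to a testing depth, highest risk first."""
    ranked = sorted(features, key=risk_score, reverse=True)
    return {
        f.name: "in-depth" if risk_score(f) >= deep_threshold else "light"
        for f in ranked
    }


# Illustrative values only.
features = [
    Feature("settings", failure_likelihood=0.1, business_impact=0.2),
    Feature("checkout", failure_likelihood=0.6, business_impact=0.9),
    Feature("search", failure_likelihood=0.5, business_impact=0.4),
]
plan = plan_testing(features)
print(plan)
```

Re-running `plan_testing` whenever the inputs are refreshed is what keeps the plan in step with new data.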

As QA engineers navigate this landscape shaped by machine learning, their responsibilities have expanded to include the interpretation of complex results generated by AI tools. They must analyze risk scores, anomaly alerts, and test impact reports, going beyond simple pass/fail evaluations. This requires an understanding of model performance over time, as engineers track the correlation between predicted and actual defects and address any discrepancies.
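Tracking how well predictions match reality can start with two standard measures, precision and recall. The sketch below assumes predictions and outcomes are recorded as sets of module names per release. The function name and the sample module names are hypothetical.

```python
from typing import Set, Tuple


def precision_recall(predicted: Set[str], actual: Set[str]) -> Tuple[float, float]:
    """Compare modules flagged as risky with modules where defects were found.

    precision: share of flagged modules that really had defects
    recall:    share of defective modules that were flagged in advance
    """
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    return precision, recall


# Illustrative release data only.
flagged = {"auth", "cart", "search"}
defective = {"cart", "search", "profile", "billing"}

precision, recall = precision_recall(flagged, defective)
missed = sorted(defective - flagged)  # defects the model did not anticipate
print(f"precision={precision:.2f} recall={recall:.2f} missed={missed}")
```

A falling recall from one release to the next is one signal that the model needs retraining or new input data.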

Collaboration with data science teams has become pivotal, with QA engineers contributing to defining test data needs and validating model outputs. Their insights into product rules, user flows, and defect trends facilitate the training of machine learning models, ensuring that they produce reliable results. This teamwork fosters a synthesis of software testing expertise and foundational machine learning knowledge, which is becoming increasingly essential in the modern development environment.

The evolving landscape necessitates continuous learning for QA engineers, who must adapt to advancements in automation frameworks and AI-assisted tools. Understanding fundamental machine learning concepts, such as supervised models and data labeling, has become vital. Engineers are also exploring prompt design to better guide AI test generation tools, enhancing the quality of test cases and defect reports.

In conclusion, machine learning has reshaped the QA engineering landscape, enabling professionals to shift from manual test scripting to strategic data analysis and risk management. As AI tools enhance testing efficiency and predictability, the human element remains critical, guiding decision-making and ensuring product quality. Teams that successfully integrate machine learning in QA not only streamline their daily operations but also position themselves to add significant value in a fast-evolving software ecosystem.
