A 14-year-old boy died by suicide after developing an emotionally intimate relationship with an artificial intelligence chatbot on Character.AI. His mother's lawsuit highlights growing concerns about the impact of AI companionship on vulnerable users, and the case has fueled calls for stronger protections for minors and clearer disclosures about the nature of AI systems.

In January, Google and Character.AI announced that they had reached a mediated settlement in the case, which marked an early legal flashpoint in the evolving debate over AI companionship. As AI becomes more embedded in people's personal lives, society must grapple with difficult questions about emotional dependency and the role of technology in human connection.

Some dismiss AI intimacy as a mere quirk of modern life, but experts suggest it reflects deeper societal problems. The real question, they argue, is not whether AI companionship is “real,” but what conditions have led people, especially young people, to seek solace in algorithms rather than in human relationships. The answer points to a troubling picture of alienation and lost connection in contemporary society.

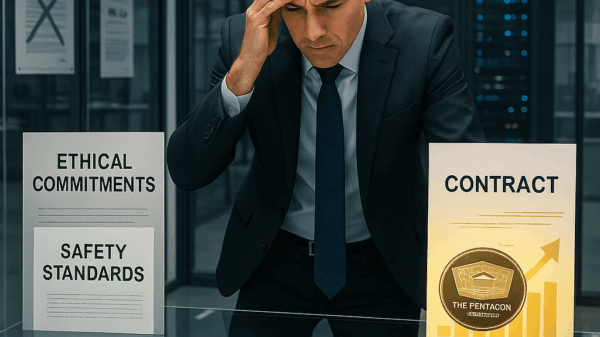

Dr. Shiv Kumar Goel, a board-certified physician and founder of Prime Vitality Wellness, emphasizes that love is about accountability, commitment, and mutual care. He warns that large language models lack the capacity to carry the ethical weight necessary for meaningful relationships. “If you’re ‘in love’ with an AI companion, you’re in love with a business product, not a human being,” he states, pointing to the inherent risks in such dynamics.

Psychotherapist Esther Perel has made a similar point, noting that the frictionless nature of AI relationships strips away the complexity that fosters character development and emotional maturity. Uncertainty and the possibility of rupture are integral to human love; AI offers a sanitized version that may be appealing but lacks real depth.

However, experts caution against blaming AI alone for the loneliness crisis, arguing that AI merely reflects fractures that already existed in society. A lack of trust between children and parents, students and teachers, or congregants and clergy is leading people to seek low-friction connection with AI, especially when honesty feels risky in their human relationships.

As AI companionship becomes more common, the message from experts is clear: society must act proactively. A first step is to recognize AI intimacy as a significant structural issue rather than a trivial personal choice. Addressing the emotional needs of adolescents who may turn to chatbots for connection is crucial to mitigating the risks.

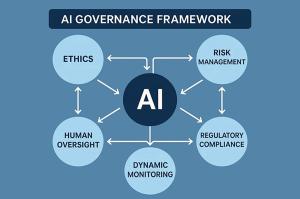

To this end, experts call for protective measures, particularly for minors. These include stringent disclosures about the capabilities and limitations of AI systems, as well as restrictions on design choices that could foster emotional dependency. The goal is not to eliminate the technology but to ensure that simulated presence is not mistaken for a safe relationship.

Moreover, rebuilding community connections is essential. Local institutions such as schools, faith communities, and neighborhood organizations must foster environments where individuals can express vulnerabilities without fear of judgment. These spaces should prioritize psychological safety, enabling open dialogue about despair and uncertainty, thus reducing the allure of AI companionship.

As society continues to navigate this complex landscape, it becomes increasingly clear that AI has not created the crisis of love and connection; rather, it has illuminated existing vulnerabilities. By addressing these underlying issues, we can foster a healthier relationship between technology and human connection, where AI serves as a tool rather than a substitute for genuine interaction.
