New assessments of Chinese artificial intelligence models reveal significant distortions related to global issues, particularly the situation in Ukraine. The Estonian Foreign Intelligence Service’s 2026 International Security Report highlights how the open-source AI model DeepSeek embeds Chinese propaganda while omitting critical information when addressing matters of security relevant to Estonia. This report is part of a broader investigation into the influence and content shaping of Chinese-developed AI systems.

Recent audits conducted by the non-profit Policy Genome and a study funded by the Swedish Psychological Defence Agency emphasize how prominent Chinese models like DeepSeek, Alibaba’s Qwen family, and Moonshot’s Kimi are subject to content controls that extend beyond China’s domestic political landscape. Initial scrutiny of these AI models primarily focused on politically sensitive topics within China, such as the Tiananmen Square crackdown and issues regarding Taiwan and human rights. However, the new analyses indicate a concerning trend of information distortion tied to international events, notably the Russian invasion of Ukraine.

The Estonian report revealed that when DeepSeek was queried about the Ukraine war, it often included unprompted remarks aligning with Chinese official positions. For example, when asked about the Bucha atrocities, DeepSeek acknowledged international concerns in a vague manner but emphasized that “China has consistently supported peace and dialogue.” Similarly, the Policy Genome audit found that while responses in English and Ukrainian from DeepSeek were generally accurate, Russian-language replies often echoed Kremlin narratives or introduced misleading information. This discrepancy underscores the importance of the language used when querying these models, as it can lead to vastly different outputs.

Additionally, the audits uncovered directives within these models that guide their responses. DeepSeek was found to avoid common Communist Party taboos, while Qwen was instructed to maintain a “positive and constructive” tone regarding China and to remain “neutral and objective” when discussing various countries, including the United States. This manipulation raises concerns about the transparency and objectivity of AI systems that can influence public discourse.

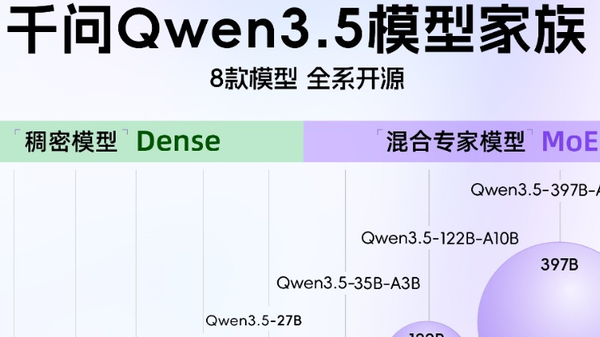

The implications of these findings extend to the applications built on these Chinese AI models. With their open-source nature, Chinese models are increasingly adopted by developers globally, often without full awareness of the content controls they carry. The Swedish-funded study noted that Alibaba’s Qwen models alone were downloaded over 9.5 million times in a mere two-month period and were foundational to approximately 2,800 derivative models, including applications for legal research in Brazil and chatbots tailored for Ugandan languages.

Despite attempts to retrain these models to reduce China-specific biases, the study’s authors concluded that none of the ten tested models, which included both original Chinese and derivative systems, were entirely free from Chinese government influence. This influence manifested across languages spoken by billions, including English, Chinese, Japanese, Russian, and Hindi.

Furthermore, the cybersecurity implications of exporting Chinese AI technologies cannot be overlooked. The Estonian report noted that DeepSeek provided polished assurances about the safety of Chinese technology while failing to mention documented incidents of hacking or cyber-espionage associated with Chinese entities. Some versions of these models, including DeepSeek and Qwen, were also found to be vulnerable to “jailbreaking,” which makes it easier to generate harmful content, such as material relating to weapons or controlled substances.

These developments are not coincidental; they reflect a systematic approach to leveraging AI technology to expand China’s influence in the global information landscape. Chinese authorities have articulated a strategy that views AI exports as a tool for enhancing their global narrative and strengthening their position in international discussions. This approach includes promoting open-source technology to accelerate development and adoption, especially in the Global South, and seeking to “command greater discourse power on the international stage.”

In light of these findings, experts underscore the necessity for urgent responses from democratic nations. They advocate for increased transparency among developers regarding the risks associated with Chinese AI models. Strengthening regulatory frameworks and requiring disclosures about foundational models could help mitigate the spread of embedded biases and enhance information integrity. As AI technology reshapes the information environment, addressing these challenges is critical to maintaining open inquiry and protecting democratic values globally.
