In a significant alliance, OpenAI, Anthropic, and Google are collaborating to address growing concerns over alleged distillation attacks by their Chinese competitors. This partnership aims to combat a tactic in the artificial intelligence industry where smaller models are trained on the outputs of larger, pre-trained models without permission. The repercussions of such actions have prompted these tech giants to unite and proactively share strategies for counteracting these adversarial practices.

The collaboration emerged from accusations by OpenAI and Anthropic against several Chinese startups, including DeepSeek, which have purportedly engaged in distilling their models. In response to these allegations, the three companies are coordinating through the Frontier Model Forum, an industry body they founded with Microsoft in 2023, to raise awareness and develop collective strategies against these threats. Distillation without consent is viewed as a serious violation, prompting this unprecedented cooperation among major players in the AI sector.

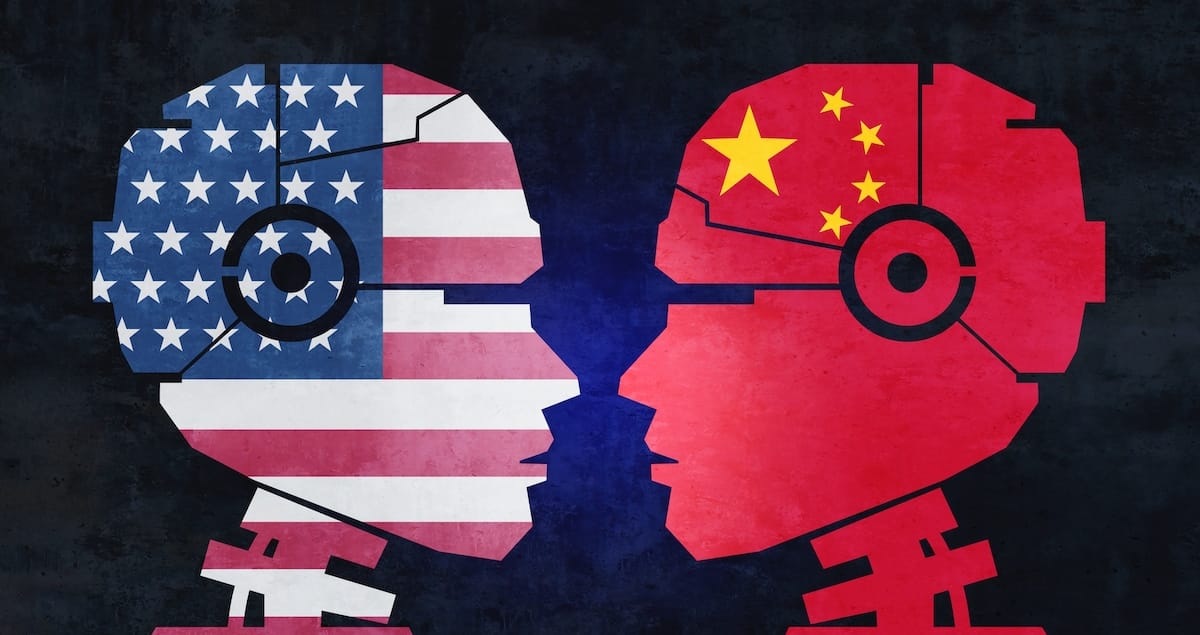

With the geopolitical landscape increasingly defined by competition between the United States and China, this partnership may signal a pivot in how the AI industry operates, emphasizing national security interests over innovation. The urgency of the situation has led to heightened cooperation among tech leaders, who are now prioritizing collective defense against potential security threats posed by unauthorized distillation.

The rise of Chinese AI startup DeepSeek in early 2025 exemplifies the challenges faced by U.S. tech companies. Its DeepSeek-R1 model achieved performance comparable to that of models from established American firms, but at a significantly lower cost. This unexpected success triggered a sharp decline in U.S. tech stock values, raising concerns among major players about maintaining their competitive edge. OpenAI’s CEO, Sam Altman, has accused DeepSeek of “inappropriately” using the capabilities of its models to bolster its own offerings, intensifying scrutiny of distillation practices in the industry.

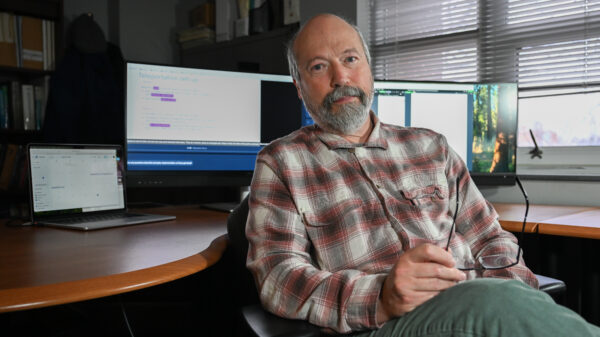

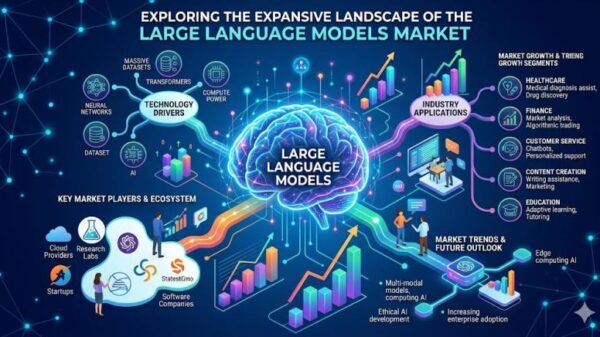

According to the Frontier Model Forum, distillation attacks involve malicious actors training smaller models on the outputs of larger models by manipulating prompts to elicit specific responses. This technique allows competitors to gain insights into how a large model operates, effectively replicating its reasoning processes and capabilities without compensating the original developers. Such practices have raised alarm bells among U.S. tech leaders, who are now vocal in their criticism of DeepSeek and similar startups.

OpenAI and Anthropic have strengthened their claims, with Altman reiterating that DeepSeek continues to exploit the advancements made by American AI laboratories. Anthropic has broadened the accusation, identifying several Chinese AI labs, including Moonshot and MiniMax, as responsible for distilling its Claude models. While Google has not specified its adversaries, it has reported a rise in distillation attacks on its models, indicating a widespread issue that transcends individual companies.

This newfound collaboration among OpenAI, Anthropic, and Google represents a shift in the competitive landscape. As these organizations unite against a common adversary, the narrative in the AI sector is rapidly evolving into one characterized by nationalism and strategic alliances. This approach may not only reshape their individual business strategies but also influence the broader AI ecosystem as firms adapt to the realities of international competition.

The partnership comes at a time when the United States’ position in the global AI race is increasingly tenuous. As nations invest in their own technological initiatives, the risk of falling behind looms large. Reports indicate that while the U.S. remains a leader in AI development, China is making significant strides, particularly in fields like humanoid robotics. The implications of these advancements extend into national security, as companies like Anthropic have restricted the release of their models due to concerns over potential misuse.

As the partnership progresses, it may pave the way for more stringent security measures, reflecting a shift away from open-access models to more controlled environments. Anthropic’s Project Glasswing exemplifies this trend, as the company seeks to conduct limited releases of its Mythos model among select partners to mitigate risks. By restricting access to powerful AI tools, these firms aim to safeguard their innovations from being exploited by competitors.

Stricter regulations may also emerge as a response to the distillation threat. Recent bipartisan legislative efforts in Congress aim to enhance U.S. export controls, targeting technologies that could further empower adversarial nations. If these initiatives gain traction, they could fundamentally alter the relationship between American tech companies and their Chinese counterparts, potentially curtailing collaboration and innovation in the sector.

In conclusion, the alliance between OpenAI, Anthropic, and Google may signal a transformative moment in the AI industry, as companies reassess their strategies in light of security concerns. The emphasis on collaboration among major players could lead to a more fragmented landscape, where access to cutting-edge technologies is carefully controlled to mitigate risks. As the AI race intensifies, the balance between innovation and national security will likely remain a critical area of focus for stakeholders across the globe.
