The generative AI landscape is evolving rapidly, and not every new development comes from the expected direction. One surprise comes from Runway, a company known for high-end video synthesis, which has recently launched Runway Characters. The new offering marks a significant pivot into interactive, real-time AI assistants and signals Runway’s ambition to redefine how users interact with digital environments.

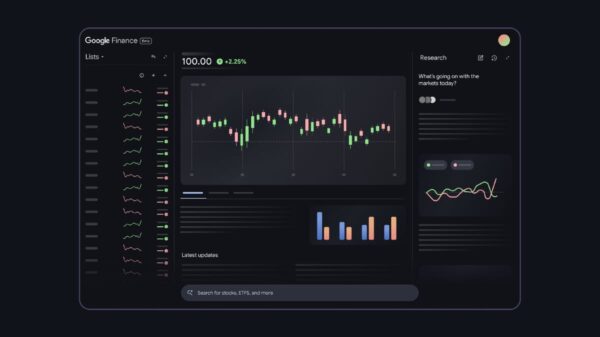

The core technology behind Runway Characters is GWM-1, touted as a world model capable of understanding the context of virtual worlds and their characters. Early tests, however, suggest that the promise does not fully match reality. The real-time capabilities are commendable, but the experience often feels like a sophisticated assembly of separate technologies rather than a single unified world model. The system appears to process user intent through a fast Large Language Model (LLM), pass the result to a diffusion model that generates the visuals, and then rely on optimizations such as pre-rendered buffers to keep playback smooth.
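The staged flow described above can be illustrated with a toy sketch. This is not Runway's implementation and says nothing about how GWM-1 works internally: `fake_llm`, `fake_renderer`, `FrameBuffer`, and `run_turn` are hypothetical stand-ins, and frames are plain strings. It only shows the general shape of an LLM stage feeding a renderer stage, with a buffer that falls back to pre-rendered idle frames when nothing new is queued.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class FrameBuffer:
    """Queue of ready-to-show frames with a pre-rendered idle loop as fallback."""
    idle_frames: list[str]
    _queue: deque = field(default_factory=deque)
    _idle_index: int = 0

    def push(self, frames: list[str]) -> None:
        # Newly generated frames are appended behind anything already queued.
        self._queue.extend(frames)

    def next_frame(self) -> str:
        # Prefer generated frames; cycle through the idle loop when the queue is empty.
        if self._queue:
            return self._queue.popleft()
        frame = self.idle_frames[self._idle_index % len(self.idle_frames)]
        self._idle_index += 1
        return frame


def fake_llm(user_text: str) -> str:
    """Hypothetical stand-in for the fast LLM stage: returns a canned reply."""
    return f"You said: {user_text}"


def fake_renderer(reply: str, frames_per_word: int = 2) -> list[str]:
    """Hypothetical stand-in for the diffusion stage: a few labeled frames per word."""
    return [f"talk:{word}:{i}" for word in reply.split() for i in range(frames_per_word)]


def run_turn(user_text: str, buffer: FrameBuffer, display_ticks: int) -> list[str]:
    """Run one conversational turn and return the frames shown over display_ticks."""
    reply = fake_llm(user_text)
    buffer.push(fake_renderer(reply))
    return [buffer.next_frame() for _ in range(display_ticks)]
```

Calling `run_turn("hi", FrameBuffer(idle_frames=["idle:0", "idle:1"]), 8)` yields six talking frames followed by two idle frames. In a design like this, the switch from generated to idle frames is a hard cut unless something blends the two.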

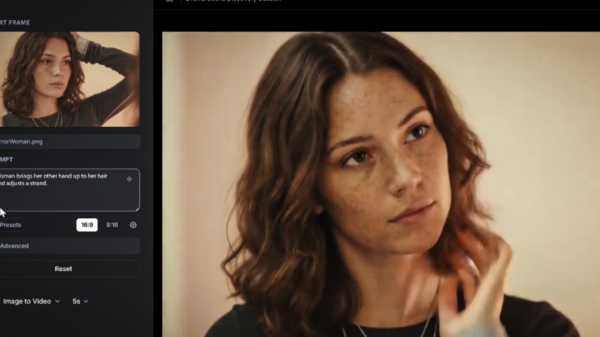

The video output is impressive and represents a notable advance over current real-time solutions. Nonetheless, users may notice some inconsistencies. When avatars stop speaking, for example, they often snap back abruptly to a default idle animation, exposing the limits of continuous synthesis. In scenarios that demand photorealism, avatars may also exhibit erratic eye movements, a common artifact of the distillation techniques used to make models efficient. The technology surpasses many of its contemporaries, but it still falls short of the ideal of true AI cinema.

Seamless Customization and Motion Capture

Runway Characters distinguishes itself not just through its underlying technology but through its user-centric design. The platform emphasizes customization, allowing users to create a basic avatar from a single input image. This greatly simplifies the creation process compared to other tools, which often require extensive datasets and complex setups, and it lowers the barrier for anyone who wants to build an interactive assistant.

Customization options are extensive, enabling users to tailor avatars to their needs. Users can define an avatar's appearance from an image, select from a library of voice profiles, and assign core personality traits through customizable system prompts. A standout feature is the ability to connect a custom knowledge base to the character, which turns it from a simple chatbot into an interactive wiki that can embed contextual information in a website or application.
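To make the four ingredients above concrete (image, voice, system prompt, knowledge base), here is a minimal sketch of how such a character could be modeled. It is not Runway's API: `CharacterConfig`, `retrieve`, and `build_prompt` are hypothetical names, and the word-overlap lookup is a deliberately naive placeholder for whatever retrieval a real knowledge-base integration would use.

```python
from dataclasses import dataclass, field


@dataclass
class CharacterConfig:
    """Hypothetical container for the settings the article lists."""
    image_path: str                      # single input image for the avatar
    voice: str                           # name of a voice profile
    system_prompt: str                   # core personality traits
    knowledge_base: list[str] = field(default_factory=list)  # plain-text snippets


def retrieve(config: CharacterConfig, question: str, top_k: int = 1) -> list[str]:
    """Rank knowledge-base snippets by naive word overlap with the question."""
    q_words = set(question.lower().split())
    scored = [
        (len(q_words & set(snippet.lower().split())), snippet)
        for snippet in config.knowledge_base
    ]
    # Keep only snippets that share at least one word, best match first.
    ranked = sorted((p for p in scored if p[0] > 0), key=lambda p: p[0], reverse=True)
    return [snippet for _, snippet in ranked[:top_k]]


def build_prompt(config: CharacterConfig, question: str) -> str:
    """Combine personality, retrieved context, and the user's question into one prompt."""
    parts = [config.system_prompt]
    context = retrieve(config, question)
    if context:
        parts.append("Context:\n" + "\n".join(context))
    parts.append("User: " + question)
    return "\n\n".join(parts)
```

With a knowledge base holding a returns policy and a shipping note, asking "How long does shipping take?" places only the shipping note in the prompt. That is the basic idea behind a character that answers from site-specific content rather than from general knowledge alone.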

Runway’s proactive approach to engaging developers is evident in its latest strategy: an offer of 30 minutes of talk time for testing. This lets users try the system firsthand at https://dev.runwayml.com/, an uncommon gesture aimed at gathering user feedback and building market presence ahead of potential competition. The move reflects Runway’s intent to establish itself firmly in the interactive digital assistant landscape.

As the field of generative AI advances, the developments from Runway offer a glimpse into the potential future of interactive digital environments. There are still hurdles to overcome, particularly in achieving seamless realism, but Runway Characters represents a notable step forward. As the company continues to refine its technology, the broader implications for user engagement and AI capabilities could reshape how individuals and businesses interact with digital worlds.
