NVIDIA unveiled its latest AI initiative, the NVIDIA Vera Rubin platform, during the ongoing GTC event, announcing that seven new chips are now in full production. The platform aims to scale the world’s largest AI factories with a configurable infrastructure optimized for each phase of AI: pretraining, post-training, test-time scaling, and real-time agentic inference.

The Vera Rubin platform integrates a comprehensive suite of components, including the NVIDIA Vera CPU, NVIDIA Rubin GPU, NVIDIA NVLink™ 6 Switch, NVIDIA ConnectX®-9 SuperNIC, NVIDIA BlueField®-4 DPU, and NVIDIA Spectrum™-6 Ethernet switch. According to Jensen Huang, founder and CEO of NVIDIA, this generational leap encompasses seven breakthrough chips and five racks working in unison as a powerful AI supercomputer. “The agentic AI inflection point has arrived with Vera Rubin kicking off the greatest infrastructure buildout in history,” Huang stated.

Dario Amodei, CEO and cofounder of Anthropic, emphasized the growing complexity of AI tasks, noting that enterprises increasingly rely on sophisticated reasoning and agentic workflows for critical decisions. “NVIDIA’s Vera Rubin platform gives us the compute, networking, and system design to keep delivering while advancing the safety and reliability our customers depend on,” he remarked. Similarly, Sam Altman, CEO of OpenAI, pointed out that with the Vera Rubin platform, his organization can run more powerful models at scale, promising faster and more reliable systems for millions.

The AI infrastructure landscape is evolving rapidly, transitioning from discrete chips and standalone servers to fully integrated rack-scale systems and POD-scale deployments. These advancements are driving enhanced performance while improving cost efficiency across diverse industries, from startups to large enterprises. The democratization of AI technology is also seen as a significant benefit, allowing broader access while enhancing energy efficiency to handle demanding workloads.

The NVIDIA Vera Rubin NVL72 Rack is a standout feature of the platform, integrating 72 Rubin GPUs and 36 Vera CPUs linked by NVLink 6, along with ConnectX-9 SuperNICs and BlueField-4 DPUs. Compared with the NVIDIA Blackwell platform, the rack is designed to train large mixture-of-experts models with only a quarter of the GPUs and to deliver up to 10 times higher inference throughput per watt. Optimized for hyperscale AI factories, the NVL72 scales out over NVIDIA Quantum-X800 InfiniBand and Spectrum-X Ethernet, which is intended to raise GPU cluster utilization and reduce training time and total cost.

In addition to the NVL72, the NVIDIA Vera CPU Rack provides a dense, liquid-cooled infrastructure featuring 256 Vera CPUs. This configuration is essential for reinforcement learning and agentic AI workloads, unlocking scalable, energy-efficient performance. Integrated tightly with Spectrum-X Ethernet networking, these CPU racks ensure synchronization across AI factories, facilitating large-scale model testing and validation.

The introduction of the NVIDIA Groq 3 LPX Rack marks another milestone, addressing the low-latency demands of agentic systems. The LPX rack, featuring 256 LPU processors, is expected to provide up to 35 times higher inference throughput per megawatt. This design aims to maximize efficiency across power, memory, and compute, enabling ultra-premium, trillion-parameter inference capabilities.

The NVIDIA BlueField-4 STX Storage Rack provides AI-native storage infrastructure, seamlessly extending GPU memory across the POD. It is powered by BlueField-4, integrating the Vera CPU and ConnectX-9 SuperNIC. This combination is optimized for managing key-value cache data generated by large language models, significantly enhancing inference throughput and energy efficiency through the new DOCA Memos framework.

Lastly, the NVIDIA Spectrum-6 SPX Ethernet Rack aims to improve rack-to-rack connectivity with options for either Spectrum-X Ethernet or NVIDIA Quantum-X800 InfiniBand switches. Enhanced optical power efficiency and resiliency are key features, positioning the Spectrum-6 SPX to handle the high throughput required in expansive AI factories.
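For readers who want the five racks side by side, the following is a minimal Python sketch that records only the processor counts stated above. The `Rack` class, the role labels, and both helper functions are illustrative names invented here, not part of any NVIDIA software, and the 1,024-GPU Blackwell baseline in the usage note is a hypothetical figure chosen only to show the arithmetic behind the "quarter of the GPUs" claim.

```python
import math
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rack:
    """One rack type in a Vera Rubin POD (illustrative structure only)."""
    name: str
    role: str
    # Processor type -> count, filled in only where the article gives a number.
    processors: dict = field(default_factory=dict)


RACKS = [
    Rack("Vera Rubin NVL72", "training and inference",
         {"Rubin GPU": 72, "Vera CPU": 36}),
    Rack("Vera CPU Rack", "reinforcement learning and agentic workloads",
         {"Vera CPU": 256}),
    Rack("Groq 3 LPX", "low-latency inference", {"LPU": 256}),
    Rack("BlueField-4 STX", "key-value cache storage"),
    Rack("Spectrum-6 SPX", "rack-to-rack networking"),
]


def gpus_per_cpu(rack: Rack) -> float:
    """Ratio of Rubin GPUs to Vera CPUs in a rack that contains both."""
    return rack.processors["Rubin GPU"] / rack.processors["Vera CPU"]


def rubin_gpus_for(blackwell_gpus: int, fraction: float = 0.25) -> int:
    """GPU count implied by the 'a quarter of the GPUs' training claim.

    The fraction is the article's best-case figure, not a measurement.
    """
    return math.ceil(blackwell_gpus * fraction)
```

Under these assumptions, `gpus_per_cpu(RACKS[0])` gives two Rubin GPUs per Vera CPU in the NVL72, and `rubin_gpus_for(1024)` gives 256, meaning a training job sized for a hypothetical 1,024 Blackwell GPUs would need 256 Rubin GPUs if the quoted best case held.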

NVIDIA also announced the DSX platform for Vera Rubin, which includes DSX Max-Q for dynamic power provisioning, allowing for a significant increase in AI infrastructure deployment efficiency. This will enable AI factories to flexibly utilize energy sources, thereby unlocking considerable stranded grid power potential.

With broad ecosystem support, Vera Rubin-based products are set to become available from several leading cloud providers, including Amazon Web Services, Google Cloud, and Microsoft Azure, in the latter half of this year. Global system manufacturers such as Cisco and Dell Technologies are expected to offer a range of servers built on the new platform, while AI labs, including Anthropic and OpenAI, are keen to leverage these advancements to train larger, more capable models.

The advent of the NVIDIA Vera Rubin platform represents a significant leap forward in AI infrastructure, heralding a new era of computational capabilities and efficiencies. As industry leaders look to harness these technologies, the implications for AI development and deployment are profound, promising to reshape the landscape of artificial intelligence.

See also Everpure Unveils Advanced AI Storage Solutions with 400M IOPS Performance at GTC

Everpure Unveils Advanced AI Storage Solutions with 400M IOPS Performance at GTC AI-Driven Defense Technologies Face ITAR Compliance Challenges, Impacting Export Controls

AI-Driven Defense Technologies Face ITAR Compliance Challenges, Impacting Export Controls Nvidia Announces Trillion-Dollar AI Sales Forecast, Unveils New Groq Chips and CPUs

Nvidia Announces Trillion-Dollar AI Sales Forecast, Unveils New Groq Chips and CPUs Nvidia Reveals $1-Trillion AI Chip Sales Target as Inference Demand Soars

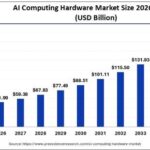

Nvidia Reveals $1-Trillion AI Chip Sales Target as Inference Demand Soars AI Computing Hardware Market Set to Expand from $45.51B to $172.15B by 2035 at 14.23% CAGR

AI Computing Hardware Market Set to Expand from $45.51B to $172.15B by 2035 at 14.23% CAGR