Google DeepMind is developing an “AI co-clinician” designed to assist doctors in patient care. Initial simulation studies show promising results, though the system does not yet match the performance of experienced physicians. The research also points to limitations of ChatGPT’s voice mode for serious applications such as medical consultations.

The AI co-clinician operates within a framework termed “triadic care,” in which AI agents support patients under the supervision of doctors, who retain clinical authority. This collaborative approach aims to improve patient treatment while keeping oversight in the hands of qualified medical professionals.

To assess the system from a clinician’s viewpoint, researchers collaborated with academic physicians to implement the NOHARM framework, which evaluates two categories of mistakes: errors of commission (doing something that should not have been done) and errors of omission (leaving out something that should have been done). In a blind comparison involving 98 primary care queries, doctors favored the AI co-clinician’s responses over leading evidence synthesis tools. The AI co-clinician was preferred in 67 cases compared with 26 for an existing clinical AI system, and it beat GPT-5.4-thinking-with-search 63 to 30. Notably, the AI co-clinician made a critical error in only one of the 98 cases evaluated.
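To put the preference counts on a common scale, the short Python sketch below converts them into shares of the 98 queries. It assumes that both head-to-head counts are out of the same 98 queries; the label “unattributed” for the remainder is ours, since the article does not say whether those cases were ties or something else.

```python
# Figures quoted in the article. Treating 98 as the denominator for both
# comparisons is an assumption, not something the article states outright.
TOTAL_QUERIES = 98

# (co-clinician preferred, rival preferred) for each head-to-head comparison
comparisons = {
    "existing clinical AI system": (67, 26),
    "GPT-5.4-thinking-with-search": (63, 30),
}


def preference_summary(wins, losses, total=TOTAL_QUERIES):
    """Return both preference shares (percent) and the count credited to neither."""
    return {
        "co_clinician_share": round(100 * wins / total, 1),
        "rival_share": round(100 * losses / total, 1),
        "unattributed": total - wins - losses,
    }


summaries = {name: preference_summary(w, l) for name, (w, l) in comparisons.items()}

for name, s in summaries.items():
    print(
        f"vs {name}: {s['co_clinician_share']}% to {s['rival_share']}%, "
        f"{s['unattributed']} unattributed"
    )
```

Under that assumption, the co-clinician was preferred in roughly two thirds of the queries in both comparisons, and five queries in each comparison are credited to neither system.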

The lead was particularly pronounced in medication inquiries. The RxQA benchmark, which includes 600 questions on active ingredients, interactions, and dosages sourced from national drug directories and vetted by licensed pharmacists, posed challenges for primary care physicians. With reference materials, doctors answered 61.3 percent correctly, but this dropped to 48.3 percent without external assistance. The AI co-clinician scored 73.3 percent, narrowly ahead of GPT-5.4-thinking-with-search at 72.7 percent. The gap widened when questions were posed in an open-ended format, typical of real-world searches; here, the AI co-clinician achieved a quality score of 95.0 percent, compared to 90.9 percent for OpenAI’s model.
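The size of each gap is easier to compare in percentage points. The sketch below uses only the figures quoted above; the dictionary keys are illustrative labels, and the open-ended result is a “quality score” rather than an accuracy figure, so comparing the two model gaps is a rough indication only.

```python
# RxQA figures as quoted in the article (percent). Key names are our own labels.
scores = {
    "physicians_with_references": 61.3,
    "physicians_unaided": 48.3,
    "co_clinician": 73.3,
    "gpt": 72.7,
    "co_clinician_open_ended": 95.0,
    "gpt_open_ended": 90.9,
}


def gap(a, b):
    """Difference between two quoted scores, in percentage points."""
    return round(scores[a] - scores[b], 1)


gaps = {
    "references vs unaided physicians": gap("physicians_with_references", "physicians_unaided"),
    "co-clinician vs physicians with references": gap("co_clinician", "physicians_with_references"),
    "co-clinician vs GPT": gap("co_clinician", "gpt"),
    "co-clinician vs GPT, open-ended": gap("co_clinician_open_ended", "gpt_open_ended"),
}

for label, points in gaps.items():
    print(f"{label}: {points} points")
```

On these numbers the two models are separated by 0.6 points in the standard format and 4.1 points in the open-ended format, while the co-clinician's margin over physicians using reference materials is 12.0 points.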

In addition to text-based support, Google DeepMind is exploring the AI co-clinician’s capabilities in telemedicine through real-time audio and video interactions. Partnering with physicians at Harvard and Stanford, researchers conducted a randomized simulation study involving 20 synthetic clinical scenarios and 10 doctors acting as patient representatives, for a total of 120 hypothetical telemedicine consultations. The AI co-clinician demonstrated abilities that extend beyond text-only systems, such as correcting a patient’s inhaler technique and guiding patients through shoulder exams to identify rotator cuff injuries.

In patient-facing dialogues, the AI co-clinician employs a dual-agent configuration: a “Planner” module oversees the conversation to ensure the “Talker” agent adheres to safe clinical practices. When utilized by doctors, the system emphasizes solid clinical evidence and conducts verification and citation checks during information retrieval.
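The article gives no implementation details for the Planner and Talker, so the following Python sketch shows only the general supervisor pattern that the description suggests: one component drafts a reply and another reviews it before it reaches the patient. Every class name, method, and rule here is hypothetical and is not DeepMind's design.

```python
from dataclasses import dataclass, field

# Hypothetical rule set: phrases the reviewer will not pass on unreviewed.
BLOCKED_PHRASES = ("stop taking", "double your dose")

ESCALATION_REPLY = "I'd like your doctor to weigh in on that before we go further."


class Talker:
    """Drafts the next reply. A real system would call a speech or language model."""

    def draft(self, patient_message: str) -> str:
        return f"Thanks for telling me that. Can you say more about: {patient_message}"


@dataclass
class Planner:
    """Reviews each draft and keeps a log of its decisions."""

    log: list = field(default_factory=list)

    def review(self, draft: str) -> str:
        # Toy check: hold back any draft that contains a blocked phrase.
        if any(phrase in draft.lower() for phrase in BLOCKED_PHRASES):
            self.log.append(("escalated", draft))
            return ESCALATION_REPLY
        self.log.append(("approved", draft))
        return draft


def respond(planner: Planner, talker: Talker, patient_message: str) -> str:
    """Only text that has passed the Planner's review is returned."""
    return planner.review(talker.draft(patient_message))


planner = Planner()
talker = Talker()
print(respond(planner, talker, "I have had a cough for two weeks"))
print(respond(planner, talker, "Should I stop taking my inhaler?"))
```

The point of the pattern is that the component talking to the patient never has the final say: its output is always routed through the reviewing component, which can substitute a safer reply or hand off to a clinician.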

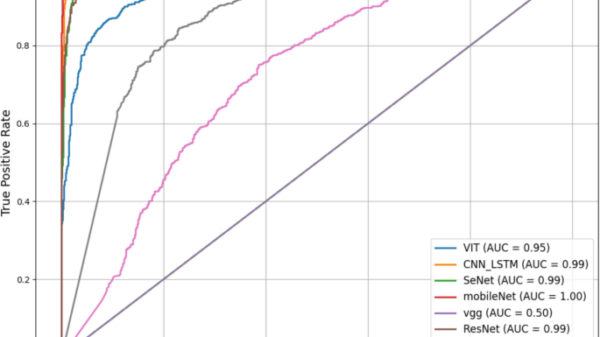

Despite these advances, the study found that experienced physicians outperformed the AI co-clinician overall across 140 assessed aspects of consultation quality, including triage, history taking, clinical reasoning, communication and counseling, treatment steps, recognizing warning signs, and conducting physical exams. The AI co-clinician matched or exceeded primary care physicians in 68 of the 140 aspects, but it lagged behind seasoned doctors elsewhere, especially in identifying critical warning signs and carrying out thorough physical examinations. OpenAI’s GPT-realtime ranked lowest across all seven evaluated domains. The researchers concluded that AI systems like this are best used as supportive tools for healthcare professionals rather than substitutes for their clinical judgment.

It is not yet clear whether this research initiative will evolve into a commercially available product. Although the results underscore progress in AI-driven evidence synthesis and telemedicine applications, a clear gap remains when compared to the expertise of experienced physicians, particularly in safety-critical scenarios. “While it’s early days, the promise is clear,” noted DeepMind researcher Alan Karthikesalingam.
