As Nigeria gears up for its general elections in 2027, concerns are intensifying over the potential misuse of artificial intelligence (AI) in the political arena. Experts warn that AI tools, particularly those used to create deepfakes and spread misinformation, could pose significant risks to the integrity of the electoral process, undermining public trust and manipulating voter perceptions in a country with a polarized political landscape.

As traditional campaigning methods give way to algorithm-driven strategies, the electoral landscape is changing rapidly. AI's capacity both to influence and to deceive makes it a powerful tool that could sway election outcomes. The threat is compounded by Nigeria's heavy reliance on social media platforms such as WhatsApp, Facebook, and X (formerly Twitter), which can serve as conduits for misinformation.

Deepfakes, or AI-generated synthetic media that convincingly portray individuals saying or doing things they have not, represent one of the most pressing dangers. These technologies could fabricate campaign speeches, concession messages, or even staged incidents of violence at polling stations. The speed and scale at which AI can generate such content complicate efforts to combat misinformation, potentially overwhelming fact-checkers and creating confusion, particularly among voters with low media literacy.

Voice cloning technology further heightens this threat. AI can mimic the voices of political figures, enabling misleading audio messages that could falsely announce election results or incite panic. Moreover, AI-powered bots and coordinated networks could amplify specific political narratives, creating the illusion of widespread support or dissent and thereby shaping public perception on a large scale.

While misinformation is the most visible threat, the implications of AI extend deeper into electoral disruptions. Analysts fear that AI could produce convincing fake documents, such as result sheets, complicating the verification of genuine electoral outcomes. Additionally, the risk of cyberattacks on electoral infrastructure, including voter databases and result transmission systems, raises alarms about the potential to disrupt the voting process itself.

As misinformation proliferates, a phenomenon known as “epistemic erosion” may occur, where voters begin to distrust all information sources, including legitimate electoral outcomes. Nigeria’s unique sociopolitical environment, characterized by ethnic and religious tensions, amplifies these risks. In a country where many citizens struggle with digital literacy and misinformation spreads rapidly through encrypted messaging platforms, the challenge is daunting.

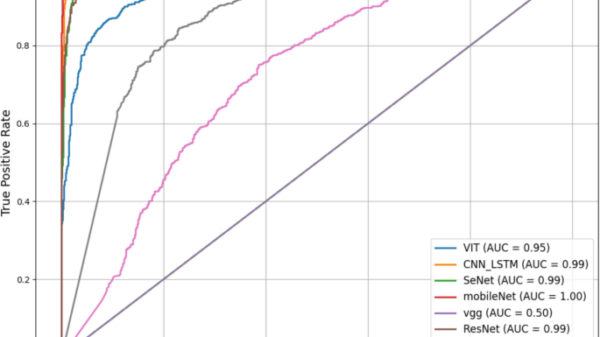

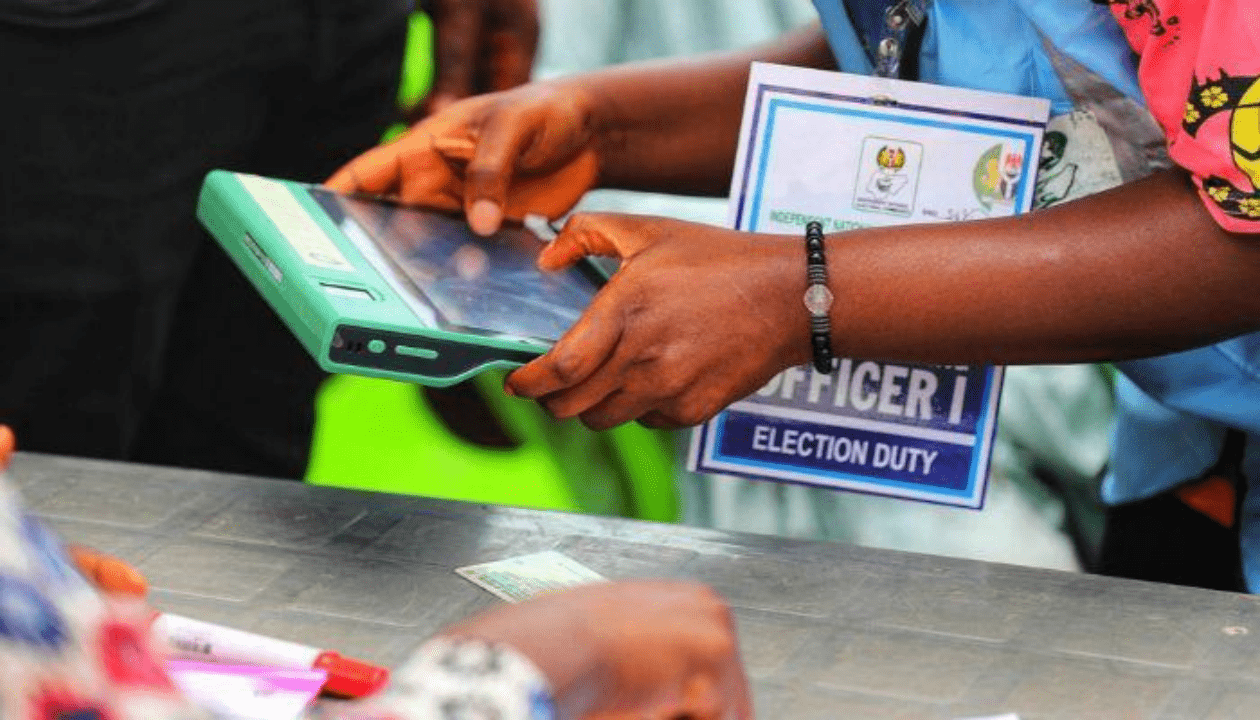

As regulatory frameworks lag behind, there is an urgent need for institutions like the Independent National Electoral Commission (INEC) and media organizations to engage AI experts to identify and counter deepfakes. Recently, INEC has initiated an AI division aimed at enhancing voter engagement and combating disinformation; however, experts caution that substantial improvements in institutional capacity and public awareness are critical to match the pace of rapidly evolving AI technologies.

Nigeria's challenges are not unique. Around the globe, recent elections have demonstrated AI's influence on democratic processes. The Cambridge Analytica scandal illustrated how data-driven manipulation can sway voter behavior. More recent incidents highlight the growing use of generative AI for electoral interference, from misleading robocalls in the United States to fabricated political endorsements in India and AI-generated speeches in Pakistan.

These global examples reveal a consistent pattern: AI is typically used not to alter vote counts directly, but to manipulate the informational landscape in which voters make decisions. In Nigeria, AI could be used to influence the 2027 elections at several stages: manipulating public opinion before the vote, spreading misinformation on election day, and undermining post-election confidence through fabricated evidence.

Addressing these multifaceted risks requires a comprehensive approach. Implementing clear regulations on AI usage in political campaigns, investing in technology to detect deepfakes, and launching nationwide digital literacy campaigns are essential steps. Strengthening institutional capabilities, particularly within the Ministry of Communications, Innovation and Digital Economy, and fostering collaboration with social media companies to identify harmful content will be pivotal in safeguarding the electoral process.

The 2027 Nigerian general elections may mark a critical juncture in the intersection of technology and democracy. While AI holds potential for improving electoral engagement, its misuse could pose grave threats to democratic integrity. Policymakers, technologists, and citizens face the philosophical challenge of preserving truth and trust in an era where reality can be manufactured. The choices made today will ultimately determine whether AI serves as a tool for democratic enhancement or as an instrument of electoral manipulation.

In summary, the lesson from global experience is clear: AI does not rig elections by itself, but it can be used by those intent on subverting democratic processes. Ensuring responsible governance of this powerful technology is imperative for the future of democracy in Nigeria and beyond.
