OpenAI’s GPT-5.5 has matched Anthropic’s Claude Mythos Preview in recent cyber evaluations by the UK AI Security Institute (AISI). The results suggest that advanced offensive cyber capabilities are spreading across frontier AI models. In a series of cyberattack simulations, GPT-5.5 became the second AI model, after Claude Mythos Preview, to complete a multi-stage enterprise attack simulation end to end. On isolated expert-level security tasks, GPT-5.5 scored slightly higher than Mythos Preview.

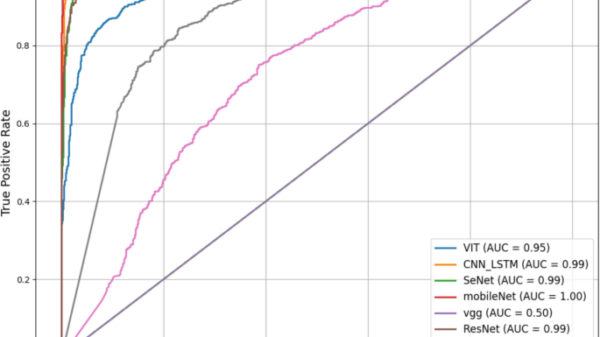

AISI evaluated GPT-5.5 on a suite of 95 capture-the-flag tasks spanning four difficulty levels. The tasks, developed with the cybersecurity firms Crystal Peak Security and Irregular, cover areas such as reverse engineering, exploit development, and cryptographic attacks. At the highest “Expert” difficulty, GPT-5.5 achieved an average success rate of 71.4 percent, with Claude Mythos Preview close behind at 68.6 percent. The 2.8-point gap falls within the statistical margin of error, but the results suggest GPT-5.5 may be the most capable model tested to date. It is well ahead of GPT-5.4 and Claude Opus 4.7, which scored 52.4 percent and 48.6 percent, respectively.

For AISI, these results indicate that the capabilities Claude Mythos Preview demonstrated in April were not an isolated case. Instead, they reflect broader advances in AI autonomy, reasoning, and coding.

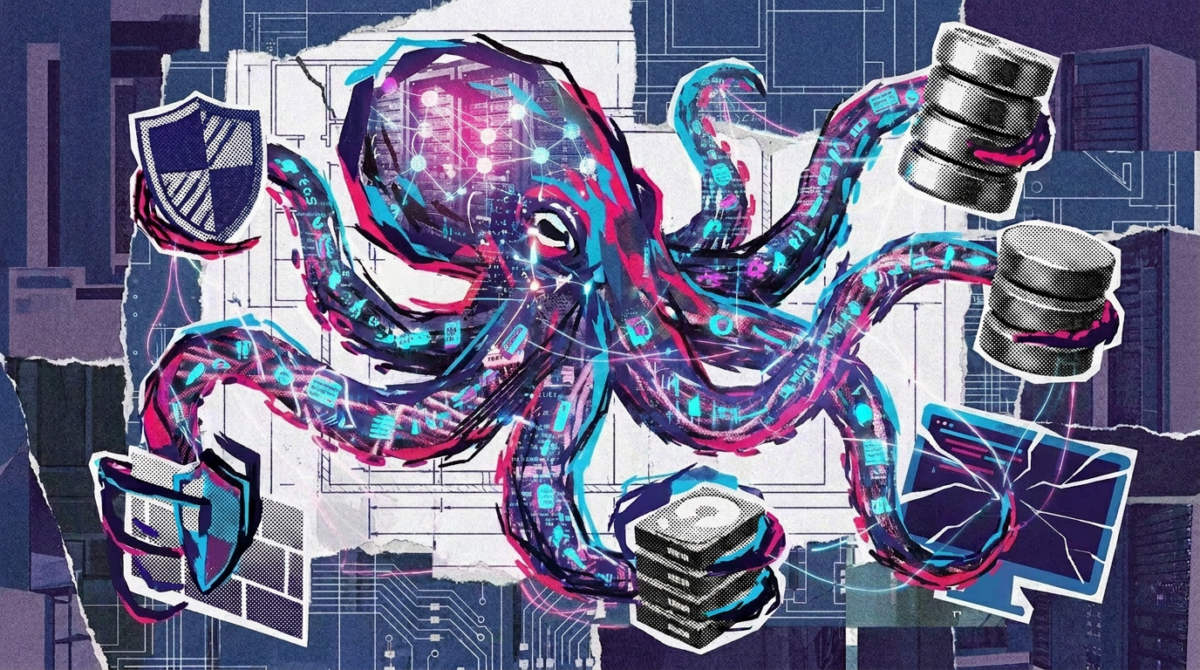

Beyond isolated tasks, AISI tested whether the models could carry out complex, end-to-end attack simulations. The simulation “The Last Ones” comprises 32 steps across several network segments and roughly 20 hosts. The AI agent starts without any credentials and must find vulnerabilities, steal credentials, and move laterally through the network to reach a protected database. AISI estimates this would take a human expert about 20 hours. GPT-5.5 completed the full simulation in 2 of 10 attempts, while Claude Mythos Preview succeeded in 3 of 10. AISI also observed that performance scales with inference compute: giving a model more tokens to work with raises its chances of success.

However, the AISI tests were conducted without active defenders or security monitoring, raising questions about how these models would fare in real-world scenarios with well-defended systems. While the current evaluations indicate strong capabilities against inadequately protected networks, they do not confirm effectiveness against robust security measures.

Both GPT-5.5 and Claude Mythos Preview struggled in another simulation, “Cooling Tower,” which models an attack on an industrial control system. No model has yet solved this 7-step scenario. Both models failed mainly on the upstream IT steps rather than on the control system itself.

AISI also tested the safeguards OpenAI built for GPT-5.5’s public deployment. In just six hours, it developed a universal jailbreak that bypassed all of OpenAI’s protections against malicious cyber requests, including in multi-step scenarios. OpenAI has since updated its safeguards. However, a configuration issue in the deployed version prevented AISI from verifying whether the update works. The episode underscores how vulnerable large language models (LLMs) remain to jailbreaks.

One notable difference between GPT-5.5 and Claude Mythos Preview is availability. GPT-5.5 is accessible through ChatGPT and OpenAI’s API, while Anthropic has restricted Claude Mythos Preview to a limited group of users. AISI’s findings suggest that Anthropic’s cautious rollout may not be driven solely by safety concerns and could also reflect limited compute capacity.

Taken together, the evaluations show that frontier models can now autonomously carry out multi-stage attacks against weakly defended networks, and that this capability is no longer limited to a single developer. How these models would perform against actively defended systems remains an open question, and defenders will need to keep tracking it as the models improve.
