William Liu, now a sophomore at Stanford, reflected on the stark differences between his educational experience and that of his younger sibling, who is graduating high school this year. Liu, who completed high school in 2024, noted that advanced AI tools like ChatGPT had already begun to disrupt classroom norms at that time. “Our educational experience has been vastly different, even though we’re just two years apart,” he stated.

As Liu graduated, educators were grappling with the implications of students using chatbots for assignments. The evolution of such tools has since accelerated, with emerging platforms like Claude Code enabling students to delegate even more tasks to AI. Recent conversations with high school students revealed an overwhelming sentiment: many struggle to name an assignment that AI couldn’t handle, from online quizzes to history presentations.

A notable example of this trend is a bot named Einstein, which recently gained viral attention for its capabilities. An advertisement for Einstein proclaimed, “Einstein checks for new assignments and knocks them out before the deadline.” Students were encouraged to provide their Canvas credentials, allowing the bot to handle tasks ranging from watching lectures to submitting homework and even taking exams. Skeptical of Einstein’s efficacy, I tested it by enrolling in a free online statistics course. The bot completed all assignments and quizzes in under an hour and achieved a perfect score, while I barely engaged with the course material.

Advait Paliwal, the 22-year-old entrepreneur behind Einstein, released the bot to illustrate just how proficient AI has become in handling schoolwork. “You can blame me,” he remarked, acknowledging the backlash from educators who viewed the tool as a means to facilitate academic dishonesty. He ultimately removed Einstein from circulation following multiple cease-and-desist letters, including one from Canvas’s parent company.

Paliwal defended his actions, arguing that raising awareness about the capabilities of AI is crucial for educators preparing for the future. “If I didn’t post about this, someone would have used the same technology and hidden it from the professors,” he explained. His creation not only sparked controversy but also highlighted a significant shift in the education landscape: AI tools can increasingly complete extensive tasks with minimal student intervention. An analysis revealed that the percentage of students in middle school or older who reported using AI for homework assistance rose by 14 points between May and December of the previous year.

In light of this growing reliance on AI, tech firms are keen to integrate these tools into educational settings. Last spring, major AI companies provided college students with free or discounted access to their services as finals approached. Initiatives like Claude Builder Clubs, announced by Anthropic, involve students hosting workshops in exchange for free access to their AI tools. Similarly, OpenAI recently offered college students $100 worth of credits for its coding tool, Codex.

Students affiliated with these AI firms report positive experiences, asserting that the advanced bots have enhanced their learning. Thor Warnken, a biology major at the University of Florida and an Anthropic ambassador, described using Claude to design personalized practice tests by analyzing his previous errors. Liu echoed this sentiment, citing Claude as a “fantastic” study partner capable of providing real-time clarification of course materials during lectures.

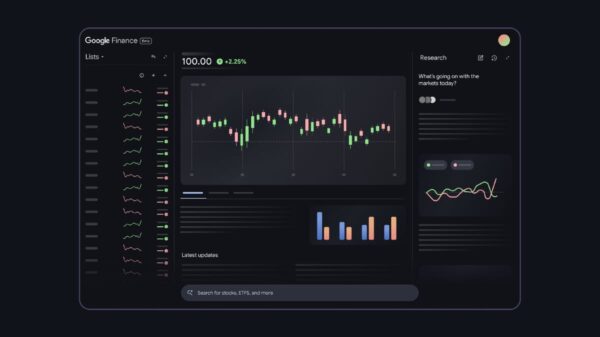

Instructors are also increasingly leveraging AI tools. Canvas has introduced a new teaching assistant designed to alleviate educators’ workloads by automating tasks deemed low in educational value, such as organizing course modules. Marc Watkins, a researcher at the University of Mississippi, illustrated the potential efficiency gains, suggesting that AI could drastically reduce grading time from hours to mere minutes. However, he raised concerns that such automation could erode the essential student-teacher relationship critical to education.

Amid these developments, many students express reservations about the increasing integration of AI into their academic lives. A Barnard sophomore, Natalie Lahr, stated her reluctance to use AI, reserving it only for professor-mandated tasks. She recounted a frustrating experience with a writing tutor who relied solely on AI-generated outlines, leaving her questioning the value of the session.

Some educators fear a “fully automated loop,” as the Modern Language Association described it last fall, in which both assignments and grading are performed by AI systems. To counteract this trend, instructors are resorting to scrutinizing students’ Google Docs histories to confirm that the work was written live. Yet recent advancements have produced human-typing simulators capable of mimicking real-time writing, which could undermine that check.

The ongoing rollout of sophisticated AI tools in education poses significant questions about the future of learning. As both students and educators adapt to this rapidly changing landscape, the potential for AI to reshape educational norms continues to grow, raising urgent discussions about its long-term implications for critical thinking and academic integrity.
