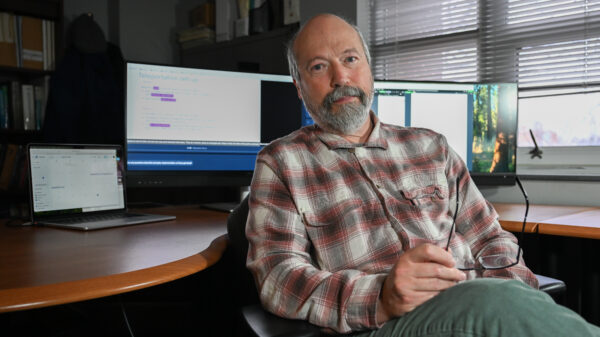

A study led by Oksana Babenko from the University of Alberta reveals that the increasing reliance on generative AI tools is reshaping learning behaviors and trust in educational resources among health profession students. Published in the journal International Medical Education, the research investigates how generative AI tools, such as ChatGPT, are influencing students’ perceptions of these technologies as lifelong learning partners. This evolving dynamic could significantly impact the future integration of AI in healthcare education and practice.

The study surveyed 558 health profession students aged 18 to 25 across various disciplines, including medicine, nursing, dentistry, pharmacy, and allied health. The findings indicate that generative AI has become a prevalent educational tool: more than 80% of respondents reported actively using AI-powered platforms for learning, idea generation, and problem-solving tasks.

This trend reflects a broader shift in educational methodologies as students increasingly engage with intelligent systems for instant feedback and information generation. Traditional methods of learning, such as textbooks and lectures, are evolving as technology mediates educational experiences. The study emphasizes the critical role of lifelong learning in healthcare, necessitating ongoing skill and knowledge updates. However, it also raises concerns that reliance on generative AI may shift the responsibility for learning away from the student and towards technology.

This transformation carries potential drawbacks. While AI tools can enhance learning efficiency, they may inadvertently limit critical thinking, peer interaction, and originality in problem-solving. The study's message is mixed: generative AI can support complex learning environments, yet students must still maintain the essential competencies that underpin effective clinical practice.

Trust Grows with Usage, but Gender and Geographic Disparities Persist

Interestingly, the research identifies a strong correlation between the frequency of generative AI use and students’ trust in these systems, with usage accounting for nearly 40% of the variation in trust levels. This relationship suggests that as students interact more with AI, they begin to see these tools not merely as supplementary resources but as reliable educational partners.

However, trust levels vary across demographics. Male students reported higher usage of and greater confidence in generative AI than their female peers, who approached the technology more cautiously because of ethical concerns. Geography also plays a role: students from the Global South exhibited significantly higher trust in AI than those from the Global North. This disparity is thought to stem from varying access to traditional educational resources, which may render generative AI tools more valuable in regions with limited educational infrastructure.

While higher trust levels in the Global South may indicate a reliance on AI as an alternative knowledge source, they could also reflect limited exposure to discussions around AI risks, including data privacy and misinformation. Furthermore, the study warns that increased trust does not equate to well-calibrated trust. Students who heavily depend on AI without a solid foundation in critical evaluation may become vulnerable to misinformation and overreliance on automated systems.

As generative AI continues to permeate health professions education, it is essential to integrate these technologies thoughtfully. While the advantages of AI in enhancing learning are clear, the study highlights concerns regarding overdependence, especially among students still developing their clinical judgment and critical thinking skills.

In healthcare, where the stakes are high, the ability to critically assess information and collaborate with colleagues is paramount for patient safety. Overreliance on AI-generated outputs may compromise these core competencies, raising alarms among educators about the potential erosion of essential skills such as communication, empathy, and teamwork that AI cannot replicate.

Importantly, the study also underscores the need for students to maintain a healthy skepticism towards AI as a source of information. While many students acknowledge the necessity for caution, a significant number express confidence in AI-generated content. This duality reveals a tension between trust and skepticism that will shape the future utilization of AI in healthcare.

To effectively address these challenges, the research calls for enhanced AI literacy among students. Understanding the workings, limitations, and potential biases of AI systems is crucial for their responsible use. Educational institutions are encouraged to incorporate training that focuses on the critical evaluation of AI outputs and the ethical implications of relying on them.

The findings advocate for a proactive approach to embedding AI within educational frameworks, emphasizing that it should not be treated merely as an external tool but integrated in ways that align with learning objectives while upholding professional standards. The study marks a pivotal moment, illustrating both the opportunities and risks associated with generative AI in healthcare education. Balancing innovation with the development of critical thinking and professional competence is essential as the next generation of healthcare professionals navigates an increasingly complex educational landscape.
