Generative AI has emerged as a powerful force in artificial intelligence, capable of producing new data that resembles its training examples. Unlike traditional models, which mainly classify or predict, this branch of AI focuses on creating original outputs such as text, images, music, or video. Recent advances, particularly in model scale and performance, have heightened interest in generative AI and shifted its role from analytical assistance toward creative collaboration.

Large language models, like OpenAI’s GPT-3 and Google’s LaMDA, can now draft articles and engage in conversations, while image generation systems create visuals from text prompts. Audio models are even synthesizing speech and music. However, these capabilities come with risks, including deepfakes, factual inaccuracies, and biased outputs, underscoring the necessity for responsible development and oversight.

The evolution of generative AI dates back several decades, with foundational work involving probabilistic approaches such as Gaussian mixture models. The introduction of deep learning brought significant breakthroughs, notably Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs). These models have since evolved, with recent advancements in diffusion models and Transformer-based architectures leading to state-of-the-art performance in tasks like image and audio synthesis.

GANs, introduced in 2014, generate high-fidelity samples quickly because sampling takes a single forward pass. They train a generator against a discriminator in a competitive framework, which makes them prone to training instability and mode collapse, where the generator produces only a narrow range of outputs. VAEs offer a more stable alternative based on probabilistic latent encodings, but often produce blurrier outputs. Diffusion models generate data by iteratively denoising random noise; they have become popular for their high-quality output and because they avoid the instability of adversarial training. Transformer-based models excel at natural language tasks thanks to their self-attention mechanisms.
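To make the self-attention idea a little more concrete, here is a minimal sketch in plain Python. It is an illustration under simplifying assumptions, not how any particular model is implemented: the function names `softmax` and `self_attention` are hypothetical, the toy `tokens` are made up, and queries, keys, and values are taken to be the token vectors themselves, whereas a real Transformer layer first applies learned projection matrices.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with identity projections.

    Assumption: queries, keys, and values are the token vectors themselves;
    a real Transformer layer applies learned weight matrices first.
    """
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        # Each output is the attention-weighted average of all value vectors.
        mixed = [
            sum(w * value[i] for w, value in zip(weights, tokens))
            for i in range(dim)
        ]
        outputs.append(mixed)
    return outputs


# Three toy 2-dimensional "token embeddings" (illustrative values only).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(tokens)
```

Each output vector is a weighted blend of every input vector, with larger weights for the inputs most similar to the current token. This is what lets every position in a sequence draw on context from all other positions in a single step.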

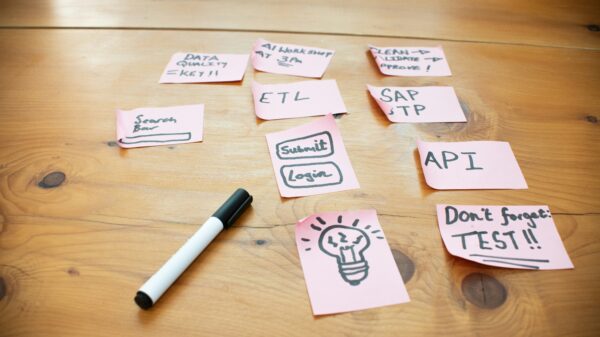

Generative AI is being harnessed across various sectors, from language and visual media to audio and video. In text generation, models like GPT-4 are reshaping content creation, supporting diverse applications including chatbots, translation, and programming assistance. Image generation tools such as DALL·E and Midjourney are revolutionizing creative design by facilitating rapid concept exploration, marketing material production, and product design.

In the audio domain, generative AI is transforming music and speech synthesis, enabling natural-sounding text-to-speech systems and dynamic sound effect generation. Video generation is an emerging frontier, where deepfakes and video prediction models illustrate both the potential of the technology and its ethical risks, particularly misinformation and identity misuse. Keeping generated frames temporally consistent remains a core difficulty, and it shows how hard it is for these models to produce reliable output.

As generative AI technologies become more prevalent, ethical and societal implications are increasingly scrutinized. Bias in models, often stemming from training on flawed datasets, raises concerns about reinforcing stereotypes. Additionally, the risk of misinformation through realistic deepfakes threatens public trust, pushing researchers to develop detection tools and explore legal frameworks for accountability. Inaccuracies, or hallucinations, in generated content can pose significant risks in critical sectors, emphasizing the need for improved reliability and explainability.

The ownership of AI-generated content also raises significant questions about intellectual property and authorship. It remains unclear whether the creator of the model, the user, or the original artists hold rights over AI-generated works, which has led to ongoing debates and legal challenges. As generative AI continues to evolve, its impact on employment and on the quality of creative work is a subject of considerable concern, prompting discussion of new professional roles centered on collaboration with AI technologies.

Looking ahead, challenges remain for generative AI, including aligning systems with human values and ensuring robustness in unfamiliar scenarios. Efficiency in training and inference will be crucial for broader accessibility, and the development of multimodal AI systems capable of handling diverse data types poses both opportunities and challenges. Governance frameworks will also play an essential role in ensuring responsible deployment as generative AI becomes increasingly integrated into society. The journey of generative AI reflects a significant shift in technology, offering remarkable opportunities while demanding careful management of its risks and limitations.
