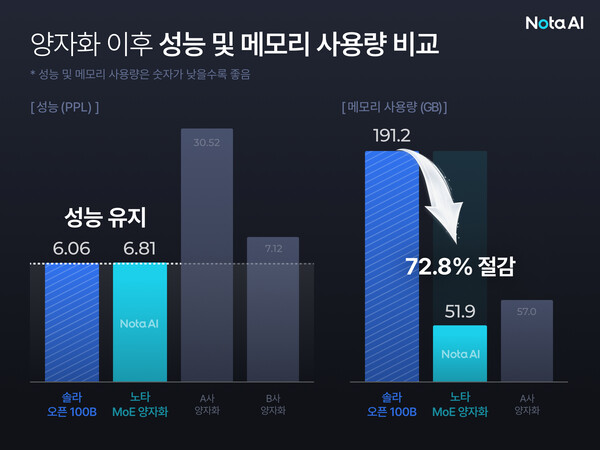

Nota, an AI model optimisation company, announced on Thursday that it has developed a quantisation technology that reduces the memory usage of Upstage’s large language model (LLM) Solar 100B by 72.8%. The work was carried out as part of a Ministry of Science and ICT project to develop an independent AI foundation model.

Nota’s approach, called “Nota MoE quantisation”, is designed specifically for the mixture-of-experts (MoE) structure, regarded as a next-generation LLM architecture. Existing quantisation techniques compress the entire model uniformly, which typically degrades performance. Nota’s method instead assesses the characteristics of each expert, preserving precision where it is needed and compressing less critical experts more aggressively.
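
Nota has not published the details of its method. As a rough illustration of the general idea of expert-aware mixed precision, the Python sketch below scores each expert by how much error low-bit quantisation introduces and keeps the most sensitive experts at higher precision. Everything here is an assumption for illustration, not Nota’s technique: the function names, the symmetric per-tensor quantiser, the squared-error sensitivity score, the 3-bit/8-bit split, the 25% keep fraction and the synthetic expert weights.

```python
import random

def quantise_dequantise(weights: list[float], bits: int) -> list[float]:
    """Symmetric uniform per-tensor quantisation (bits >= 2), returned in dequantised form."""
    qmax = 2 ** (bits - 1) - 1                  # largest signed level, e.g. 3 for 3-bit
    scale = (max(abs(w) for w in weights) or 1.0) / qmax
    return [max(-qmax, min(qmax, round(w / scale))) * scale for w in weights]

def quant_error(weights: list[float], bits: int) -> float:
    """Mean squared error introduced by quantising at the given bit width."""
    deq = quantise_dequantise(weights, bits)
    return sum((w - d) ** 2 for w, d in zip(weights, deq)) / len(weights)

def assign_expert_bits(experts: dict[str, list[float]], low_bits: int = 3,
                       high_bits: int = 8, keep_fraction: float = 0.25) -> dict[str, int]:
    """Hypothetical policy: keep the experts most damaged by low-bit quantisation at high precision."""
    errors = {name: quant_error(w, low_bits) for name, w in experts.items()}
    ranked = sorted(errors, key=errors.get, reverse=True)   # most sensitive first
    n_keep = max(1, round(keep_fraction * len(ranked)))
    sensitive = set(ranked[:n_keep])
    return {name: high_bits if name in sensitive else low_bits for name in experts}

# Synthetic example: 8 experts with Gaussian weights; expert_3 gets one large outlier,
# which stretches its quantisation scale and makes low-bit quantisation very lossy.
random.seed(0)
experts = {f"expert_{i}": [random.gauss(0.0, 1.0) for _ in range(256)] for i in range(8)}
experts["expert_3"][0] = 40.0

bits = assign_expert_bits(experts)
avg_bits = sum(bits.values()) / len(bits)
print(bits)
print(f"average bits per weight: {avg_bits:.2f}")
```

In this toy run the outlier-heavy expert_3 is kept at 8 bits and the average comes out at 4.25 bits per weight. Practical quantisers commonly measure sensitivity on calibration data and quantise per channel or per group rather than per tensor; the per-tensor version is used here only to keep the sketch short.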

Applying the technique cut Solar 100B’s memory footprint from 191.2 GB to 51.9 GB. Despite this reduction, perplexity (PPL), a measure of how well a model predicts text where lower is better, rose only modestly, to 6.81 from the original model’s 6.06. Nota said other general-purpose quantisation methods can degrade performance by more than fivefold. The company has also filed a patent application for the technology.
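
The reported figures can be checked with a few lines of arithmetic. The sketch below also shows how perplexity is conventionally computed, as the exponential of the average negative log-probability per token. The 16-bit baseline used to estimate the effective bits per weight is an assumption (the article does not state the original precision), and that estimate ignores quantisation metadata such as scales.

```python
import math

def perplexity(token_log_probs: list[float]) -> float:
    """Perplexity = exp(-mean natural-log probability per token); lower is better."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))

# Figures reported by Nota for Solar 100B
original_gb, quantised_gb = 191.2, 51.9
ppl_original, ppl_quantised = 6.06, 6.81

reduction = 1 - quantised_gb / original_gb        # fraction of memory saved
compression = original_gb / quantised_gb          # size ratio
ppl_increase = ppl_quantised / ppl_original - 1   # relative rise in perplexity
# Assumption: the original weights are stored in 16-bit precision
effective_bits = 16 * quantised_gb / original_gb

print(f"memory saved: {reduction:.1%}, compression: {compression:.2f}x")
print(f"perplexity increase: {ppl_increase:.1%}")
print(f"effective bits per weight (16-bit baseline): {effective_bits:.2f}")

# Sanity check of the definition: a model assigning p = 0.5 to every token has PPL 2
print(perplexity([math.log(0.5)] * 4))
```

With the rounded gigabyte figures the saving comes out at roughly 72.9%, in line with the reported 72.8%, and perplexity rises by about 12%.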

The result matters for deploying large models in resource-constrained settings, including on-device applications such as robots and vehicles. Nota said the technology could also reduce operating costs for companies that struggle to secure the high-end GPUs needed to run large AI models.

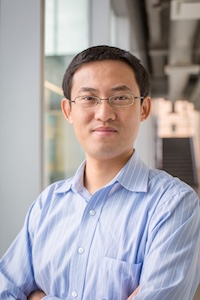

Nota Chief Executive Chae Myung-soo said, “As demand grows to implement large-scale models on devices, Nota’s lightweighting and optimisation technologies will play a key role.” The remark reflects a broader industry push for efficient AI, as companies face pressure to deliver smarter, faster and more cost-effective AI capabilities, and compression techniques such as Nota’s quantisation are one way to meet that demand.

As AI models grow larger, the ability to deploy them in more accessible forms addresses an ongoing market need. Nota’s work could encourage further advances in AI optimisation and change how companies approach AI development and deployment.
