Defense Secretary Pete Hegseth has issued a stark ultimatum to artificial intelligence company Anthropic, threatening to blacklist it from working with the U.S. military due to its refusal to relax its safety standards. The warning emerged during a meeting on Tuesday between Hegseth and Anthropic CEO Dario Amodei, according to sources familiar with the discussions who were not authorized to speak publicly.

For several months, Amodei has maintained that the company will not allow its AI to be used for domestic mass surveillance or AI-controlled weaponry, describing such applications as “illegitimate” and “prone to abuse.” During the meeting, Amodei reiterated these positions, emphasizing the ethical boundaries that Anthropic has established.

Hegseth contended that Anthropic’s technology should be available for all “lawful” purposes, which could encompass AI-directed warfare and surveillance operations. Despite Anthropic’s firm stance, Pentagon officials indicated that the Defense Department intends to continue using the company’s tools regardless of its preferences. If Anthropic does not acquiesce, Hegseth is prepared to invoke the Defense Production Act, a law traditionally employed during national emergencies to compel companies to produce essential products for national security.

If invoked, the act would obligate Anthropic to let the military use its technology “whether they want to or not,” according to a senior Pentagon official. In addition, the Trump administration plans to classify Anthropic as a “supply chain risk,” effectively placing the company on a government blacklist, according to the same sources.

Hegseth and other Trump administration officials have labeled Anthropic’s stance on domestic surveillance and AI weaponry “woke AI.” The term has emerged in political discourse as a criticism of safety protections in AI technology; White House AI czar David Sacks drafted an executive order last year that targeted tech firms on similar grounds.

In contrast to Anthropic’s hardline approach, competing firms such as OpenAI and Google have agreed to allow their AI tools to be used in any “lawful” scenario. Similarly, Elon Musk’s xAI recently received approval for use in classified settings. The Pentagon previously awarded contracts worth up to $200 million each to Anthropic, Google, OpenAI, and xAI, with Anthropic the first to be granted clearance for classified applications after its model was deemed the most advanced and secure for sensitive military use.

The tension between Anthropic and the White House comes at a critical juncture, as the company is preparing to go public later this year. It remains uncertain how this friction with the administration will affect investor sentiment. Amodei noted that Anthropic’s valuation and revenue have only increased since the company took its stand against Trump officials regarding the deployment of AI in military contexts.

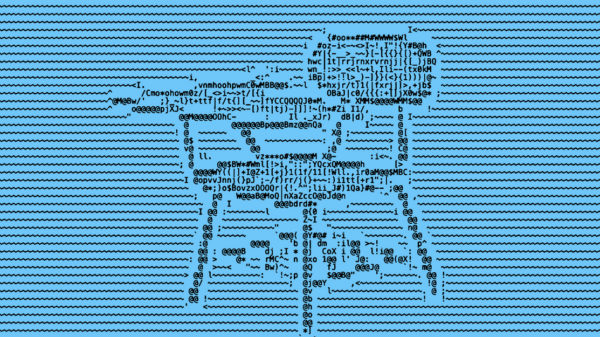

“My main fear is having too small a number of ‘fingers on the button,’ such that one or a handful of people could essentially operate a drone army without needing any other humans to cooperate to carry out their orders,” Amodei wrote in a January essay outlining his concerns. He argued that fully autonomous weapons should be approached with caution and should not be deployed without appropriate safeguards.
