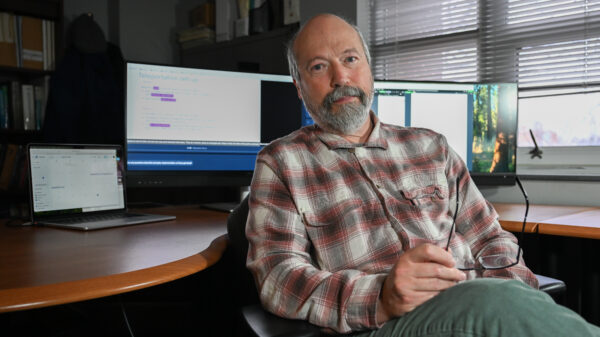

Oscar Brownfield, representing himself in federal court in Oklahoma, recently sought sanctions against the lawyers for his employer, the Cherokee County School District, accusing them of knowingly making false claims in ongoing litigation. His attempt to challenge certain pleadings in the school district's summary judgment motion backfired, however, when opposing counsel discovered that his motion, drafted with the help of artificial intelligence, cited cases that do not exist.

The discovery prompted the defense to seek $7,000 in sanctions to recover the time and resources it spent reviewing and responding to Brownfield's filing. Last month, a magistrate judge for the Eastern District of Oklahoma fined Brownfield $500, well below the amount requested, and warned that future violations could lead to "more severe ramifications."

The case highlights the growing overlap between artificial intelligence and legal practice, and the concerns it raises about the reliability of AI-generated content. AI tools have become popular across many sectors, but their use in law poses particular challenges of accuracy and accountability. Brownfield's filing illustrates one specific pitfall: a generative AI tool can produce citations to cases that were never decided, and those citations can end up in a court document if no one checks them.

Legal experts have debated the implications of AI in law, noting that while these tools can improve efficiency and support research, they also carry risks, including fabricated information that can undermine a case. Brownfield's experience is a cautionary tale for anyone representing themselves with AI assistance: every case an AI tool cites needs to be verified before it is filed.

As technology becomes further integrated into the legal system, the lessons of Brownfield's case may reach well beyond it. Lawyers and litigants alike should stay alert to the limitations of AI tools. The case underscores the need for a balanced approach in which technology complements human expertise rather than replacing it.