As artificial intelligence evolves from a mere innovation cycle to a foundational infrastructure, industry leaders are grappling with the implications of rapid advancements. Recently, **Anthropic**, a prominent AI company, revised its Responsible Scaling Policy, abandoning a previous commitment to hold back the development of more powerful systems until safety conditions were met. The decision raised eyebrows not because the industry is competitive or because innovation must continue, but because of the rationale senior leadership gave for it: competitive pressure and the lack of binding regulation.

This shift reflects a broader market design problem rather than a moral failing on the part of AI firms. Coinciding with the policy revision, Anthropic launched a Substack newsletter dedicated to its retired model, **Claude 3 Opus**, a move that highlights the complex relationship between AI systems and their lifecycle. Unlike traditional industrial machines, AI software can be archived, reactivated, and repurposed, extending its relevance and influence well beyond initial deployment. This development raises questions regarding authorship, attribution, and intellectual property, particularly when AI outputs are presented as serialized essays instead of transient responses.

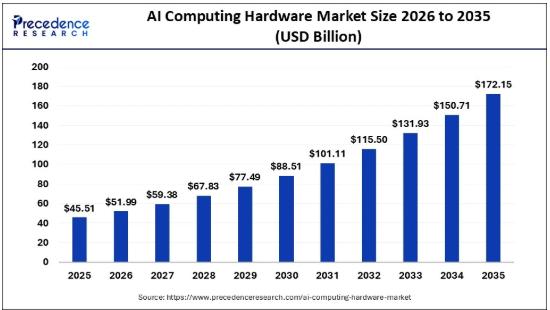

These developments prompt a fundamental question: Are we prepared for the rapid evolution of frontier artificial intelligence? The disparity between the pace of AI advancements and the slower tempo of legal and regulatory systems is becoming increasingly evident. While capability in AI scales swiftly through innovations in computing power, data availability, and architectural design, the legal frameworks that govern these technologies are invariably slower, moving through processes of consultation, legislation, and enforcement.

The crux of the issue extends beyond the intentions of individual companies like **Anthropic** or **OpenAI** regarding safety. The pressing concern lies in whether our legal architecture is adequately designed to cope with the accelerated pace of technological capability. In other sectors, regulatory frameworks adapt once certain thresholds are crossed; for instance, **commercial aviation** mandates rigorous certification and airworthiness standards, while **pharmaceuticals** require extensive clinical trials before market introduction. However, in the realm of AI, such threshold governance remains fragmented and underdeveloped.

The **European Union’s AI Act**, for example, introduces specific obligations for general-purpose AI models exhibiting systemic risk, yet globally harmonized definitions of frontier capabilities are still lacking. Most jurisdictions do not automatically trigger independent audits based solely on computational scale. As a result, decisions about pausing development or redefining acceptable risk rest predominantly with individual companies, which rely on voluntary frameworks like Anthropic’s Responsible Scaling Policy or OpenAI’s Preparedness Framework.

This situation is not an argument for imposing bureaucratic processes on every innovation. History shows that technological advancements generally outpace regulation, and quite rightly so, as exploration and discovery necessitate the freedom to innovate. However, as AI begins to transform fundamental technosocial and technolegal paradigms, the absence of clearly defined capability thresholds is more than mere regulatory delay; it is a structural gap.

Companies face a dilemma: if they slow down voluntarily, they risk ceding competitive advantage, yet if they move forward unchecked in a regulatory vacuum, they may dramatically reshape the environment before democratic institutions can establish the parameters of such transformation. The implications of generative AI extend far beyond conversational tools; they are already influencing knowledge production, creative sectors, research workflows, and decision-making processes.

If artificial intelligence is indeed transitioning from product to infrastructure at an accelerating pace, the question of whether we are constructing the necessary technolegal architecture in time becomes more urgent. That urgency grows when we consider that “we” encompasses vastly different capacities and constraints across the globe. While the **Global North** increasingly shapes AI development, it often externalizes its environmental costs to the **Global South**, where nations grapple with the dual challenge of establishing both technological and regulatory infrastructure with limited resources.

As AI continues to reshape our societies, the need for a cohesive and comprehensive regulatory framework becomes critical. It is imperative that the dialogue surrounding AI governance evolves to keep pace with technological advancements, ensuring that the benefits of AI are equitably distributed and that potential harms are managed effectively.
