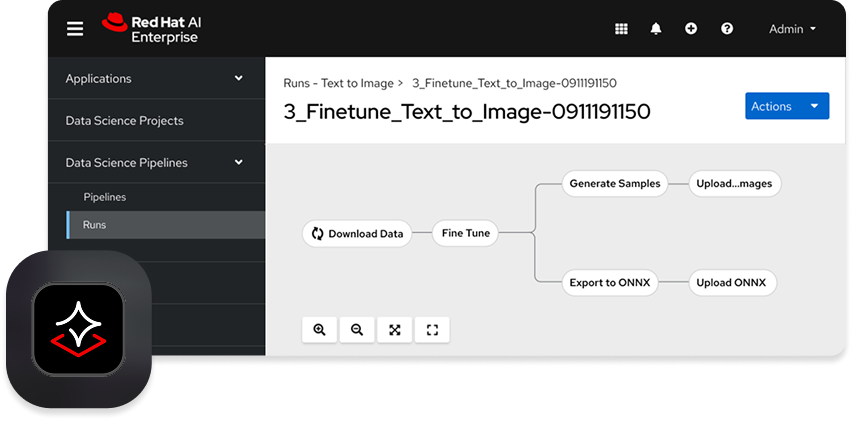

Red Hat has unveiled its new unified AI platform, Red Hat AI Enterprise, aimed at streamlining the deployment and management of AI models, agents, and applications. The announcement comes during a period when many organizations find themselves stalled in AI pilot projects due to fragmented tools and infrastructure.

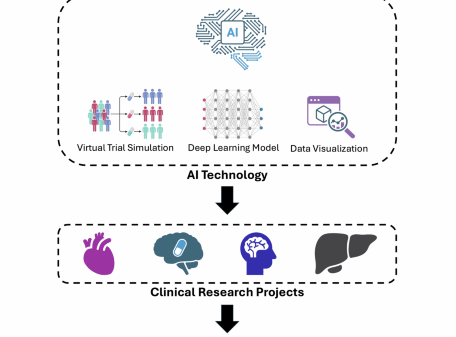

With Red Hat AI Enterprise, the company seeks to provide a standardized environment that facilitates AI inference, model customization, tuning, and deployment across various hardware and frameworks. This initiative addresses the challenges faced by enterprises in transitioning from experimentation to full-scale AI implementation.

The platform integrates optimized runtimes tailored for high-throughput and low-latency model serving, utilizing technologies such as vLLM and the llm-d framework. Additionally, it introduces a standardized API layer through the Llama Stack and supports the Model Context Protocol (MCP), which lets models and agents connect to external tools and data sources.

To instill greater confidence in AI applications, Red Hat AI Enterprise incorporates several monitoring and evaluation tools. These include drift detection, bias monitoring, and model explainability features, as well as the RAGAS evaluation framework, which is designed to assess the quality of retrieval-augmented generation (RAG) systems. This focus on operational safety and compliance is increasingly critical as AI systems become more integrated into business processes.

“Red Hat AI Enterprise is more than just a collection of tools—it is a strategic foundation for the AI-driven era,” said Jennifer Vargas, principal product marketing manager at Red Hat, in a blog post. She emphasized that the platform closes the gap between experimentation and production, enabling organizations to innovate at a faster pace while maintaining necessary security protocols.

Alongside the launch of AI Enterprise, Red Hat also introduced a new version of its existing platform, Red Hat AI 3.3. This update expands the model ecosystem with production-ready, compressed versions of models such as Mistral-Large-3, Nemotron-Nano, and Apertus-8B-Instruct. The release enhances hardware compatibility by adding support for AMD MI325X accelerators, broadening certification for NVIDIA’s Blackwell Ultra, and offering a technology preview for Intel CPUs.

Another significant addition in Red Hat AI 3.3 is the technology preview of Models-as-a-Service (MaaS). This feature allows IT departments to set up self-service access to privately hosted models, making it easier for teams across an enterprise to adopt AI. It reflects the growing trend toward democratizing AI tools within organizations.

The update further includes the Red Hat AI Python Index, which offers access to enterprise-grade versions of essential tools such as Docling, SDG Hub, and Training Hub. The update also provides real-time telemetry across various AI workloads and deployments, facilitating better resource management and efficiency.

Furthermore, the new version introduces a technology preview of a NeMo Guardrails integration, designed to assist developers in enforcing operational safety and alignment in AI applications. This aligns with the increasing emphasis on responsible AI development at a time when organizations are under scrutiny to ensure that their models operate ethically and transparently.

Taken together, the announcements of Red Hat AI Enterprise and Red Hat AI 3.3 reflect a concerted effort by Red Hat to help enterprises move from pilot projects to full-scale AI operations.
